A high-speed optical switch routes signals in the optical domain, using light paths rather than ordinary packet forwarding inside an electronic switch ASIC. That sounds like a small distinction until the traffic grows large enough that converting every optical signal into electronics, processing it, and converting it back into light becomes a major source of cost, power, latency, and complexity. The broader category is covered in the intelligent optical switch article.

The original version of this article reported a 2017 demonstration by Japan's National Institute of Information and Communications Technology (NICT): a 53.3 Tb/s optical switching experiment for short-reach data-center networks. The work combined spatial division multiplexing (SDM), multi-core optical fiber, and a newly developed high-speed spatial optical switch system able to operate at packet granularity.

What NICT Demonstrated

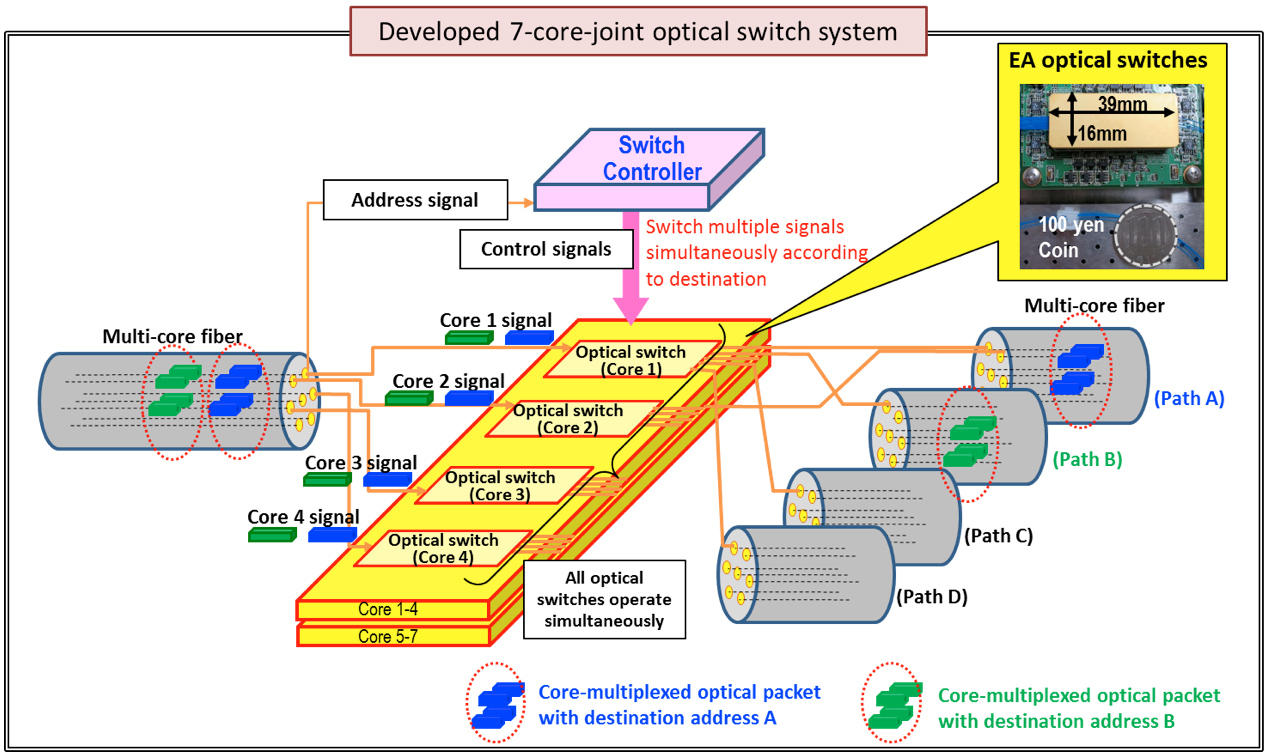

NICT developed a 7-core-joint optical switching system that could switch all cores of a 7-core multi-core fiber at the same time. The switching time was 80 ns, fast enough for a time-slotted optical network experiment in which packet-sized bursts could be added, dropped, or passed through the node without being converted into electronic packets at the switching point.
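To see why an 80 ns switching time matters for packet-sized bursts, it helps to look at how much of a time slot is left for data. The sketch below is illustrative only: it assumes the entire switching time is reserved as a guard interval in every slot, and the payload durations are hypothetical values, not figures from the experiment.

```python
def slot_efficiency(payload_ns: float, guard_ns: float = 80.0) -> float:
    """Fraction of a time slot that carries data.

    guard_ns defaults to the 80 ns switching time quoted above; treating the
    whole switching time as the per-slot guard interval is an assumption.
    """
    if payload_ns <= 0 or guard_ns < 0:
        raise ValueError("payload_ns must be positive and guard_ns non-negative")
    return payload_ns / (payload_ns + guard_ns)


def min_payload_ns(target_efficiency: float, guard_ns: float = 80.0) -> float:
    """Shortest payload duration that still reaches the target efficiency."""
    if not 0 < target_efficiency < 1:
        raise ValueError("target_efficiency must be strictly between 0 and 1")
    return guard_ns * target_efficiency / (1 - target_efficiency)


# Hypothetical payload durations, chosen only to show the trend.
for payload in (80.0, 1000.0, 10000.0):
    print(f"{payload:>7.0f} ns payload: {slot_efficiency(payload):.1%} of the slot is data")

print(f"90% efficiency needs roughly {min_payload_ns(0.9):.0f} ns of payload")
```

Under these assumptions a burst as short as the guard interval wastes half the slot, while bursts of a microsecond or longer keep the overhead below ten percent.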

The testbed used 64 wavelength channels modulated at 32 Gbaud with polarization-division-multiplexed QPSK, for a nominal aggregate capacity of 53.3 Tb/s across the seven switched cores. The optical paths included multi-core fiber segments of 28 km, 10 km, and 2 km. Although the experiment used seven active cores, the use of 19-core fiber segments showed the broader direction: increasing capacity not only by adding wavelengths, but also by adding spatial paths inside the same fiber cladding.
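The capacity figure can be sanity-checked with simple arithmetic. The sketch below multiplies out the parameters quoted above (64 channels, 32 Gbaud, QPSK at 2 bits per symbol, two polarizations, seven cores). The overhead fraction is an assumption: the article does not say how the nominal 53.3 Tb/s was derived from the raw line rate, and a value of about 7% is simply one that reconciles the two numbers.

```python
def raw_capacity_tbps(channels: int, gbaud: float, bits_per_symbol: int,
                      polarizations: int, cores: int) -> float:
    """Raw line rate in Tb/s, before any coding or framing overhead."""
    total_gbps = channels * gbaud * bits_per_symbol * polarizations * cores
    return total_gbps / 1000.0


# Parameters taken from the article; QPSK carries 2 bits per symbol.
raw = raw_capacity_tbps(channels=64, gbaud=32, bits_per_symbol=2,
                        polarizations=2, cores=7)

# ASSUMPTION: about 7% of the raw rate is overhead. This is not stated in
# the article; it is chosen because it lands on the quoted 53.3 Tb/s.
ASSUMED_OVERHEAD_FRACTION = 0.07
net = raw * (1 - ASSUMED_OVERHEAD_FRACTION)

print(f"raw: {raw:.2f} Tb/s, net under assumed overhead: {net:.2f} Tb/s")
```

The raw product is about 57.3 Tb/s, so most of the headline number comes from the factor of seven contributed by the spatial dimension.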

Why Spatial Division Multiplexing Matters

Traditional single-mode fiber has carried the internet astonishingly far by using better modulation, coherent detection, dense wavelength division multiplexing, stronger forward error correction, and more efficient transponders. But standard fiber is not infinitely scalable. Spatial division multiplexing adds another axis of capacity by using multiple cores or modes so several optical paths can share a physical cable structure.

Multi-core fiber is especially interesting for dense data-center and research networks because it can carry many parallel optical lanes along one physical route. If an optical switch can move those cores jointly, a network can treat them as a spatial super-channel rather than as unrelated fibers. That can simplify high-capacity path setup and make packet- or burst-level optical routing more practical.

Packet Switching Versus Circuit Switching

Most deployed optical switching today is circuit-oriented. An optical circuit switch establishes a light path between endpoints and keeps it in place long enough to carry a flow, a wavelength, a test connection, or a cluster-to-cluster link. This can be highly efficient, but it does not behave like an Ethernet switch that examines every packet and forwards it independently.

Optical packet switching aims at a harder problem: moving packet-sized bursts in the optical layer. The difficulty is that light is not easily stored while a switch reads a header, resolves contention, and waits for an output. Electronics can buffer packets in memory; optical networks must use time slots, labels, scheduling, wavelength choices, deflection, or other techniques to avoid collisions. NICT's work was notable because it pushed optical switching toward packet granularity at very high aggregate capacity.
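One way to picture contention avoidance through time slots is a scheduler that never lets two bursts claim the same output in the same slot. The sketch below is a minimal first-fit illustration, not the method used in NICT's experiment: the function name and port numbers are hypothetical, and it assumes a burst can wait in electronic memory at the network edge until its slot arrives, since it cannot wait inside the optical switch.

```python
from collections import defaultdict


def schedule_bursts(requests):
    """Assign each (input_port, output_port) request to the earliest time
    slot in which both the input and the output are still free.

    Returns a list of (slot, input_port, output_port) in request order.
    """
    busy_inputs = defaultdict(set)   # slot -> input ports already sending
    busy_outputs = defaultdict(set)  # slot -> output ports already receiving
    schedule = []
    for inp, out in requests:
        slot = 0
        # First-fit search: skip any slot where this burst would collide.
        while inp in busy_inputs[slot] or out in busy_outputs[slot]:
            slot += 1
        busy_inputs[slot].add(inp)
        busy_outputs[slot].add(out)
        schedule.append((slot, inp, out))
    return schedule


# Hypothetical requests: three bursts want output 2, one wants output 3.
demo = schedule_bursts([(0, 2), (1, 2), (1, 3), (3, 2)])
for slot, inp, out in demo:
    print(f"slot {slot}: input {inp} -> output {out}")
```

The three bursts bound for output 2 are spread over slots 0, 1, and 2, while the burst for output 3 shares slot 0 because it conflicts with nothing. A real controller would have to make the same kind of decision within the nanosecond-scale budget the article describes.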

What Changed Since 2017

The basic pressure behind NICT's demonstration has intensified. Data-center traffic is increasingly east-west, moving between servers, accelerators, storage, and top-of-rack networks rather than simply entering and leaving the building. AI training clusters and high-performance computing systems have made the interconnect a first-class scaling problem.

Research has continued on nanosecond optical switching and control. A 2022 Nature Communications paper demonstrated a nanosecond optical switching and control system for data-center networks, including label control, optical flow control, and clock distribution. The reported proof-of-concept reached 43.4 ns for the overall switching and control operation and 3.1 ns for data recovery, without burst-mode clock-and-data-recovery receivers.

Commercial momentum has grown more strongly around optical circuit switching than packet-granularity optical switching. Lumentum's current optical circuit switch portfolio, for example, includes 64x64 and 300x300 MEMS-based platforms aimed at AI and cloud networks. These systems create transparent any-to-any optical paths and are positioned as a way to reduce optical-electrical-optical conversion, latency, and power in large deterministic fabrics. They do not solve the exact same problem as NICT's 2017 optical packet-switching demonstration, but they show that data-center optical switching has moved from laboratory curiosity toward infrastructure planning.

Why Optical Switching Is Hard

- Contention: Two optical packets may want the same output at the same time, and there is no simple optical equivalent of cheap electronic packet memory.

- Synchronization: Packet-granularity optical systems need extremely precise timing so bursts arrive when the switch is ready.

- Receiver recovery: Coherent and burst-mode receivers must lock quickly enough to handle discontinuous packets.

- Insertion loss: Switch elements, connectors, splitters, and fiber spans reduce optical power and can shrink the link budget.

- Control speed: The controller must read labels or schedules, resolve conflicts, and drive the optical hardware fast enough to matter.

- Operations: A production system needs telemetry, fault handling, security, maintenance workflows, and compatibility with existing packet networks.
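The insertion-loss item above lends itself to a small worked example. The sketch below subtracts a set of losses from a launch power and compares the result with a receiver sensitivity. Every number in it is a hypothetical placeholder: the 40 km span is just the sum of the fiber segments mentioned earlier, and the attenuation, switch loss, connector loss, launch power, and sensitivity are illustrative values, not measurements from any system discussed here.

```python
def link_margin_db(launch_dbm: float, losses_db: dict, sensitivity_dbm: float) -> float:
    """Remaining margin in dB after subtracting every loss from the launch power."""
    received_dbm = launch_dbm - sum(losses_db.values())
    return received_dbm - sensitivity_dbm


# ASSUMPTION: all values below are illustrative placeholders.
losses = {
    "fiber (40 km at 0.2 dB/km)": 40 * 0.2,
    "optical switch": 3.0,
    "connectors (4 at 0.5 dB)": 4 * 0.5,
}

margin = link_margin_db(launch_dbm=0.0, losses_db=losses, sensitivity_dbm=-18.0)

for name, loss in losses.items():
    print(f"{name}: {loss:.1f} dB")
print(f"margin: {margin:.1f} dB")
```

With these placeholder values the switch alone consumes 3 dB of a 5 dB margin's worth of headroom, which is why lower insertion loss keeps appearing on optical switching roadmaps.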

Where It Fits

Ultra-high-speed optical switching is most compelling where the network has enormous bandwidth demand and enough structure to exploit direct light paths. That includes AI clusters, HPC systems, storage fabrics, data-center interconnects, carrier research networks, and optical testbeds. The likely near-term pattern is hybrid: electronic packet switches continue handling bursty, fine-grained traffic, while optical switches create high-capacity paths for large flows, scheduled exchanges, restoration, or accelerator-cluster communication.

For network architects, the question is not whether optics will replace switching logic everywhere. It is where optics can remove avoidable conversions, cabling complexity, and power draw without making the control plane fragile. The answer depends on traffic patterns, topology, failure domains, and whether the system can schedule or predict enough traffic to keep optical circuits busy.
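The question of keeping optical circuits busy can be made concrete with a rough rule: a circuit pays off only when the transfer lasts much longer than the time spent reconfiguring the switch. The sketch below sorts flows into optical and electronic paths on that basis. The link rate, reconfiguration time, utilization threshold, and flow sizes are all hypothetical, and real placement decisions would also weigh topology and failure domains.

```python
def circuit_utilization(flow_bytes: float, link_gbps: float, reconfig_s: float) -> float:
    """Share of the circuit's lifetime spent moving data rather than reconfiguring."""
    transfer_s = flow_bytes * 8 / (link_gbps * 1e9)
    return transfer_s / (transfer_s + reconfig_s)


def split_flows(flows: dict, link_gbps: float = 400.0, reconfig_s: float = 0.010,
                min_utilization: float = 0.8):
    """Send a flow over an optical circuit only if it would keep the circuit busy.

    ASSUMPTION: the default link rate, reconfiguration time, and threshold
    are illustrative, not properties of any specific product.
    """
    optical, electronic = [], []
    for name, size in flows.items():
        busy = circuit_utilization(size, link_gbps, reconfig_s)
        (optical if busy >= min_utilization else electronic).append(name)
    return optical, electronic


# Hypothetical flows, sizes in bytes.
flows = {"checkpoint": 10e9, "rpc": 1e6, "shuffle": 5e9}
optical, electronic = split_flows(flows)
print("optical circuit:", optical)
print("electronic packet switch:", electronic)
```

Under these assumptions the multi-gigabyte transfers justify a circuit, while the megabyte-sized exchange stays on the electronic packet switch, which matches the hybrid pattern described above.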

The 2017 Result in Context

NICT's 53.3 Tb/s result remains important because it combined several hard pieces at once: coherent transmission, SDM, time-division switching, joint switching of multiple fiber cores, and packet-granularity operation. The team described future work around faster response, lower insertion loss, flatter frequency response, coherent burst-mode receivers, and higher-order modulation formats for better spectral efficiency.

That roadmap still reads like a useful checklist. The strongest future optical switches will not be judged only by raw capacity. They will also need fast control, low loss, broad spectral behavior, clean integration with packet networks, and operational tooling that data-center teams can trust at scale.

Practical Takeaways

- Optical packet switching and optical circuit switching are related, but they solve different timing and control problems.

- Spatial division multiplexing can increase capacity by adding cores or modes, not only by adding wavelengths.

- Nanosecond switching is useful only when synchronization, contention handling, and receiver recovery are solved with equal care.

- AI and HPC networks are renewing interest in optical switching because power, latency, and cabling scale are becoming harder constraints.

- Hybrid electronic/optical designs are the most realistic path for many production networks.

The 2017 NICT demonstration did not mean every data-center switch would soon become an optical packet switch. It showed something subtler and more durable: the optical layer can be made faster, more spatially dense, and more packet-aware than conventional circuit-only assumptions suggested. In 2026, that idea sits directly in the path of the biggest networking problem in computing: how to move more data between more machines without letting the interconnect consume the whole power and latency budget.