Immersive training is strongest when it improves skill transfer, safer rehearsal, and deliberate practice rather than simply making training look futuristic. The real question is whether a simulation helps people notice the right cues, make better decisions, execute the task more cleanly, and retain those gains when the headset comes off.

That is where AI has become genuinely useful. It helps turn immersive environments into simulation-based training systems with stronger computer vision, gesture recognition, automatic speech recognition, multimodal learning, telemetry, and sometimes digital twin links. Strong deployments still depend on validated scoring rubrics, instructors, and scenario designers who understand the job being rehearsed.

This update reflects the field as of March 18, 2026, and leans mainly on FAA and EASA qualification milestones, Army synthetic-training programs, DARPA ACE, current systematic reviews, randomized trials, and recent XR tutoring and feedback research. Inference: the biggest 2026 gains are coming from better adaptive feedback, more objective assessment, richer AI role-play, and wider access to high-fidelity rehearsal, not from replacing instructors with unbounded chatbots.

1. Adaptive Difficulty Modulation

Adaptive difficulty matters because skill growth usually happens in the zone between boredom and overload. AI can adjust pacing, task burden, hinting, and scenario complexity so the learner keeps practicing at an effective stretch level instead of repeating drills that are too easy or too chaotic.

The 2025 review of intelligent and robot tutoring systems describes immediate feedback, mastery progression, and real-time adaptivity as core strengths of modern tutoring architectures, and the 2025 JMIR randomized trial on immersive emergency training found that VR with automated feedback produced better long-term knowledge retention than a video seminar control. Inference: adaptive difficulty is strongest when it protects deliberate practice rather than merely turning training into a game-like difficulty slider.
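One way to picture the "effective stretch level" idea is a controller that watches the recent success rate and nudges difficulty to keep it inside a target band. The sketch below is illustrative only: the class name, the 60 to 85 percent band, and the five-attempt window are assumptions, not values taken from the cited studies.

```python
from collections import deque


class DifficultyController:
    """Keeps a learner's recent success rate inside a target band (assumed values)."""

    def __init__(self, level=3, min_level=1, max_level=10,
                 low=0.6, high=0.85, window=5):
        self.level = level
        self.min_level = min_level
        self.max_level = max_level
        self.low = low      # below this success rate, ease off
        self.high = high    # above this success rate, add challenge
        self.recent = deque(maxlen=window)

    def record(self, success):
        """Log one attempt and return the level to use for the next attempt."""
        self.recent.append(1 if success else 0)
        if len(self.recent) < self.recent.maxlen:
            return self.level  # not enough evidence yet
        rate = sum(self.recent) / len(self.recent)
        if rate > self.high and self.level < self.max_level:
            self.level += 1
            self.recent.clear()  # gather fresh evidence at the new level
        elif rate < self.low and self.level > self.min_level:
            self.level -= 1
            self.recent.clear()
        return self.level


controller = DifficultyController()
for outcome in [True, True, True, True, True]:
    next_level = controller.record(outcome)
print(next_level)  # 4: five straight successes push the level up by one
```

Clearing the window after each change is a deliberate choice here: it stops a single streak from moving the learner several levels before there is any evidence at the new level.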

2. Personalized Learning Paths

Personalization is valuable because trainees do not start at the same baseline and do not fail for the same reasons. AI can use repeated performance data to route learners toward extra repetitions, different scenarios, or more advanced modules without forcing everyone through the same sequence.

The 2025 Frontiers review and meta-analysis of VR in teacher education reported a positive moderate overall effect and highlighted VR's potential for accessible, adaptive professional development, while the 2025 JMIR scoping review of AI in surgical training found repeated use of personalized feedback and adaptive learning trajectories in simulation-heavy setups. Inference: the strongest learning paths are competency-driven, not preference-driven.
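A competency-driven path can be sketched as a prerequisite map plus a mastery threshold: the learner is routed to the first module that is not yet mastered and whose prerequisites are. The module names, the 0.8 threshold, and the `next_module` helper below are hypothetical and only illustrate the routing idea.

```python
def next_module(mastery, prerequisites, threshold=0.8):
    """Return the first unmastered module whose prerequisites are all mastered."""
    for module, prereqs in prerequisites.items():
        if mastery.get(module, 0.0) >= threshold:
            continue  # already mastered, skip ahead
        if all(mastery.get(p, 0.0) >= threshold for p in prereqs):
            return module
    return None  # nothing left to assign


# Hypothetical curriculum, listed in teaching order.
prerequisites = {
    "airway_check": [],
    "cpr_basics": ["airway_check"],
    "team_handoff": ["cpr_basics"],
}
mastery = {"airway_check": 0.9, "cpr_basics": 0.55}

print(next_module(mastery, prerequisites))  # cpr_basics
```

Routing depends only on measured mastery, so two learners with the same preferences but different scores end up on different modules.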

3. Contextualized Feedback in Real Time

Real-time feedback becomes useful when it is tied to the exact action, phase, or mistake the learner is making. In immersive simulations, that means feedback can arrive in the moment of the error rather than only in a post-hoc debrief after the trainee has already rehearsed the wrong behavior.

The 2025 npj Artificial Intelligence crossover trial found that real-time AI-mediated instruction improved performance in simulated surgical skills training, especially after foundational human instruction, and the 2025 JMIR VR emergencies trial showed automated feedback can support self-moderated learning in immersive sessions. Inference: in-scenario feedback is most effective when it clarifies what to change now, not when it floods the learner with commentary.
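To avoid flooding the learner, an in-scenario feedback layer can surface only the most severe pending issue and enforce a minimum gap between prompts. This is a minimal sketch under assumed names (`FeedbackGate`, a five-second gap, numeric severities); none of these come from the cited trials.

```python
class FeedbackGate:
    """Lets through one message at a time, spaced by a minimum gap (assumed 5 s)."""

    def __init__(self, min_gap_s=5.0):
        self.min_gap_s = min_gap_s
        self.last_sent_at = None

    def select(self, now_s, candidates):
        """candidates: list of (severity, message). Returns a message or None."""
        if not candidates:
            return None
        recently_sent = (self.last_sent_at is not None
                         and now_s - self.last_sent_at < self.min_gap_s)
        if recently_sent:
            return None  # stay quiet so the learner can act on the last prompt
        _, message = max(candidates, key=lambda item: item[0])
        self.last_sent_at = now_s
        return message


gate = FeedbackGate()
print(gate.select(0.0, [(1, "Relax your grip"), (3, "Wrong instrument angle")]))
print(gate.select(2.0, [(5, "Check patient airway")]))  # None: too soon
```

A production system would probably let safety-critical messages bypass the gap. That rule is left out here to keep the sketch small.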

4. Natural Language Understanding and Interaction

Natural language makes immersive training more flexible because learners can ask questions, request clarification, talk through a scenario, or interact with AI role-players using speech instead of only menus and button prompts. But useful dialogue depends on system state, context, and bounded intent, not just on a generic language model.

The 2025 ACL paper on conversational tutoring in VR training showed that adding game context and state variables improved tutor answer quality, and NVIDIA's 2025 Tokkio documentation describes digital humans built from speech, translation, vision, intelligence, animation, and behavior components. Inference: speech interfaces in training become credible when they are grounded in the live scenario rather than operating as free-floating chat.
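Grounding dialogue in the live scenario can be as simple as putting the current state and the allowed topics into the request before any language model sees the question. The helper below only assembles that text. The function name, field names, and wording are assumptions for illustration and are not the prompt format used in the ACL paper.

```python
def build_tutor_prompt(question, state, allowed_topics):
    """Compose a prompt that ties the learner's question to live scenario state."""
    state_lines = [f"- {key}: {value}" for key, value in sorted(state.items())]
    topic_list = ", ".join(allowed_topics)
    return "\n".join([
        "You are a tutor inside a training scenario.",
        f"Only discuss these topics: {topic_list}.",
        "Current scenario state:",
        *state_lines,
        f"Learner question: {question}",
    ])


# Hypothetical scenario state for illustration.
prompt = build_tutor_prompt(
    "What should I check next?",
    {"phase": "pre-start inspection", "completed_steps": 3,
     "open_errors": "valve left open"},
    ["inspection checklist", "valve handling"],
)
print(prompt)
```

The resulting text would be passed to whatever model the platform uses; that call is omitted because it depends on the deployment.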

5. Predictive Performance Analytics

Predictive analytics in immersive training are most useful when they tell instructors and learners what should happen next. The goal is not only to score the learner after a session, but to forecast where performance is drifting, which subskill is holding progress back, and which practice sequence is likely to pay off.

The 2025 JMIR scoping review found that simulation platforms and box trainers dominate AI-enabled training partly because synchronized video, kinematics, and performance metrics make automated assessment and learning-trajectory modeling feasible. A separate 2025 medical-education paper on AI micro-assessments in simulation-based ultrasound training likewise argues for machine-learning support during practice instead of only end-point testing. Inference: predictive analytics are strongest when they drive targeted remediation and progression decisions inside the simulator.
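A very small version of "forecast where performance is drifting" is to fit a least-squares slope to each subskill's recent scores and flag the one trending down fastest. The subskill names and scores below are invented for illustration, and a real system would use far richer models than a straight line.

```python
def slope(scores):
    """Least-squares slope of scores against session index (0, 1, 2, ...)."""
    n = len(scores)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(scores))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def weakest_trend(history):
    """Return the subskill whose scores are falling fastest."""
    return min(history, key=lambda name: slope(history[name]))


# Hypothetical per-session scores for two subskills.
history = {
    "needle_angle": [0.6, 0.7, 0.8],
    "probe_pressure": [0.8, 0.7, 0.6],
}
print(weakest_trend(history))  # probe_pressure
```

The output is a remediation target rather than a grade, which matches the point that analytics should say what to practice next.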

6. Scenario and Environment Randomization

Scenario randomization matters because people can look competent inside a fixed script while failing when conditions change. AI can vary timing, terrain, distractors, weather, adversary behavior, patient state, equipment issues, or task order so learners build adaptable skill instead of memorizing one path through the exercise.

The Army's 2024 Synthetic Training Environment testing and DARPA's 2024 ACE flight-demonstration milestone both point in the same direction: realistic rehearsal gets stronger when the system can expose trainees to wide variation while still tracking objective performance. Inference: randomization is valuable because it surfaces brittle habits that a single scripted scenario tends to hide.
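Randomization stays evaluable when each variation is drawn from a seeded generator, so a run that exposed a brittle habit can be replayed exactly. The option names and values below are hypothetical. The sketch uses only Python's standard `random.Random`.

```python
import random

# Hypothetical scenario options for illustration.
OPTIONS = {
    "weather": ["clear", "rain", "fog"],
    "equipment_fault": [None, "radio_failure", "low_battery"],
    "distractor_count": [0, 1, 3],
}


def sample_scenario(seed, options):
    """Draw one value per option; the same seed always yields the same scenario."""
    rng = random.Random(seed)
    return {name: rng.choice(values) for name, values in options.items()}


first = sample_scenario(7, OPTIONS)
replay = sample_scenario(7, OPTIONS)
print(first == replay)  # True: logging the seed is enough to reproduce the run
```

Storing the seed alongside the performance record lets an instructor rerun the same conditions during a debrief.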

7. Enhanced Virtual Role-Players and NPC Behavior

AI role-players become useful when they behave like credible participants in the exercise rather than like generic chatbots. In customer-service, leadership, safety, and clinical simulations, that means the virtual character remembers the scenario state, reacts to the learner's choices, and stays within the emotional and procedural boundaries of the training design.

The 2025 ACL study on conversational tutoring in VR found better answer quality when the system used game context and state variables, and the 2026 IWSDS program highlighted VR training tools that combine structured dialogue management with LLM-supported open conversation for sensitive role-play. Inference: strong virtual role-players need state tracking, guardrails, and pedagogical intent, not just fluent language generation.
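State tracking and guardrails can be illustrated with a role-player whose mood is a bounded number updated by recognized learner intents, with anything off-script redirected instead of improvised. The intents, mood labels, and `VirtualCustomer` class are assumptions made for this sketch. A real system would sit between a dialogue manager and a language model.

```python
class VirtualCustomer:
    """Role-player whose mood is tracked as bounded state, not free text."""

    # Hypothetical intent labels and mood effects, for illustration only.
    EFFECTS = {"apologize": -1, "offer_solution": -2, "interrupt": 1, "dismiss": 2}
    MOODS = ["calm", "calm", "tense", "tense", "escalated"]

    def __init__(self, frustration=2):
        self.frustration = frustration
        self.history = []  # remembered learner intents

    def react(self, intent):
        """Update mood from a recognized intent; redirect anything off-script."""
        if intent not in self.EFFECTS:
            return "redirect"  # guardrail: stay inside the designed scenario
        self.history.append(intent)
        raw = self.frustration + self.EFFECTS[intent]
        self.frustration = max(0, min(raw, len(self.MOODS) - 1))
        return self.MOODS[self.frustration]


customer = VirtualCustomer()
print(customer.react("interrupt"))       # tense
print(customer.react("dismiss"))         # escalated
print(customer.react("tell_a_joke"))     # redirect
print(customer.react("offer_solution"))  # tense
```

Because the mood is clamped to a fixed range, the character cannot drift outside the emotional boundaries the scenario designer set.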

8. Continuous Skill Assessment

Continuous assessment is one of the clearest strengths of immersive AI systems because it lets instructors see technique unfold across the whole task rather than relying only on the final outcome. That makes it easier to catch hesitation, unsafe sequencing, awkward tool handling, or degraded decision quality before those patterns become ingrained.

The 2025 JMIR surgical-training review and the 2025 Medical Teacher paper on temporal AI micro-assessments both describe the value of fine-grained, time-aware evaluation in simulated settings. Inference: continuous assessment is more useful than simple pass-fail grading because it gives both the learner and the instructor a map of what to fix next.
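As a toy example of time-aware evaluation, hesitation can be flagged by scanning a timestamped action log for long gaps between consecutive actions. The three-second threshold and action names are assumptions. The temporal models in the cited papers are considerably more sophisticated.

```python
def find_hesitations(events, max_gap_s=3.0):
    """events: list of (timestamp_s, action) sorted by time.

    Returns (previous_action, next_action, gap_s) for every gap over the limit.
    """
    flagged = []
    for (t0, a0), (t1, a1) in zip(events, events[1:]):
        gap = t1 - t0
        if gap > max_gap_s:
            flagged.append((a0, a1, gap))
    return flagged


# Hypothetical action log from one attempt.
events = [(0.0, "grasp"), (1.5, "position"), (6.0, "cut")]
print(find_hesitations(events))  # [('position', 'cut', 4.5)]
```

A final pass-fail score would hide this pause. The per-transition view shows where in the task the learner slowed down.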

9. Expert Knowledge Encoding

Immersive systems become far more credible when expert knowledge is encoded into the simulator itself. That can take the form of task models, checklists, acceptable parameter ranges, ideal trajectories, escalation rules, or debrief logic built from domain experts rather than from generic AI outputs.

The 2025 npj Artificial Intelligence crossover trial on simulated surgical skills and the EASA and FAA qualification milestones for VR flight simulators both reinforce the same point: immersive training only scales when validated expert standards are built into the device, task model, and scoring logic. Inference: encoding expertise is what separates a serious simulator from a visually impressive demo.
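Two of the forms mentioned above, acceptable parameter ranges and checklists, can be written down directly as data the simulator checks against. Everything in this sketch (the parameter names, limits, and step order) is hypothetical and stands in for values that domain experts would supply and validate.

```python
# Hypothetical expert-defined limits and step order, for illustration only.
EXPERT_RANGES = {"tool_angle_deg": (30.0, 45.0), "applied_force_n": (1.0, 3.0)}
REQUIRED_ORDER = ["verify_identity", "sterilize", "incise"]


def out_of_range(sample, ranges):
    """Return the measurements that fall outside their expert-approved range."""
    return {
        name: value
        for name, value in sample.items()
        if name in ranges and not (ranges[name][0] <= value <= ranges[name][1])
    }


def followed_order(performed, required):
    """True if the required steps appear in order (extra steps are allowed)."""
    remaining = iter(performed)
    return all(step in remaining for step in required)


print(out_of_range({"tool_angle_deg": 50.0, "applied_force_n": 2.0}, EXPERT_RANGES))
print(followed_order(["sterilize", "verify_identity", "incise"], REQUIRED_ORDER))
```

Keeping the standards as plain data makes them reviewable by the experts who own them, separately from the scoring code.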

10. Emotion and Stress Level Detection

Stress and emotion signals can improve training when they are used to guide pacing, support debriefing, or detect overload, but they should not be treated as a simple proxy for competence. Learners can be calm and still wrong, or stressed and still effective. The value lies in combining physiological or behavioral cues with task performance and mental workload context.

The 2025 JMIR randomized trial on medical-emergency VR training explicitly measured stress alongside learning outcomes, and the 2025 Frontiers study in firefighter-style VR environments used pupillometry, workload, and performance measures to examine how demanding conditions shape learning. Inference: emotion and stress detection are most useful as diagnostic context for coaching, not as standalone grading tools.
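The point that stress is context rather than a grade can be shown by reading stress and task performance together instead of scoring either alone. The cut-offs, labels, and normalized 0 to 1 inputs below are assumptions for illustration, not thresholds from the cited studies.

```python
def coaching_context(stress, performance, stress_cut=0.6, perf_cut=0.7):
    """Combine normalized stress and performance (both 0..1) into a coaching label."""
    high_stress = stress >= stress_cut
    performing_well = performance >= perf_cut
    if performing_well and not high_stress:
        return "ready_to_progress"
    if performing_well and high_stress:
        return "effective_but_strained"
    if high_stress:
        return "overloaded"
    return "calm_but_incorrect"


print(coaching_context(stress=0.2, performance=0.4))  # calm_but_incorrect
print(coaching_context(stress=0.8, performance=0.9))  # effective_but_strained
```

The two printed cases are the ones a stress-only score would misjudge: a calm learner who is wrong and a stressed learner who is still effective.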

11. Contextual Cue Generation

Contextual cues are powerful because they can steer attention without taking the task away from the learner. AI can highlight a missed hazard, emphasize an instrument trend, replay a motion segment, or surface a just-in-time prompt based on what phase the learner is in and what has already gone wrong.

The ACL 2025 VR tutoring paper showed the importance of game state for generating relevant answers, and the 2025 Frontiers review of immersive industrial safety training highlighted the growing role of real-time behavioral and biometric analytics in performance-based evaluation. Inference: contextual cueing works best when it is phase-aware, subtle, and aligned with the exact skill being rehearsed.

12. Adaptive Narrative Building

Adaptive narrative matters when the training scenario needs consequences, not just branching dialogue. AI can vary how the scenario unfolds based on the learner's actions, producing better rehearsal of escalation, recovery, communication, and judgment under changing conditions.

The 2026 IWSDS experiential-learning demonstrations used scenario-based VR role-play to let users observe, analyze, and then re-enter a social situation from another perspective, while the 2025 industrial-safety review identified adaptive, AI-driven environments as a growing direction for higher-fidelity evaluation. Inference: adaptive narrative is most useful when it changes consequences and perspective rather than merely decorating the scene with extra text.

13. Realistic AI-Driven Adversaries

In adversarial or competitive settings, fixed opponents quickly become predictable. AI-driven adversaries can create more realistic practice by changing tactics, exploiting learner weaknesses, and forcing the trainee to adapt under pressure instead of following a rehearsed solution.

DARPA's 2024 ACE milestone is the clearest official example because it demonstrated AI-controlled F-16 combat maneuvers against a human-piloted aircraft in within-visual-range scenarios. Inference: adversarial AI in training is valuable not because it is flashy, but because it can continuously surface decision gaps that would otherwise remain hidden in repetitive drills.

14. Scalable Group Training Simulations

Some of the highest-value immersive training is collective rather than individual. AI helps at group scale by synchronizing entities, tracking multiple learners, managing after-action review data, and coordinating complex scenarios that would otherwise demand far more equipment and staff.

Army reporting on the Synthetic Training Environment and the 2025 Synthetic Dragon exercise both emphasize interoperable, networked collective rehearsal rather than isolated single-user simulators. Inference: one of the most operationally credible uses of AI in immersive training is helping many participants rehearse together without losing evaluability.

15. Cultural and Language Adaptations

Language adaptation expands access to training, especially in distributed workforces and multinational environments. AI can support translation, multilingual speech interfaces, and locale-specific phrasing, but strong cultural adaptation still requires domain reviewers who understand what behavior, tone, and scenario assumptions should change for a local audience.

Azure AI Speech now supports real-time multilingual speech translation, and Google Cloud documentation supports multi-language speech recognition workflows for mixed-language input. Inference: these systems materially lower the barrier to multilingual immersive training, but they do not remove the need for local validation of scripts, cues, and cultural expectations.

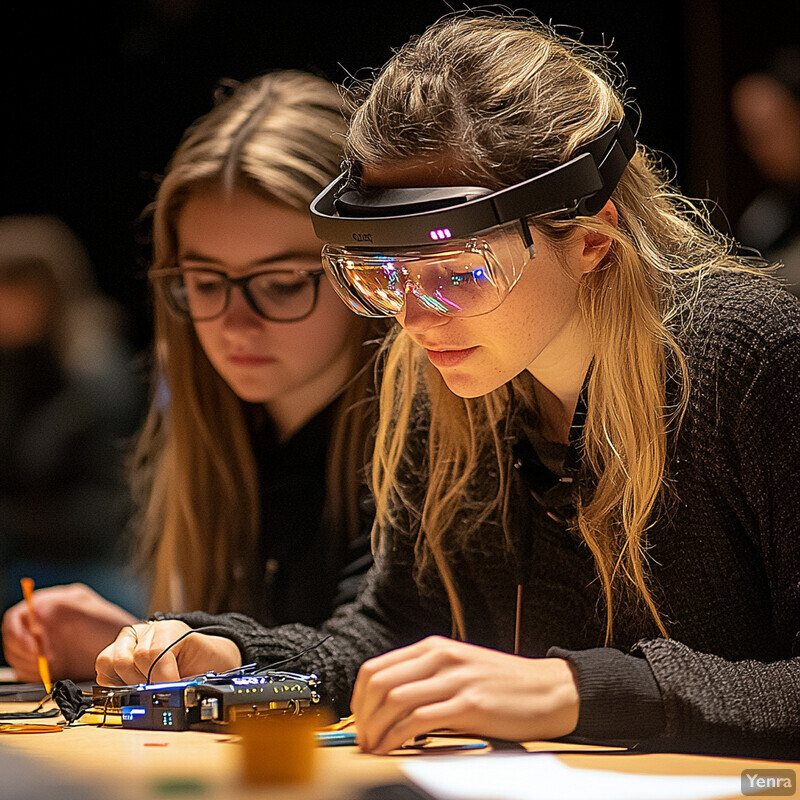

16. Integration with Wearable Tech and Sensors

Wearables strengthen immersive training when they add signal quality the base headset does not provide. That can include motion detail, physiological data, haptic cues, or specialized instrument tracking that supports better coaching, safer practice, or richer interaction with the simulated environment.

The 2025 Discover Sustainability study on WebVR plus wearable-sensor integration found stronger engagement and learning outcomes than video-based instruction, and a 2025 Nature Communications paper demonstrated deep-learning-driven wearable full-body motion tracking with bidirectional haptic feedback for immersive interaction. Inference: sensor integration matters when it adds meaningful control, feedback, or measurement rather than just more gadgets.

17. Learning from Trainee Behaviors

One of AI's biggest training advantages is that it can learn from large volumes of trainee behavior over time. That makes it possible to identify common failure modes, inefficient motion patterns, weak transitions between subskills, or misleading cues in the training design itself.

The surgical-training scoping review and the temporal micro-assessment paper both reflect a field moving toward learning from sequences of trainee actions rather than isolated snapshots. Inference: behavioral mining is strongest when it produces interpretable remediation targets and better scenario design, not merely another opaque score.
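A simple, interpretable form of behavioral mining is to count how many sessions each error appears in and report the ones that recur across a large share of trainees. The error labels and the 50 percent cut-off are hypothetical, and the approach is a plain frequency count rather than the sequence models discussed in the cited papers.

```python
from collections import Counter


def common_failures(sessions, min_share=0.5):
    """Return (error, session_count) pairs seen in at least min_share of sessions."""
    counts = Counter()
    for errors in sessions:
        counts.update(set(errors))  # count each error once per session
    cutoff = min_share * len(sessions)
    return [(label, n) for label, n in counts.most_common() if n >= cutoff]


# Hypothetical error logs, one list per trainee session.
sessions = [
    ["late_callout", "wrong_grip"],
    ["late_callout"],
    ["late_callout", "skipped_check"],
    ["wrong_grip"],
]
print(common_failures(sessions))  # [('late_callout', 3), ('wrong_grip', 2)]
```

An error that shows up in most sessions may point to a misleading cue in the scenario itself rather than to individual learners, which is why the output names the error instead of scoring the trainee.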

18. Resource Optimization

Resource optimization sounds operational rather than educational, but it has direct training consequences. AI helps decide how to render complex scenes efficiently, manage shared devices, reduce instructor burden, and deliver high-fidelity practice to more learners without needing a bespoke hardware stack for every use case.

Apple's June 9, 2025 visionOS 26 announcement added new enterprise and shared-device capabilities for spatial workflows, while NVIDIA continues positioning Omniverse and RTX rendering as infrastructure for high-fidelity real-time simulation. Inference: better rendering and device management are not cosmetic improvements. They are what make immersive training practical for repeated use at organizational scale.

19. Intelligent Tutoring Systems

AI tutors are most valuable when they do what good instructors do between and during repetitions: notice the pattern, explain the mistake, adjust the next step, and keep the learner moving. In immersive settings, that tutor can live inside the scenario, but it still needs bounded authority, clear instructional logic, and instructor visibility.

The 2025 systematic review of intelligent and robot tutoring systems and the ACL 2025 VR tutoring study both point to the same practical conclusion: good tutoring depends on feedback logic, context, and progression control more than on conversational fluency alone. Inference: the best immersive tutors are closer to stateful coaches than to unconstrained copilots.

20. Multimodal Interaction and Feedback

Immersive skill training is strongest when vision, audio, touch, motion, and language reinforce the same lesson. AI helps fuse those channels so the learner can speak, move, touch, and perceive consequences in ways that feel coherent instead of fragmented across separate subsystems.

The 2025 Nature Communications paper on wearable motion-and-haptics networks and the 2025 Scientific Reports work on haptic guidance for motor accuracy both show how AI-supported feedback loops can improve real-time practice by combining sensing with tactile instruction. Inference: multimodal feedback is most effective when it reduces ambiguity about what to do next without overwhelming the learner.

Sources and 2026 References

- Smart Learning Environments: A systematic review of intelligent and robot tutoring systems is the main tutoring-systems synthesis anchor.

- JMIR: Knowledge Gain and the Impact of Stress in a Fully Immersive Virtual Reality-Based Medical Emergencies Training With Automated Feedback grounds automated feedback, retention, and stress framing.

- JMIR: Transforming Surgical Training With AI Techniques for Training, Assessment, and Evaluation is the core review source for simulation-heavy assessment and learning analytics.

- Frontiers: Using virtual reality for teacher education: a systematic review and meta-analysis supports adaptive and personalized learning claims.

- npj Artificial Intelligence: Combining real-time AI and in-person expert instruction in simulated surgical skills training grounds real-time instructional support and expert-knowledge encoding.

- ACL Anthology: Conversational Tutoring in VR Training: The Role of Game Context and State Variables is the main source for grounded in-scenario dialogue.

- ACL Anthology: IWSDS 2026 supports current VR role-play and experiential-learning dialogue demonstrations.

- Medical Teacher via PubMed: Enabling micro-assessments of skills in the simulated setting using temporal artificial intelligence-models grounds continuous assessment and behavioral micro-feedback.

- U.S. Army: Soldiers test new synthetic training environment supports large-scale and randomized collective rehearsal.

- DVIDS: Next Generation Constructive Team showcases advanced capabilities at Synthetic Dragon 2025 grounds interoperable collective simulation infrastructure.

- DARPA: ACE Program Achieves World First for AI in Aerospace is the clearest official adversarial-simulation anchor.

- EASA: EASA approves the first VR-based flight simulation training device grounds regulatory acceptance of serious immersive training.

- Loft Dynamics: Loft Dynamics becomes world's first VR flight simulation training device to receive FAA qualification adds the U.S. qualification milestone.

- Frontiers: Pupillometry and workload analysis in VR-based firefighter training grounds stress and workload interpretation.

- Frontiers: Immersive technologies for evaluating industrial safety training in high-risk environments is the main evaluation-framework review for biometric and behavioral assessment.

- Discover Sustainability: Enhancing engineering student engagement and learning outcomes through WebVR and wearable sensor integration with immersive learning supports wearable-enhanced immersive instruction.

- Nature Communications: Wearable interactive full-body motion tracking and haptic feedback network systems with deep learning grounds real-time multimodal sensing and haptics.

- Scientific Reports: Real-time motion force-feedback system with predictive-vision for improving motor accuracy supports AI-guided haptic feedback.

- Apple: visionOS 26 introduces powerful new spatial experiences for Apple Vision Pro grounds current spatial-computing device management and training-environment access.

- Microsoft Learn: Speech translation overview supports multilingual speech-translation claims.

- Google Cloud: Multiple language recognition supports mixed-language speech-input handling.

- NVIDIA: Omniverse and RTX Renderer overviews ground current high-fidelity simulation infrastructure.

Related Yenra Articles

- Virtual Reality Training covers the broader enterprise and operational context for immersive learning systems.

- Workload Detection in Human Factors Engineering adds the human-performance and workload-measurement layer that often feeds adaptive training.

- Designing Interactive Experiences extends the orchestration, adaptive-interface, and multimodal design side of immersive systems.

- Clinical Decision Support Systems is useful where immersive medical training overlaps with evidence-driven coaching and bounded AI assistance.