Image recognition in 2026 is no longer just a question of whether a model can say what is in a picture. The category now spans image classification, object detection, tracking, segmentation, visual search, face verification, and multimodal systems that can answer questions about an image instead of only assigning labels.

That makes the field broader than the older benchmark story. Strong image-recognition systems now sit inside a larger computer vision stack that includes fast inference, region-level understanding, embeddings, retrieval, human review, and application-specific controls. The most useful products are not the ones that merely score well on a lab dataset. They are the ones that stay reliable across messy lighting, motion, scale, partial occlusion, and real operational workflows.

This update reflects the category as of March 15, 2026. It focuses on the parts of modern image recognition that are clearly shaping products and deployments now: mature classification, real-time detection, multimodal scene understanding, face verification, domain-specific pattern recognition, tracking, region-level interpretation, enhancement for degraded inputs, automated tagging, and assisted perception. Inference: the center of gravity has shifted from "Can AI recognize images at all?" to "How well can it do so under real constraints, at useful speed, and inside a workflow people trust?"

1. Mature Accuracy, Wider Deployment

Raw recognition accuracy is no longer the whole story. Many core image-classification tasks are mature enough that the biggest practical differences now come from robustness, calibration, latency, and deployment fit. That is why current progress shows up less as dramatic benchmark leaps and more as broader operational use across products and industries.

Stanford HAI's AI Index 2025 technical-performance chapter reflects a broader frontier pattern: classic vision benchmarks are no longer where the most meaningful differentiation happens, while commercial tools such as Google Cloud Vision make high-quality label detection broadly accessible. Inference: image classification is mature enough that practical value now depends more on deployment quality than on another tiny benchmark win.
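Calibration is one of the deployment-quality factors named above, and it can be measured directly. The following is a minimal sketch of expected calibration error (ECE), a common metric that compares how confident a classifier says it is with how often it is actually right. The function name, the equal-width binning, and the default of 10 bins are illustrative assumptions, not something taken from the sources cited here.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy per bin.

    confidences: predicted top-class probabilities in [0, 1]
    correct:     booleans, True where the prediction was right
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")

    # Group predictions into equal-width confidence bins.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        if not 0.0 <= conf <= 1.0:
            raise ValueError("confidence values must lie in [0, 1]")
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        # Each bin contributes in proportion to how many predictions it holds.
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece
```

A model that reports 90% confidence but is right only 75% of the time in that bin would score an ECE of about 0.15 on those predictions. Two models with the same accuracy can differ sharply on this number, which is one reason benchmark accuracy alone does not settle deployment fit.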

2. Real-Time Object Detection

Real-time recognition has become an engineering expectation in many settings, not just a research milestone. Cameras on vehicles, robots, phones, retail systems, and industrial lines increasingly need models that can locate and classify objects quickly enough to support live decisions. That is why object detection has become one of the most operationally important parts of image recognition.

YOLOv10 was designed as a real-time end-to-end detector that reduces post-processing overhead, while Google Cloud's object-localizer documentation shows how location-aware recognition has become a normal product capability rather than a specialist demo. Inference: by 2026, fast detection is increasingly a baseline requirement for production vision systems.
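In many detectors, the post-processing step in question is non-maximum suppression (NMS), which removes duplicate boxes around the same object after the network has run. The sketch below shows a generic greedy NMS so the cost being avoided is concrete. It is not YOLOv10's code; the box format `(x1, y1, x2, y2)`, the function names, and the 0.5 threshold are assumptions chosen for illustration.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, iou_threshold=0.5):
    """Return indices of boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not heavily overlap a box already kept.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60)]
scores = [0.9, 0.8, 0.7]
kept = greedy_nms(boxes, scores)  # the second box overlaps the first and is dropped
```

Because every candidate is compared against every kept box, this step adds latency that grows with the number of raw detections, which is why end-to-end designs that skip it are attractive for live systems.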

3. Contextual Multimodal Understanding

Image recognition is increasingly paired with language reasoning. Modern systems do not only identify objects. They summarize scenes, answer questions about what is happening, compare images against text prompts, and help people search visually without needing the perfect keywords. That makes recognition feel more conversational and more useful to non-specialists.

Google's AI Mode multimodal search experience and its Gemini 3 Search rollout both point to the same change: image understanding is being fused with broader reasoning and retrieval rather than treated as a separate silo. Inference: one of the clearest 2026 advances is that image recognition increasingly serves as the visual front end to a larger multimodal assistant.

4. Facial Recognition and Verification

Facial recognition remains one of the strongest but most sensitive branches of image recognition. On the technical side, modern systems can produce highly stable face embeddings for verification and matching under many conditions. On the operational side, the category still demands careful controls because context, image quality, and use-case boundaries matter enormously.

NIST's ongoing FRTE 1:1 evaluation shows that leading face-verification systems can achieve extremely low error rates under controlled testing, but it also reinforces a durable lesson: performance changes with demographics, capture conditions, and thresholds. Inference: face recognition in 2026 is technically mature, yet still highly dependent on disciplined deployment.
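The dependence on thresholds can be made concrete. In 1:1 verification, two face embeddings are compared and the pair is accepted if the similarity score clears a threshold. The sketch below assumes cosine similarity as the score and uses made-up score lists; it shows how false match rate (FMR) and false non-match rate (FNMR) trade off as the threshold moves. The function names and sample values are hypothetical and do not come from NIST data.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_u * norm_v)


def error_rates(genuine_scores, impostor_scores, threshold):
    """Return (FMR, FNMR) for a given acceptance threshold.

    FMR:  share of different-person pairs scoring at or above the threshold.
    FNMR: share of same-person pairs scoring below the threshold.
    """
    fmr = sum(1 for s in impostor_scores if s >= threshold) / len(impostor_scores)
    fnmr = sum(1 for s in genuine_scores if s < threshold) / len(genuine_scores)
    return fmr, fnmr


# Illustrative scores only: same-person pairs and different-person pairs.
genuine = [0.9, 0.8, 0.6, 0.4]
impostor = [0.1, 0.3, 0.5, 0.7]

loose = error_rates(genuine, impostor, threshold=0.5)    # more false matches
strict = error_rates(genuine, impostor, threshold=0.75)  # more false rejections
```

Raising the threshold lowers false matches at the cost of rejecting more genuine users, so a single headline error rate says little until the operating point and capture conditions are specified.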

5. Specialized Pattern Recognition

Some of the most important image-recognition gains now come from specialist domains rather than generic photo apps. Medicine, science, manufacturing, and other expert settings care less about naming everyday objects and more about identifying subtle patterns that carry high cost if missed. In those settings, the value of recognition often comes from sensitivity, consistency, and prioritization.

Google Research's multimodal medical AI work and the MedGemma developer foundations both show how frontier vision systems are being adapted to expert image interpretation tasks rather than only consumer photo recognition. Inference: the most meaningful image-recognition deployments increasingly happen where visual patterns are subtle, high-volume, and worth expert attention.

6. Tracking and Video Understanding

Image recognition is increasingly temporal, not just frame-by-frame. Many valuable systems need to follow objects, people, tools, or regions across time while keeping identity and context intact. That is why tracking, video segmentation, and short-horizon reasoning are becoming more central to practical vision stacks.

Segment Anything 2 extends promptable segmentation into video, and Google's October 2024 Lens updates showed a consumer-facing version of the same trajectory by letting users ask questions about video. Inference: one of the clearest current shifts is that recognition systems are increasingly expected to preserve context across time, not just identify what is visible in a single frame.
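To illustrate what keeping identity across frames involves at its simplest, here is a minimal sketch of tracking by box overlap: each new detection inherits the ID of the previous-frame box it overlaps most. This is a deliberately simplified stand-in, not how Segment Anything 2 or Lens works. The `IouTracker` class, its `min_iou` parameter, and the choice to drop unmatched tracks immediately are all illustrative assumptions; production trackers typically add motion models, appearance embeddings, and tolerance for missed frames.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


class IouTracker:
    """Greedy frame-to-frame association by bounding-box overlap."""

    def __init__(self, min_iou=0.3):
        self.min_iou = min_iou
        self.tracks = {}   # track id -> box from the previous frame
        self.next_id = 0

    def update(self, detections):
        """Assign a track id to each detection in the current frame."""
        # Score every (track, detection) pair, best overlap first.
        pairs = sorted(
            ((iou(box, det), tid, di)
             for tid, box in self.tracks.items()
             for di, det in enumerate(detections)),
            key=lambda p: p[0],
            reverse=True,
        )
        assigned, used_tracks = {}, set()
        for score, tid, di in pairs:
            if score < self.min_iou:
                break  # remaining pairs overlap too little to match
            if tid in used_tracks or di in assigned:
                continue
            assigned[di] = tid
            used_tracks.add(tid)

        ids, new_tracks = [], {}
        for di, det in enumerate(detections):
            tid = assigned.get(di)
            if tid is None:  # no match: start a new track
                tid = self.next_id
                self.next_id += 1
            new_tracks[tid] = det
            ids.append(tid)
        self.tracks = new_tracks  # tracks with no match this frame are dropped
        return ids


tracker = IouTracker()
frame1_ids = tracker.update([(0, 0, 10, 10), (100, 100, 110, 110)])
frame2_ids = tracker.update([(101, 101, 111, 111), (1, 1, 11, 11)])
frame3_ids = tracker.update([(200, 200, 210, 210)])
```

In the second frame the detections arrive in a different order, yet each keeps the ID it was given in the first frame. The third frame contains an object far from anything seen before, so it receives a fresh ID.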

7. Region-Level Understanding

Recognition is moving from whole-image labels toward region-level understanding. In practice, people increasingly need to know where something is, which part of the scene matters, and what boundaries define the object or area of interest. That is why classification, detection, and segmentation are converging in many modern tools.

Google Cloud Vision's label detection and object localization features, together with Segment Anything 2, show how mainstream systems now mix image-wide recognition with location-aware and mask-aware interpretation. Inference: by 2026, strong image recognition is increasingly about spatial precision, not just category prediction.
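The difference between location-aware and mask-aware output can be shown with a toy example. The sketch below takes a binary mask (a grid of 0s and 1s, an assumed representation), derives the tight bounding box around it, and reports how much of that box the object actually fills. The function names are hypothetical and the code is not tied to Cloud Vision or Segment Anything 2.

```python
def mask_to_box(mask):
    """Tight box (x1, y1, x2, y2) around the nonzero pixels, or None if empty.

    mask is a list of rows; x2 and y2 are exclusive.
    """
    rows = [y for y, row in enumerate(mask) if any(row)]
    if not rows:
        return None
    cols = [x for row in mask for x, value in enumerate(row) if value]
    return (min(cols), rows[0], max(cols) + 1, rows[-1] + 1)


def mask_area(mask):
    """Number of pixels that belong to the object."""
    return sum(1 for row in mask for value in row if value)


def box_fill_ratio(mask):
    """Fraction of the bounding box that the mask actually covers."""
    box = mask_to_box(mask)
    if box is None:
        return 0.0
    x1, y1, x2, y2 = box
    return mask_area(mask) / ((x2 - x1) * (y2 - y1))


# An L-shaped object: the box covers 4 pixels, the object only 3.
mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]
box = mask_to_box(mask)
ratio = box_fill_ratio(mask)
```

A low fill ratio means the box includes a lot of background, which is where a mask gives a measurably more precise answer than a box for tasks such as measurement, cut-outs, or inspection.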

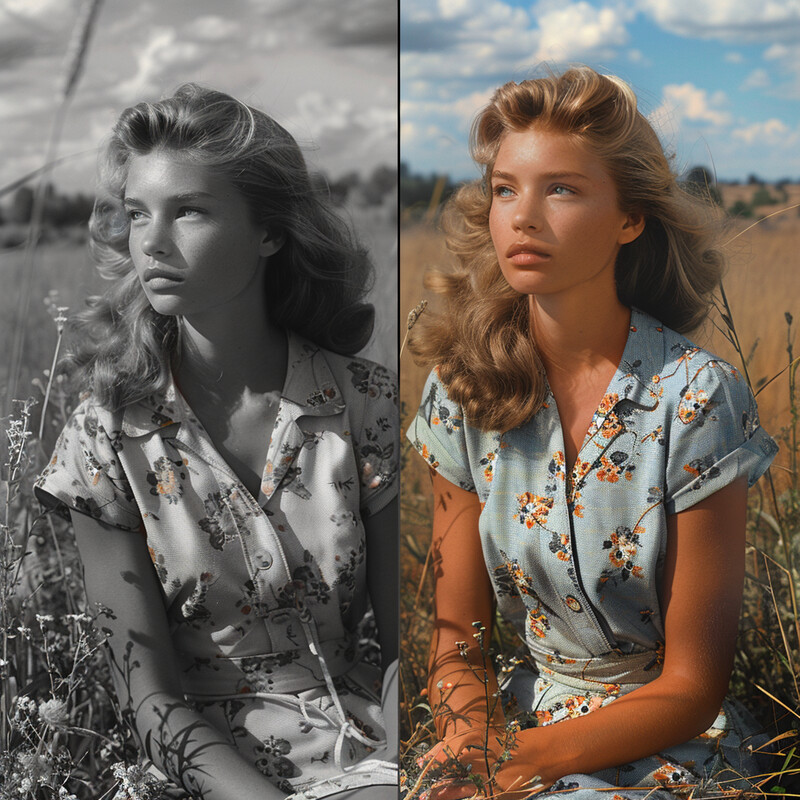

8. Enhancement for Degraded Inputs

Recognition quality still depends heavily on image quality. Low light, blur, noise, damage, compression artifacts, and incomplete content can all make a strong model look weak. That is why enhancement and restoration increasingly act as companion steps around recognition, especially in archives, consumer photo repair, and messy operational data.

Adobe's January 27, 2026 Photoshop update emphasizes more control, realism, and precision in AI-assisted image editing and repair workflows. Inference: one practical 2026 change is that restoration is increasingly intertwined with recognition, because people expect systems to work on damaged, incomplete, or low-quality images instead of only pristine inputs.
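One way a pipeline can decide when an image needs enhancement before recognition is a simple sharpness check. The sketch below uses the variance of a Laplacian filter response, a widely used blur heuristic: sharp images have strong local intensity changes and score high, while blurred or flat images score low. This is an illustrative assumption about how such a gate could look, not a description of Adobe's tooling; the function name is hypothetical, and the input is assumed to be a grayscale image stored as a list of rows.

```python
def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over interior pixels.

    gray: list of rows of grayscale intensities.
    Returns 0.0 when the image is too small to have interior pixels.
    """
    height = len(gray)
    width = len(gray[0]) if height else 0
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Discrete Laplacian: sum of the four neighbours minus 4x the centre.
            responses.append(
                gray[y - 1][x] + gray[y + 1][x]
                + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
    if not responses:
        return 0.0
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


# A featureless image versus one with strong pixel-level detail.
flat = [[100] * 5 for _ in range(5)]
checker = [[255 * ((x + y) % 2) for x in range(5)] for y in range(5)]

flat_score = laplacian_variance(flat)
checker_score = laplacian_variance(checker)
```

Any cut-off for routing an image to restoration would need to be tuned per camera and per task, since the raw score depends on resolution, noise, and scene content.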

9. Automated Tagging, Visual Search, and Retrieval

Many organizations do not need a vision model merely to classify a picture once. They need to tag millions of images, retrieve similar ones, connect a photo to products or entities, and make visual collections searchable. That is where image recognition increasingly overlaps with visual search, embeddings, and large-scale content routing.

Google Cloud Vision's label and web-detection tools, along with Google's broader visual-search work, show that production vision is increasingly built around tagging, similarity, and retrieval rather than single-shot classification alone. Inference: one of the biggest shifts is that image recognition now helps people find and organize images, not merely name them.
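The retrieval side of this shift usually rests on embeddings: each image is mapped to a vector, and similar images end up close together. The sketch below shows a brute-force top-k search by cosine similarity over a tiny in-memory index. The two-dimensional vectors and image IDs are made up for illustration, the function names are hypothetical, and a real system would take embeddings from a vision model and typically use an approximate nearest-neighbour index instead of a full scan.

```python
import math


def normalize(vector):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


def top_k_similar(query, index, k=3):
    """Return the k most similar (image_id, score) pairs, best first.

    index: dict mapping image id -> embedding vector.
    """
    q = normalize(query)
    scored = [
        (sum(a * b for a, b in zip(q, normalize(vec))), image_id)
        for image_id, vec in index.items()
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(image_id, score) for score, image_id in scored[:k]]


# Toy embeddings: the first two items point in nearly the same direction.
index = {
    "red_shoe": [1.0, 0.0],
    "red_boot": [0.9, 0.1],
    "blue_hat": [0.0, 1.0],
}
results = top_k_similar([1.0, 0.0], index, k=2)
result_ids = [image_id for image_id, _ in results]
```

The same index can serve tagging, deduplication, and "more like this" search, which is why embeddings tend to become shared infrastructure instead of a per-feature component.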

10. AR and Assisted Perception

One of the most visible user-facing effects of image recognition is assisted perception. AR systems, camera-based helpers, and spatial interfaces all rely on recognition to identify surfaces, track images, anchor digital objects, and respond to what the user is looking at. In that sense, image recognition is becoming a real-time interface layer.

Apple's ARKit and visionOS image-tracking documentation make clear that modern spatial experiences depend on reliable visual recognition and tracking, while Google's visual-search work shows the same pattern on the search side. Inference: the most mainstream expression of image recognition may be systems that help people act in the moment, not just analyze images after the fact.

Sources and 2026 References

- Stanford HAI: AI Index Report 2025, Technical Performance.

- Google Cloud: Detect labels on images.

- arXiv: YOLOv10: Real-Time End-to-End Object Detection.

- Google Cloud: Localize objects in images.

- Google: AI Mode gets more visual with multimodal search.

- Google: Gemini 3 expands Search AI Mode.

- NIST: FRTE 1:1 Verification.

- Google Research: Multimodal medical AI.

- Google for Developers: MedGemma model card.

- arXiv: SAM 2: Segment Anything in Images and Videos.

- Google: Lens October 2024 updates.

- Adobe: New Photoshop innovations provide more control, realism, and precision.

- Google Cloud: Detect web entities and pages.

- Google: New advances in visual search.

- Apple: ARKit.

- Apple: Tracking images in 3D space.

Related Yenra Articles

- Computer Vision in Retail shows how recognition systems become operational when they are tied to stores, shelves, queues, and merchandising.

- Document Digitization covers a neighboring vision stack where OCR, layout analysis, and extraction matter more than generic photo recognition.

- Content-Based Image Retrieval extends the tagging and retrieval side of image recognition into search across large visual collections.

- Autonomous Vehicles shows what happens when recognition must run live, with low latency and high consequence.