Augmented reality is strongest in 2026 when it stops behaving like a novelty overlay and starts acting like a useful spatial interface. The real progress is in spatial computing, computer vision, multimodal context, natural input, translation, navigation, training, and accessibility layers that stay aligned to the physical world.

That matters because weak AR still feels like labels floating in front of your face. Strong AR understands surfaces, remembers locations, reacts to hands, eyes, and voice, and delivers information only when it helps. The difference is not just better graphics. It is better perception, better timing, and better workflow fit.

This update reflects the category as of March 22, 2026. It focuses on the parts of AR that feel most credible now: persistent anchoring, multimodal scene understanding, hand-eye-voice input, live translation, head-up navigation, industrial guidance, skill transfer, medical visualization, low-vision assistance, and the rise of lightweight AI-glasses experiences.

1. Spatial Computing and Persistent Anchoring

The biggest step forward in AR is that digital content is increasingly treated like something that belongs in a place, not just something that floats on top of a camera feed.

Official platform guidance now reflects this shift clearly. Apple says visionOS 26 can align apps and Quick Look content to physical surfaces, lock them in place, and restore them after restart, while ARKit adds shared world anchors for precise shared placement in the room. Google's current Android XR OpenXR guidance similarly exposes plane detection, anchors, and anchor persistence so content can remain positioned against real surfaces across sessions. Inference: the strongest 2026 direction in AR is durable spatial alignment, not one-off overlays.
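
The persistence pattern behind these platform features can be illustrated with a minimal, platform-agnostic sketch. It is not the ARKit or OpenXR API: the `Anchor` and `AnchorStore` names, the JSON file format, and the pose representation (a position plus a unit quaternion) are hypothetical choices for illustration. It shows only the core idea: give each placed item a stable ID tied to a world-space pose, save it, and look it up again by ID after a restart.

```python
import json
import uuid
from dataclasses import asdict, dataclass
from pathlib import Path
from typing import Dict, Tuple


@dataclass
class Anchor:
    """Hypothetical anchor record: a stable ID plus a world-space pose."""
    anchor_id: str
    position: Tuple[float, float, float]         # metres, world frame
    rotation: Tuple[float, float, float, float]  # unit quaternion (x, y, z, w)
    content: str                                 # label of the pinned content


class AnchorStore:
    """Hypothetical store that keeps anchors across app restarts."""

    def __init__(self, path: Path):
        self.path = path
        self.anchors: Dict[str, Anchor] = {}

    def place(self, position, rotation, content: str) -> str:
        # A stable ID lets the app find the same placement again later.
        anchor_id = str(uuid.uuid4())
        self.anchors[anchor_id] = Anchor(anchor_id, tuple(position), tuple(rotation), content)
        return anchor_id

    def save(self) -> None:
        # Serialize every anchor so the placement survives a restart.
        records = [asdict(a) for a in self.anchors.values()]
        self.path.write_text(json.dumps(records))

    @classmethod
    def load(cls, path: Path) -> "AnchorStore":
        # Rebuild anchors by ID. A real runtime would also relocalize each
        # pose against its current map of the room before rendering content.
        store = cls(path)
        if path.exists():
            for rec in json.loads(path.read_text()):
                anchor = Anchor(rec["anchor_id"], tuple(rec["position"]),
                                tuple(rec["rotation"]), rec["content"])
                store.anchors[anchor.anchor_id] = anchor
        return store
```

In use, an app would call `place(...)` when the user pins something, `save()` before exiting, and `AnchorStore.load(path)` on the next launch to recover the same ID and pose. The hard part that real platforms solve, recognizing the room again so the saved pose lines up with the physical surface, is deliberately left out.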

2. Scene Understanding and Multimodal Search

AR becomes much more useful when it can understand what the user is looking at, what they are asking, and what part of the scene actually matters.

Google reported in February 2025 that Lens is already used for more than 20 billion visual searches every month, and that AI Overviews are expanding Lens's ability to explain more novel or unique images without requiring a carefully worded query. At I/O 2025, Google also showed Android XR glasses paired with Gemini using cameras and microphones to understand what the wearer sees and hears, then surface information through audio and an optional in-lens display. Inference: AR is getting stronger when visual search, scene understanding, and conversational assistance converge into one multimodal layer.

3. Hands, Eyes, and Voice as the Default Interface

The AR interface is increasingly becoming multimodal by default, with hands, eyes, and voice working together instead of forcing users back into phone-era menu patterns.

Android XR's current OpenXR and Unity guidance lists hand tracking, eye gaze interaction, gesture-based hand interaction such as pinching, swiping, and pointing, and eye-tracked foveation among the supported capabilities, while noting that hand input is the default interaction pattern. Apple's current visionOS guidance likewise says fully immersive apps can use hand tracking up to 90 Hz with no additional code. Android XR's Gemini guidance also frames hands, gaze, and voice as the natural inputs for the system. Inference: strong AR design in 2026 assumes multimodal input from the start instead of bolting it on later.
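
The look-and-pinch pattern can be sketched in a few lines: the gaze ray picks a target, and a pinch (thumb tip close to index tip) confirms it. This is not the Android XR or visionOS API, and on real platforms much of this happens inside the system. The joint positions, the sphere-shaped targets, and the 2 cm press and 3 cm release thresholds are assumptions chosen for illustration. The gap between the two thresholds (hysteresis) keeps tracking jitter from toggling the pinch on and off.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


@dataclass
class Target:
    name: str
    center: Vec3
    radius: float  # metres; hypothetical sphere-shaped hit volume


def gaze_hit(origin: Vec3, direction: Vec3, targets: List[Target]) -> Optional[Target]:
    """Return the nearest target whose sphere the gaze ray intersects."""
    norm = math.sqrt(dot(direction, direction))
    d = (direction[0] / norm, direction[1] / norm, direction[2] / norm)
    best, best_t = None, math.inf
    for tgt in targets:
        oc = sub(tgt.center, origin)
        t = dot(oc, d)                     # distance along the ray to the closest point
        if t < 0:
            continue                       # target is behind the viewer
        closest_sq = dot(oc, oc) - t * t   # squared distance from ray to sphere centre
        if closest_sq <= tgt.radius ** 2 and t < best_t:
            best, best_t = tgt, t
    return best


class PinchDetector:
    """Pinch detection with hysteresis between press and release distances."""

    def __init__(self, press_m: float = 0.02, release_m: float = 0.03):
        self.press_m, self.release_m = press_m, release_m
        self.pinched = False

    def update(self, thumb_tip: Vec3, index_tip: Vec3) -> bool:
        """Return True only on the frame where a new pinch begins."""
        gap = math.dist(thumb_tip, index_tip)
        if not self.pinched and gap < self.press_m:
            self.pinched = True
            return True
        if self.pinched and gap > self.release_m:
            self.pinched = False
        return False
```

Each frame, an app following this pattern would call `gaze_hit` with the current eye ray and pass the tracked fingertip positions to `PinchDetector.update`; a selection fires when the update returns `True` while a target is under the gaze.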

4. Live Translation and Caption Layers

Live language support is becoming one of the clearest everyday uses for AR because it turns the world itself into a translated, captioned interface.

Google announced in August 2025 that Translate can now support back-and-forth live conversations in more than 70 languages, and said people already translate around 1 trillion words each month across Translate, Search, Lens, and Circle to Search. Meta said on April 23, 2025, that live translation on Ray-Ban Meta glasses was rolling out broadly across its markets for English, French, Italian, and Spanish, alongside broader visual-question support. Inference: translation is moving from a separate app action into a live ambient layer that fits naturally inside AR and AI-glasses experiences.

5. Navigation and Wayfinding with More Head-Up Time

AR navigation is strongest when it keeps attention on the environment instead of constantly asking users to look down at a separate screen.

A 2024 Applied Ergonomics study of maritime navigation found that AR reduced head-down time by a factor of 2.67 and reduced head-down occurrences by 62% compared with non-AR scenarios, while not significantly changing mean situation awareness scores. That is an important result because it suggests AR's most reliable gain is often attentional: it keeps operators looking at the environment longer without forcing them to bounce between instruments and the outside world. Inference: the practical promise of AR wayfinding is better head-up behavior first, with smarter route assistance layered on top.
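
The two head-down figures measure different things, and a quick calculation shows how they relate. Read in the usual way, a reduction "by a factor of 2.67" means AR head-down time is the baseline divided by 2.67, about 37% of baseline, which is a cut of roughly 62.5%. The 62% figure is a separate measure: how often operators looked down, not for how long. The 100-second baseline below is a made-up placeholder, not a value from the study.

```python
def factor_to_percent_reduction(factor: float) -> float:
    """Convert an 'N-fold reduction' into a percentage reduction."""
    return (1.0 - 1.0 / factor) * 100.0


baseline_head_down_s = 100.0                 # hypothetical baseline, in seconds
ar_head_down_s = baseline_head_down_s / 2.67  # head-down time with AR

print(f"AR head-down time: {ar_head_down_s:.1f} s")
print(f"Reduction in head-down time: {factor_to_percent_reduction(2.67):.1f}%")
```

This prints about 37.5 s and a 62.5% reduction, so the duration and frequency results happen to land at nearly the same percentage.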

6. Industrial Guidance and Remote Expert Support

AR is most credible in industry when it attaches instructions, checks, and expert guidance to the actual equipment and step the worker is handling.

A 2024 study comparing head-mounted and handheld AR for guided assembly found that both display types could successfully support assembly work, with the head-mounted setup showing slightly better descriptive results for completion time and number of failures committed. A separate user study in plastics manufacturing found that workers could successfully tailor an AR-based remote assistance system to distinct real-world scenarios, including troubleshooting a running injection molding machine and tool maintenance. Inference: the strongest industrial AR systems are not one-size-fits-all overlays; they are configurable workflow tools that fit the equipment, the task, and the worker's current problem.

7. Training and Skill Transfer

One of the most evidence-backed uses of AR is guided learning, especially when AI helps adapt difficulty, feedback, and practice to the learner.

A 2025 systematic review and meta-analysis of mixed reality in vocational education and training synthesized 53 studies and found significant positive effects on behavioral outcomes (d = 0.40), cognitive outcomes (d = 0.84), and affective outcomes (d = 0.65) compared with control groups. A 2026 systematic review of AR-enhanced instructional approaches then found that inquiry-based and collaborative AR designs improve engagement, understanding, and academic performance across the 26 peer-reviewed studies it analyzed. Inference: AR training is strongest when it is embedded in pedagogy and measurable practice rather than treated as a flashy visualization layer.
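
Effect sizes such as d = 0.84 are standardized mean differences. In the simple textbook form, Cohen's d divides the difference between group means by a pooled standard deviation; if both groups are roughly normal with equal spread, the standard normal CDF of d gives the share of the treated group expected to score above the control-group mean (Cohen's U3). The sketch below assumes that textbook form. The meta-analysis may have used a bias-corrected variant such as Hedges' g, and any raw scores passed to `cohens_d` would be invented for illustration.

```python
import math
from statistics import mean, stdev


def cohens_d(treated: list, control: list) -> float:
    """Standardized mean difference using the pooled sample standard deviation."""
    n1, n2 = len(treated), len(control)
    s1, s2 = stdev(treated), stdev(control)
    pooled = math.sqrt(((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / (n1 + n2 - 2))
    return (mean(treated) - mean(control)) / pooled


def u3(d: float) -> float:
    """Share of the treated group above the control mean, assuming normality."""
    return 0.5 * (1.0 + math.erf(d / math.sqrt(2.0)))


# Translate the pooled effects reported in the meta-analysis into U3 terms.
for label, d in [("behavioral", 0.40), ("cognitive", 0.84), ("affective", 0.65)]:
    print(f"{label}: d = {d:.2f}, about {u3(d):.0%} of MR learners above the control mean")
```

Under these assumptions, the cognitive effect of d = 0.84 corresponds to roughly 80% of mixed-reality learners scoring above the average control learner, against 50% if there were no effect.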

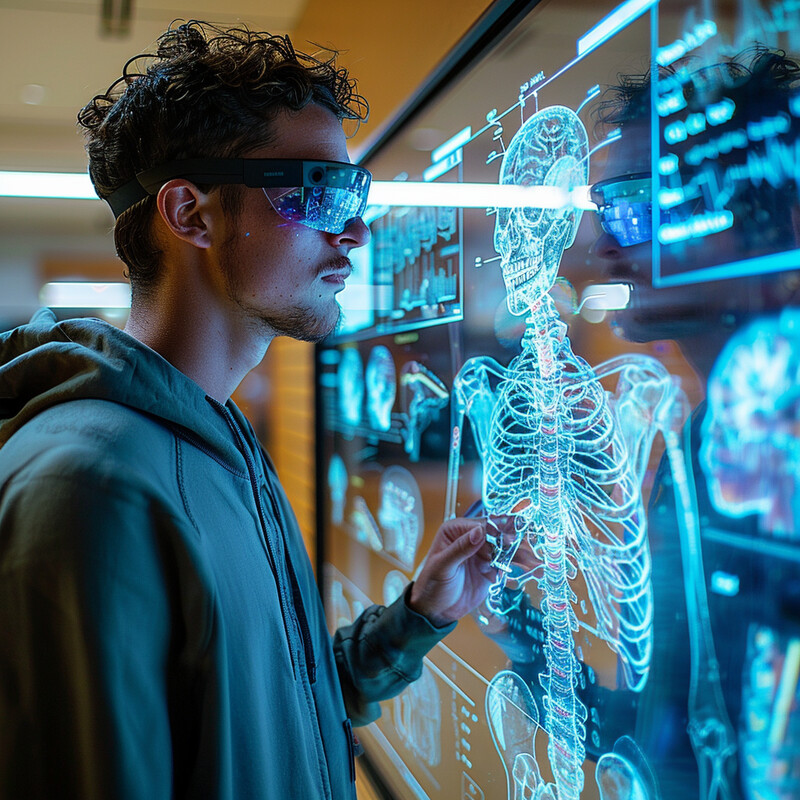

8. Medical Visualization and Procedure Guidance

AR has become especially compelling in medicine when it improves spatial guidance and visualization without pretending to automate the clinician out of the loop.

A randomized multicenter trial in spinal surgery covering 150 patients found that excellent-or-good pedicle screw placement reached 98.0% in the AR-guided group versus 91.7% in the control group, with superiority confirmed statistically. A 2024 orthognathic surgery study likewise found that an AR approach using a small-workspace infrared tracking camera matched the accuracy of conventional drilling guides and outperformed ArUco marker-based navigation. Inference: some of the strongest AR evidence in 2026 comes from high-value visualization and navigation tasks where spatial precision matters directly to outcomes.
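
The absolute gap between 98.0% and 91.7% looks modest, but it hides a larger relative change in placements that fell outside the excellent-or-good grade: 8.3% in the control group versus 2.0% with AR guidance, roughly a fourfold difference. The arithmetic is sketched below with a hypothetical helper. The summary does not say whether these rates are counted per screw or per patient, so the measures should be read per whatever unit the trial graded.

```python
def compare_rates(treated_pct: float, control_pct: float) -> dict:
    """Turn two success percentages into simple comparison measures."""
    treated_fail = 100.0 - treated_pct   # share outside the success grade, AR group
    control_fail = 100.0 - control_pct   # share outside the success grade, control group
    return {
        "absolute_difference_pp": treated_pct - control_pct,
        "relative_reduction_in_failures_pct": (control_fail - treated_fail) / control_fail * 100.0,
        "failure_ratio_control_to_treated": control_fail / treated_fail,
    }


for name, value in compare_rates(98.0, 91.7).items():
    print(f"{name}: {value:.2f}")
```

A 6.3-point absolute gain therefore corresponds to roughly three quarters fewer suboptimal placements, which is why small-looking percentage differences can matter clinically.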

9. Assistive Vision and Accessibility Layers

AR is increasingly useful as an assistive technology because it can expand fields of view, highlight hazards, and make real-world tasks more navigable for people with low vision.

A 2025 study of the Retiplus AR low-vision aid in 13 patients with retinitis pigmentosa found significant visual-field expansion in all four quadrants, with greater horizontal enlargement (21.38° ± 12.94°) than vertical (15.00° ± 10.08°), and participants reported improved mobility and daily activity performance in low-light settings. Earlier clinical work also found median distance visual acuity improving from 0.9 logMAR to 0.2 logMAR and median near visual acuity from 0.4 logMAR to 0.1 logMAR with AR assistance. Inference: assistive AR is one of the clearest examples of augmentation in the literal sense, where the system makes the world more usable rather than merely more decorated.
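
logMAR values are easier to interpret once converted. logMAR is the base-10 logarithm of the minimum angle of resolution, so 0.0 corresponds to 20/20 and each 0.1 step is a factor of about 1.26 in the smallest readable detail. The helpers below apply that generic conversion to Snellen-equivalent notation for a 20-foot chart; they are not code from the cited study.

```python
def logmar_to_snellen_denominator(logmar: float, test_distance_ft: float = 20.0) -> float:
    """Snellen-equivalent denominator: 20 * 10**logMAR for a 20-ft chart."""
    return test_distance_ft * 10 ** logmar


def logmar_to_decimal(logmar: float) -> float:
    """Decimal acuity: 1.0 equals 20/20, lower values mean poorer vision."""
    return 10 ** (-logmar)


# Median acuities reported with and without AR assistance.
for label, before, after in [("distance", 0.9, 0.2), ("near", 0.4, 0.1)]:
    print(
        f"{label}: 20/{logmar_to_snellen_denominator(before):.0f} -> "
        f"20/{logmar_to_snellen_denominator(after):.0f} "
        f"(decimal {logmar_to_decimal(before):.2f} -> {logmar_to_decimal(after):.2f})"
    )
```

In Snellen-equivalent terms, the distance result moves from about 20/160 to about 20/32, and the near result from about 20/50 to about 20/25.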

10. AI Glasses and Everyday Utility

The category is shifting from occasional phone-based AR moments toward lightweight, always-available glasses experiences that add help in small, useful bursts.

Google's Android XR guidance now explicitly separates immersive experiences from augmented experiences on AI glasses, and says these lightweight systems should help users in everyday life on the go, at home, or at work. Google's I/O 2025 demo showed Gemini-powered glasses that can see and hear the world, while Android XR's Gemini guidance emphasizes voice as part of a hands-gaze-voice input stack. Snap's Q3 2025 results show that AR is already operating at consumer scale: more than 350 million Snapchatters engaged with AR every day on average, AR Lenses were used 8 billion times per day, and more than 500 million users had engaged with GenAI Lenses over 6 billion times. Inference: the strongest 2026 AR story is not a single killer headset. It is the steady normalization of lightweight, AI-assisted, context-aware augmentation across apps, glasses, and camera platforms.

Related AI Glossary

- Spatial Computing explains the broader shift from flat app interfaces toward software that understands rooms, surfaces, and spatial context.

- Extended Reality (XR) covers the umbrella category that includes augmented, mixed, and virtual reality experiences.

- Computer Vision covers the perception layer that lets AR systems detect objects, segment scenes, and understand the camera view.

- Gesture Recognition explains how touchless hand and body input becomes part of a usable AR interface.

- Gaze Tracking covers the eye-driven interaction patterns that increasingly shape hands-free spatial interfaces.

- SLAM explains how systems localize themselves while building or updating a map of the surrounding space.

- Multimodal Learning covers the AI approach that joins text, images, audio, and sensor inputs into one reasoning system.

Sources and 2026 References

- Apple Developer: What's New in visionOS.

- Android Developers: Android XR.

- Android Developers: Develop with OpenXR for Android XR.

- Android Developers: Develop with Unity for Android XR.

- Android Developers: Enhance your Android XR app with AI using Gemini.

- Google: Android XR glasses demo from I/O 2025.

- Google: Lens updates and AI Overviews.

- Google: AI-powered live translation in Google Translate.

- Meta: Meta AI and live translation rollout on Ray-Ban Meta glasses.

- Snap: Q3 2025 Financial Results.

- PubMed: The effects of Augmented Reality on operator Situation Awareness and Head-Down Time.

- Springer: Comparing head-mounted and handheld augmented reality for guided assembly.

- ScienceDirect: Supporting tailorability in augmented reality based remote assistance in the manufacturing industry.

- Springer: Mixed reality in vocational education and training meta-analysis.

- PubMed: Systematic review of AR-enhanced instructional approaches.

- PubMed: AR navigation improves pedicle screw placement accuracy.

- PubMed: Augmented reality navigation in orthognathic surgery.

- Photonics: AR visual aid for peripheral visual field loss.

- PubMed: AR technology for improving visual acuity in low vision.

Related Yenra Articles

- Language Translation Services extends the live multilingual layer that increasingly fits naturally inside AR and AI-glasses workflows.

- Virtual Reality Training covers the immersive end of the training stack that often complements AR guidance and rehearsal.

- Adaptive User Interfaces explores the gaze, context, and attention-aware interaction patterns that also shape strong AR systems.

- Designing Interactive Experiences shows how spatial and multimodal interaction becomes more useful when the experience design is intentional.

- Autonomous Surgical Robots extends the medical-guidance story into surgeon-in-the-loop robotics, imaging, and procedure support.