AI is strongest in localization and geopolitical analysis when it is used as a multilingual evidence pipeline rather than as a magic system that somehow "understands the world" on its own. In 2026, the most credible stacks combine machine translation, geoparsing, cross-lingual information retrieval, named entity recognition, text summarization, GIS, and sentiment analysis to find local-language reporting, geolocate it, connect it to actors and places, and surface what deserves expert review first.

That still leaves major limits. Local media can be censored or noisy, translations can flatten cultural nuance, event pipelines can confuse rumor with fact, and forecasting systems can become dangerously persuasive if uncertainty is hidden. The best deployments therefore stay inspectable: they preserve source text, expose provenance, score uncertainty, and keep regional expertise in the loop rather than replacing it.
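One way to picture an "inspectable" deployment is as a record that never separates a machine translation from its original text, its source, and a confidence score. The sketch below is illustrative only: the `EvidenceItem` fields, the `review_queue` helper, and the 0.7 threshold are assumptions made for this example, not part of any system named in this article.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EvidenceItem:
    source_text: str   # original-language text, kept verbatim
    source_lang: str   # language tag such as "uk" or "th"
    source_url: str    # provenance: where the text was collected
    translation: str   # machine translation shown to the analyst
    confidence: float  # hypothetical pipeline score in [0, 1]


def review_queue(items: List[EvidenceItem], threshold: float = 0.7) -> List[EvidenceItem]:
    """Return low-confidence items, least certain first, for expert review."""
    flagged = [item for item in items if item.confidence < threshold]
    return sorted(flagged, key=lambda item: item.confidence)


items = [
    EvidenceItem("оригінал A", "uk", "https://example.org/a", "original A", 0.92),
    EvidenceItem("оригінал B", "uk", "https://example.org/b", "original B", 0.41),
    EvidenceItem("ต้นฉบับ C", "th", "https://example.org/c", "original C", 0.63),
]
for item in review_queue(items):
    print(item.source_url, item.confidence)
```

Because the original text and URL travel with the translation, a regional expert can always check what the model actually saw.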

This update reflects the field as of March 20, 2026. It focuses on the parts of the category that feel most operational now: multilingual translation, culture-aware adaptation, geoparsing, map-linked media analysis, always-on monitoring, conflict and political-risk forecasting, multilingual extraction, automated mapping, local-language summarization, cross-lingual retrieval, localized analyst interfaces, event extraction, disinformation tracking, early-warning systems, scenario testing, and language-specific domain adaptation.

1. AI-Powered Machine Translation

Machine translation is strongest as a first-pass access layer for local-language material, not as a final adjudicator of meaning. In geopolitical work, the practical win is speed: analysts can screen far more local reporting, official statements, and community posts before deciding what requires expert translation or regional review.

Meta's NLLB work showed that massively multilingual translation can scale to 200 languages, materially improving access for many lower-resource pairs, while recent low-resource domain-adaptation research shows that general multilingual skill still does not solve domain drift by itself. Inference: translation is now strong enough to open far more local reporting to analysis, but the most reliable pipelines still adapt models to region, domain, and terminology rather than trusting generic translation everywhere.

2. Cultural Nuance Adaptation

Localization gets stronger when systems are trained to respect cultural norms, social assumptions, and region-specific framing instead of only translating literal wording. The hard part is not vocabulary. It is knowing when a phrase carries social meaning, insult, irony, reverence, or political positioning that does not survive direct translation cleanly.

ACL 2025 work on cultural learning-based adaptation shows that language models can be tuned toward better cultural-value alignment, while MT Summit 2025 findings on culture-specific items still show persistent failure modes around proper names, social references, and culture-bound concepts. Inference: the field is improving, but culture-aware localization remains an adaptation problem, not a checkbox you get for free from using a multilingual model.

3. Geo-Referenced Content Analysis

Geo-referenced analysis becomes much more useful when language pipelines can connect place mentions in text to actual coordinates, boundaries, and map layers. In practice, that means turning "a protest near the provincial capital" or "shelling outside a refinery" into something that can be searched, compared, and mapped against other evidence.

The 2024 WHLL paper shows that large-scale geoparsing corpora can now be built from Wikipedia hyperlinks at a scale of 1.3 million articles, while GDELT's geocoding infrastructure reflects an operational push to resolve locations across multilingual global news in near real time. Inference: geoparsing is shifting from a niche NLP subtask into a practical backbone for map-linked monitoring and regional triage.
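The core step in geoparsing, resolving a place mention to coordinates, can be sketched with a toy gazetteer lookup. This is a minimal illustration under stated assumptions: the `GAZETTEER` table and `geoparse` function are hypothetical, the coordinates are approximate, and production geoparsers additionally handle multi-word names, ambiguity between same-named places, and non-Latin scripts.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical three-entry gazetteer: lowercase place name -> approximate (lat, lon).
GAZETTEER: Dict[str, Tuple[float, float]] = {
    "kyiv": (50.45, 30.52),
    "kharkiv": (49.99, 36.23),
    "bangkok": (13.76, 100.50),
}


def geoparse(text: str) -> List[Tuple[str, float, float]]:
    """Return (place, lat, lon) for every single-word gazetteer match, in text order."""
    hits = []
    for token in re.findall(r"\w+", text.lower()):
        if token in GAZETTEER:
            lat, lon = GAZETTEER[token]
            hits.append((token, lat, lon))
    return hits


print(geoparse("Shelling reported outside Kharkiv, officials in Kyiv said."))
```

Once mentions carry coordinates, they can be joined against map layers or compared with other geolocated evidence.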

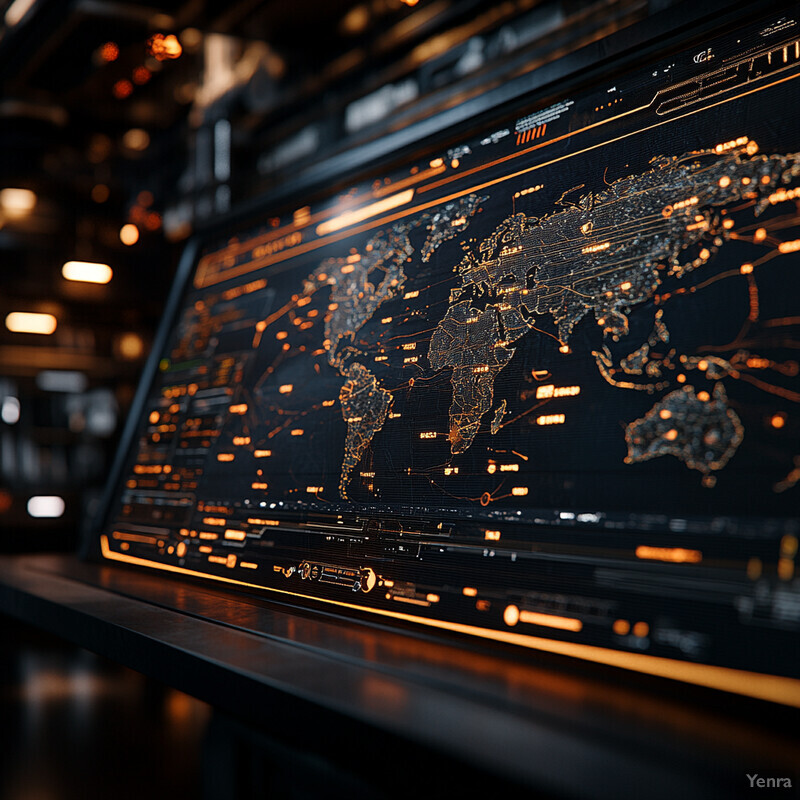

4. Real-Time Media Monitoring

Real-time monitoring is strongest when AI is used to triage multilingual media volume into story clusters, regional anomalies, entity shifts, and narrative changes that analysts can verify quickly. The gain is not infinite awareness. It is faster surfacing of what changed, where, and in which language ecosystem.

GDELT remains one of the clearest examples of at-scale multilingual monitoring, tracking global media in more than 100 languages with frequent updates, while SemEval 2025's multilingual narrative-extraction task shows how monitoring is evolving beyond keyword alerting toward role, framing, and manipulation analysis. Inference: the strongest monitoring systems now combine ingestion scale with narrative-aware classification instead of relying on sentiment spikes or keyword counts alone.
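"Faster surfacing of what changed, where, and in which language ecosystem" can be approximated, at its simplest, by comparing today's article volume per language with recent history. The sketch below uses a plain z-score. The function names, the threshold of 3.0, and the minimum standard deviation of 1.0 are assumptions for illustration, and this is a far simpler signal than the narrative-aware classification described above.

```python
from statistics import mean, pstdev
from typing import Dict, List


def volume_zscore(history: List[int], today: int, min_sigma: float = 1.0) -> float:
    """Z-score of today's count against history, with a floor on the spread."""
    sigma = max(pstdev(history), min_sigma)  # floor avoids division by zero on flat history
    return (today - mean(history)) / sigma


def flag_anomalies(counts_by_lang: Dict[str, List[int]], threshold: float = 3.0) -> Dict[str, float]:
    """Treat the last count in each series as 'today' and flag unusual spikes."""
    flagged = {}
    for lang, counts in counts_by_lang.items():
        *history, today = counts
        if len(history) < 2:
            continue  # not enough history to judge
        z = volume_zscore(history, today)
        if z >= threshold:
            flagged[lang] = z
    return flagged


daily_counts = {
    "uk": [10, 12, 11, 9, 10, 40],  # sharp jump on the last day
    "th": [5, 6, 5, 6, 5, 6],       # steady coverage
}
print(flag_anomalies(daily_counts))
```

A flagged language is a prompt for an analyst to look, not a finding in itself.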

5. Political Risk Forecasting

Political-risk forecasting gets stronger when models expose uncertainty and are treated as decision support rather than prediction theater. The credible use case is not “AI knows the next coup.” It is scoring where violence, instability, or electoral stress appears more likely so analysts can focus attention and contingency planning sooner.

VIEWS now operates as an open, AI-powered early-warning platform that emphasizes probabilistic forecasting and uncertainty, while ACLED's CAST provides monthly country-level forecasts of political violence events up to six months ahead. Inference: political-risk AI is getting stronger where it is framed as a transparent, rolling warning layer with measurable forecast windows, not as deterministic geopolitical prophecy.
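Probabilistic forecasts are only "measurable" if they are scored against what actually happened. One standard scoring rule is the Brier score, the mean squared difference between forecast probabilities and binary outcomes (lower is better). The sketch below is a generic illustration with made-up numbers; it does not describe how VIEWS or CAST evaluate their own forecasts.

```python
from typing import Sequence


def brier_score(probs: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean squared error between forecast probabilities and 0/1 outcomes."""
    if len(probs) != len(outcomes) or not probs:
        raise ValueError("probs and outcomes must be non-empty and the same length")
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


# Made-up example: forecast probability of unrest in four regions, and what occurred.
forecast = [0.9, 0.1, 0.7, 0.2]
observed = [1, 0, 0, 0]
print(brier_score(forecast, observed))

# An uninformative forecaster that always says 0.5 scores 0.25 on any outcomes.
print(brier_score([0.5] * 4, observed))
```

Comparing a system against the constant-0.5 baseline is a quick check on whether its stated probabilities carry any information.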

6. Enhanced Named Entity Recognition (NER)

Multilingual NER is one of the most important quiet upgrades in geopolitical analysis because so much of the workflow depends on catching actors, organizations, parties, militias, locations, and aliases correctly. Better entity extraction means better search, better graph construction, and better tracking of emerging actors across languages.

Work on low-resource NER shows that transfer from related language families can materially improve recognition quality, and mCL-NER demonstrates that contrastive alignment across 40 languages can push cross-lingual NER performance higher on XTREME-style benchmarks. Inference: entity extraction is becoming more robust, but it still benefits most from region-aware adaptation where local aliases, transliterations, and emergent organizations change quickly.
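Part of the alias problem is mechanical: the same actor appears with different casing, spacing, and diacritics. A minimal normalization pass, sketched below with Python's standard `unicodedata` module, can group such surface variants before any model-based linking. The helper names are hypothetical, and this does not address transliteration across scripts (for example Cyrillic to Latin), which needs dedicated tooling.

```python
import unicodedata
from typing import Dict, List


def normalize_name(name: str) -> str:
    """Strip diacritics, fold case, and collapse whitespace."""
    decomposed = unicodedata.normalize("NFKD", name)
    without_marks = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return " ".join(without_marks.casefold().split())


def group_mentions(mentions: List[str]) -> Dict[str, List[str]]:
    """Group raw mentions that share the same normalized form."""
    groups: Dict[str, List[str]] = {}
    for mention in mentions:
        groups.setdefault(normalize_name(mention), []).append(mention)
    return groups


print(group_mentions(["Erdoğan", "ERDOGAN", " Erdogan ", "Macron"]))
```

Normalization of this kind can also merge names that are genuinely different, so the grouped mentions are best treated as candidates for review.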

7. Automated Geopolitical Mapping

Automated mapping gets stronger when text-derived signals are fused with spatial layers such as buildings, roads, infrastructure, and population distributions. The strongest systems do not stop at telling you that something happened. They help show what physical areas, assets, and communities may be affected.

A 2025 Bangkok case study shows how open geospatial data, remote sensing, and machine learning can support building-level population estimation in data-limited settings, while Communications Earth & Environment 2025 demonstrates scalable conflict-damage mapping from open Sentinel-1 time series in Ukraine. Inference: geopolitical mapping is getting stronger because open geodata and satellite AI are reducing the gap between media signals and map-ready operational layers.

8. Local Language Summarization

Summarization is strongest when it compresses multilingual evidence without flattening disagreement, hedging, or region-specific framing. In geopolitical work, the hard part is not making a summary shorter. It is deciding which claims, sources, and uncertainties must survive compression.

GlobeSumm highlights how difficult it remains to unify multilingual, cross-lingual, and multi-document news summarization in one benchmark, while NAACL 2025 introduces a mixed-language multi-document news dataset that better reflects how international events are actually covered across languages. Inference: summarization is becoming more useful for geopolitical analysis because the research community is finally benchmarking the mixed-language news clusters that analysts already work with every day.

9. Cross-Lingual Information Retrieval

Cross-lingual information retrieval is one of the clearest leverage points in the stack because analysts rarely know in advance which language will hold the most useful evidence. The strongest retrieval systems increasingly work without forcing full manual translation of every document first.

CLIRudit 2025 shows that large dense retrievers can achieve zero-shot cross-lingual retrieval performance comparable to translated-query baselines in academic search, while COLING 2025 work on cross-dialect retrieval shows how quickly performance degrades when local knowledge lives in highly variable, low-resource dialects. Inference: cross-lingual retrieval is genuinely useful now, but dialect and script variation remain real bottlenecks exactly where local intelligence is most valuable.
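Dense cross-lingual retrieval rests on one idea: a multilingual encoder maps queries and documents from different languages into a shared vector space, and documents are ranked by similarity to the query. The sketch below shows only the ranking step, using cosine similarity over hand-written toy vectors. The vectors and document IDs are invented for illustration; a real system would obtain embeddings from a trained multilingual encoder.

```python
import math
from typing import Dict, List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank(query_vec: List[float], doc_vecs: Dict[str, List[float]], top_k: int = 3) -> List[Tuple[str, float]]:
    """Return the top_k (doc_id, score) pairs, most similar first."""
    scored = [(doc_id, cosine(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy embeddings standing in for encoder output; the language is irrelevant once embedded.
docs = {
    "doc_es": [0.9, 0.1, 0.0],
    "doc_ar": [0.1, 0.9, 0.1],
    "doc_fr": [0.8, 0.2, 0.1],
}
english_query = [1.0, 0.0, 0.0]
for doc_id, score in rank(english_query, docs):
    print(doc_id, round(score, 3))
```

The dialect bottleneck described above shows up at the encoder: if a dialect is poorly represented in training data, its documents land in the wrong part of the space and ranking cannot recover them.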

10. Adaptive User Interfaces

Adaptive interfaces are strongest when they localize evidence presentation itself: script direction, language tagging, transliteration choices, evidence panes, map layers, and role-based views for different analysts. In other words, the interface should help users work across languages and evidence types without forcing a one-size-fits-all dashboard.

W3C's current internationalization best-practices guidance makes clear that language metadata, bidi handling, and locale-safe presentation are foundational requirements for multilingual interfaces, while AdaptUI 2024 shows how context-aware UI generation can be structured around user and environmental context. Inference: AI analyst interfaces become genuinely stronger only after the localization plumbing is right; otherwise, personalization just adds polish on top of multilingual failure modes.
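As a small example of the "localization plumbing" involved, an evidence pane needs to know whether a snippet should be rendered right-to-left. A common heuristic is to use the first strongly directional character, which Python's standard `unicodedata.bidirectional` can identify. The `base_direction` and `tag_snippet` helpers below are hypothetical, and explicit language and direction metadata from the source should take precedence over this guess whenever it is available.

```python
import unicodedata
from typing import Dict


def base_direction(text: str) -> str:
    """Guess 'rtl' or 'ltr' from the first strongly directional character."""
    for ch in text:
        bidi_class = unicodedata.bidirectional(ch)
        if bidi_class in ("R", "AL"):  # Hebrew-type and Arabic-type letters
            return "rtl"
        if bidi_class == "L":          # strong left-to-right letters
            return "ltr"
    return "ltr"  # no strong characters found; fall back to left-to-right


def tag_snippet(text: str, lang: str) -> Dict[str, str]:
    """Attach language and direction metadata for the display layer."""
    return {"text": text, "lang": lang, "dir": base_direction(text)}


print(tag_snippet("مرحبا بالعالم", "ar"))
print(tag_snippet("Hello world", "en"))
```

Digits and punctuation are skipped because they are not strongly directional, so a snippet that opens with a date or a number is still classified by its first letter.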

11. Event Extraction and Classification

Event extraction is getting stronger because models are learning to handle not just who and where, but what happened, when, and with what evidence. For geopolitical work, that matters because early warning and trend analysis depend on structured event streams rather than raw news piles.

LEMONADE brings conflict event extraction closer to real-world coverage with nearly 40,000 events across 20 languages and 171 countries, while CASE 2023 shows that battle-event detection and geocoding from war-related Telegram messages can be evaluated against ACLED-style incident distributions. Inference: multilingual event extraction is no longer just an English benchmark problem; it is becoming a practical layer for global conflict and protest monitoring.
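A "structured event stream" means each report is reduced to a record of what happened, where, and when, with its evidence attached. The sketch below shows one possible record shape and a naive merge of reports that describe the same event. The `Event` fields and the exact-match merge key are assumptions for illustration and do not reproduce the LEMONADE or ACLED schemas.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Event:
    event_type: str  # what happened, e.g. "protest" or "shelling"
    location: str    # where, as a resolved place name
    day: date        # when
    source_url: str  # evidence for this report


def merge_reports(events: List[Event]) -> Dict[Tuple[str, str, date], List[str]]:
    """Group reports by (type, place, day) and collect their source URLs."""
    merged: Dict[Tuple[str, str, date], List[str]] = {}
    for event in events:
        key = (event.event_type, event.location.casefold(), event.day)
        merged.setdefault(key, []).append(event.source_url)
    return merged


reports = [
    Event("protest", "Bangkok", date(2026, 3, 1), "https://example.org/th-1"),
    Event("protest", "bangkok", date(2026, 3, 1), "https://example.org/en-7"),
    Event("shelling", "Kharkiv", date(2026, 3, 2), "https://example.org/uk-3"),
]
for key, sources in merge_reports(reports).items():
    print(key, len(sources))
```

Keeping every source URL on the merged record lets an analyst see how many independent reports support an event rather than just a count.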

12. Disinformation Detection and Localization

Disinformation analysis is strongest when it combines multilingual claim retrieval, narrative classification, source triage, and local context instead of looking only for generic “fake news” cues. The real task is finding which claims, languages, and communities are being targeted, not just labeling content as suspicious.

The VIGILANT project reflects an operational push toward multilingual disinformation tooling for law-enforcement and investigative contexts, while SemEval 2025 Task 7 formalizes multilingual and cross-lingual fact-checked claim retrieval as a core benchmark problem. Inference: disinformation detection is maturing from standalone classifiers toward retrieval-heavy systems that map claims across languages, fact-check archives, and local narrative ecosystems.

13. Early Warning Systems for Crises

Early-warning systems get stronger when AI is used to connect hazards, exposure, vulnerability, and communication pathways instead of forecasting only one isolated variable. In geopolitical and humanitarian settings, that means tying weather, displacement, food stress, conflict, and institutional response into the same warning chain.

Nature Communications 2025 argues for integrated AI that links hazard, impact, and communication layers in complex climate-risk warnings, while WMO's Early Warnings for All initiative provides the official global push to expand multi-hazard warning coverage. Inference: the strongest early-warning systems are becoming modular and multi-hazard, with AI serving as connective tissue across forecasting, local context, and risk communication rather than as a monolithic black box.

14. Policy Simulation and Scenario Testing

Policy simulation is useful when it stays clearly in the realm of scenario exploration rather than pretending to be an oracle. The strongest AI use here is to accelerate what-if analysis, expose assumptions, and help analysts compare pathways before decisions are made.

PolicySimEval 2025 is notable precisely because current systems still perform poorly on realistic policy-simulation tasks, and NAACL 2025 work on simulating survey-response distributions shows some progress but still highlights difficulty on unseen questions and generalization. Inference: this area is promising but early; AI can help structure and stress-test scenarios, yet current systems remain far from autonomous policy evaluation.

15. Language-Specific Domain Adaptation

Language-specific domain adaptation is one of the most practical ways to improve geopolitical AI because the task usually fails at the intersection of language and topic. A model can be broadly multilingual and still do poorly on local political slang, party names, military terminology, or region-specific administrative language.

Recent empirical work on language adaptation for LLaMA2 lays out continued pre-training, vocabulary expansion, and instruction tuning as practical levers for low-resource languages, while findings on cross-lingual domain adaptation in Japanese medicine and Nepali continued learning show that targeted adaptation can materially improve under-served language performance. Inference: geopolitical AI stacks get stronger when teams adapt models to specific language-domain combinations instead of relying on one general multilingual checkpoint for every region and topic.

Related AI Glossary

Helpful terms for this page include Machine Translation, Geoparsing, Geographic Information System (GIS), Named Entity Recognition (NER), Entity Extraction and Linking, Cross-Lingual Information Retrieval, Text Summarization, Semantic Search, Knowledge Graph, Sentiment Analysis, and Retrieval Augmented Generation (RAG).

Sources and 2026 References

- Nature (2024): Scaling neural machine translation to 200 languages

- COLING 2025: From Priest to Doctor: Domain Adaptation for Low-Resource Neural Machine Translation

- ACL 2025: Cultural Learning-Based Culture Adaptation of Language Models

- Machine Translation Summit 2025: The Challenge of Translating Culture-Specific Items: Evaluating MT and LLMs Compared to Human Translators

- LREC-COLING 2024: Automatic Construction of a Large-Scale Corpus for Geoparsing Using Wikipedia Hyperlinks

- The GDELT Project

- GDELT Project: GDELT 2.0 - Our Global World in Realtime

- VIEWS: Prediction Challenge 2023/2024

- ACLED: CAST endpoint documentation

- ACL 2023 Workshop on Balto-Slavic NLP: Named Entity Recognition for Low-Resource Languages - Profiting from Language Families

- mCL-NER: Cross-Lingual Named Entity Recognition via Multi-view Contrastive Learning

- Remote Sensing (2025): Towards High-Resolution Population Mapping: Leveraging Open Data, Remote Sensing, and AI for Geospatial Analysis in Developing Country Cities - A Case Study of Bangkok

- Communications Earth & Environment (2025): An open-source tool for mapping war destruction at scale in Ukraine using Sentinel-1 time series

- EMNLP 2024: GlobeSumm: A Challenging Benchmark Towards Unifying Multi-lingual, Cross-lingual and Multi-document News Summarization

- NAACL 2025: A Mixed-Language Multi-Document News Summarization Dataset and a Graphs-Based Extract-Generate Model

- MRL 2025: CLIRudit: Cross-Lingual Information Retrieval of Scientific Documents

- COLING 2025: Cross-Dialect Information Retrieval: Information Access in Low-Resource and High-Variance Languages

- W3C (2025): Internationalization Best Practices for Spec Developers

- User Modeling and User-Adapted Interaction (2024): AdaptUI: A Framework for the development of Adaptive User Interfaces in Smart Product-Service Systems

- Findings of ACL 2025: LEMONADE: A Large Multilingual Expert-Annotated Abstractive Event Dataset for the Real World

- CASE 2023: Detecting and Geocoding Battle Events from Social Media Messages on the Russo-Ukrainian War

- EAMT 2024: Multilinguality in the VIGILANT project

- SemEval 2025 Task 7: Multilingual and Crosslingual Fact-Checked Claim Retrieval

- SemEval 2025 Task 10: Multilingual Characterization and Extraction of Narratives from Online News

- Nature Communications (2025): Early warning of complex climate risk with integrated artificial intelligence

- WMO: Early Warnings for All

- PolicySimEval (2025): A Benchmark for Evaluating Policy Outcomes through Agent-Based Simulation

- NAACL 2025: Specializing Large Language Models to Simulate Survey Response Distributions for Global Populations

- COLING 2025: Language Adaptation of Large Language Models: An Empirical Study on LLaMA2

- Findings of EMNLP 2025: Leveraging High-Resource English Corpora for Cross-lingual Domain Adaptation in Low-Resource Japanese Medicine via Continued Pre-training

- arXiv (2024): Domain-adaptative Continual Learning for Low-resource Tasks: Evaluation on Nepali
