Text-to-image AI no longer feels like a novelty where you type a sentence, get four surprising pictures, and stop there. As of March 15, 2026, the category is better understood as a set of visual systems: some tuned for striking aesthetics, some for precise editing, some for developer APIs, and some for enterprise workflows where provenance and rights matter as much as image quality. That is a bigger shift than another benchmark win or model-name upgrade.

The older shorthand of "Stable Diffusion versus Midjourney" still explains an important chapter, but it no longer explains the whole market. Midjourney moved deeper into a web-based creative surface. OpenAI folded image generation into a chat-and-API workflow. Black Forest Labs pushed FLUX into a strong developer ecosystem with editing-native models. Adobe turned Firefly into a rights-aware production layer that also brokers partner models. Google expanded Imagen through Gemini and AI Studio. Stability AI kept building for deployable, controllable generation and editing. The field is still moving fast, but it is finally stable enough to map more clearly.

From Diffusion Breakthrough to Product Stack

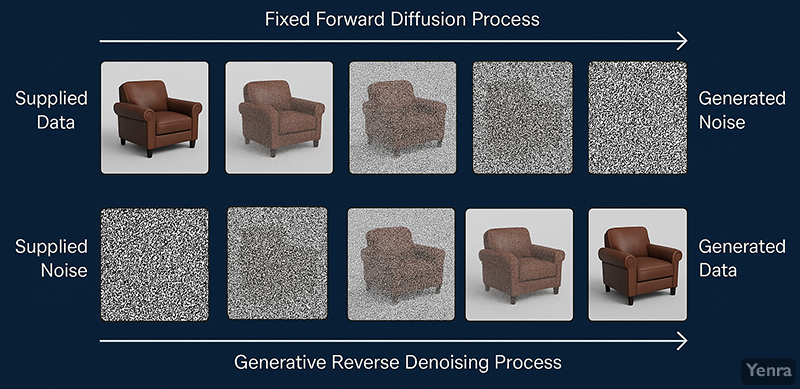

The core technical breakthrough still matters. Modern text-to-image systems were unlocked by diffusion models, which learn to reverse a gradual noising process, and by latent diffusion, which made that process far more practical by generating in a compressed latent space rather than directly in pixels. That combination made high-quality image generation economically usable instead of merely impressive in research papers.

But a strong 2026 product is not just a diffusion model with a prompt box. The useful systems now combine several layers at once: text understanding, image conditioning, local editing, reference-image control, better text rendering, moderation, provenance metadata, and interfaces that support iteration instead of one-shot luck. Even when vendors keep architecture details private, the visible direction is clear. The category is moving from "generate me something cool" to "help me make and revise exactly this."

The Field on March 15, 2026

Broadly, the market now separates into aesthetic-first consumer tools, chat-native editors, open or developer-oriented model ecosystems, creative-suite workflow products, and cloud-platform image services. The major players overlap, but each leans hardest into a different use case.

Midjourney

Midjourney remains the clearest example of an aesthetic-first image tool. Version 7 was released on April 3, 2025 and became the default model on June 17, 2025. Its official updates and docs emphasize better prompt understanding, stronger coherence, and a more capable web workflow rather than just another raw-quality bump. Omni Reference lets users bring a person, object, or creature from a reference image into a new scene, while Draft Mode trades some fidelity for fast, cheap iteration at roughly 10 times the speed of standard generation. That combination explains Midjourney's current niche: it is still one of the best places to explore visual taste quickly, especially when a creator wants striking output without building a full production pipeline.

OpenAI

OpenAI's image work is no longer best understood through the older DALL-E branding alone. On April 23, 2025, OpenAI launched gpt-image-1 in the API, bringing the same multimodal image-generation system used in ChatGPT into developer workflows. On December 16, 2025, OpenAI introduced the new ChatGPT Images experience and made the improved model available in the API as gpt-image-1.5. The official release framing is not only about prettier images. It is about precise editing, better preservation of identity and composition across revisions, improved dense text rendering, and faster generation. In other words, OpenAI's strongest move in this category has been to make image generation feel like part of a general-purpose multimodal assistant rather than a separate toy.

Black Forest Labs and FLUX

Black Forest Labs helped define the developer-facing side of the market after the first Stable Diffusion wave. FLUX1.1 [pro] pushed strong prompt adherence and fast generation, while newer FLUX.1 Kontext models made the more interesting strategic move: they combined text-to-image generation with advanced image editing in one system. Official Kontext docs highlight character consistency, text editing, style transformation, and iterative edits from an existing image, while Kontext [dev] keeps a local-development path open for teams that want more control than a closed consumer product provides. That is why FLUX matters beyond headline image quality. It sits at the intersection of good outputs, practical editing, and deployment flexibility.

Adobe Firefly

Adobe's position is different again. Firefly Image Model 4 and Firefly Image Model 4 Ultra, announced on April 24, 2025, were framed around realism, controllability, and commercial safety, with up to 2K output and stronger control over structure, style, camera angle, and zoom. But the bigger change is that Firefly has become an orchestration layer as much as a model family. Adobe's 2025 and early-2026 materials show Firefly offering both Adobe models and partner models, including OpenAI, Google, and Black Forest Labs. That makes Firefly less like a single-model contest entry and more like a creative operating surface built for moodboarding, app integration, and traceable production. Its distinct value is not that it wins every taste contest; it is that it was designed for real creative organizations that care about workflow, rights posture, and Content Credentials.

Google Imagen

Google's current image story centers on Imagen 4. The company introduced Imagen 4 in paid preview for the Gemini API and Google AI Studio on June 24, 2025, then announced general availability of the Imagen 4 family, including Imagen 4 Fast and Imagen 4 Ultra, on August 15, 2025. Google's own materials emphasize improved text rendering, up to 2K resolution, and a choice between speed, quality, and tighter prompt adherence. DeepMind's current Imagen page also highlights invisible watermarking through SynthID. That makes Imagen especially important at the platform level. It is not just a model in a lab demo or one consumer app feature; it is a cloud-native image stack embedded in Gemini, AI Studio, and the wider Google ecosystem.

Stability AI

Stability AI no longer owns the public conversation the way it did during the original Stable Diffusion moment, but it still matters because so much of the open and deployable image ecosystem runs through its lineage. By late 2025, Stability AI had positioned Stable Diffusion 3.5 Large, Stable Image Ultra, and Stable Image Core as enterprise-ready services on Amazon Bedrock and Azure AI Foundry. On September 18, 2025, the company also emphasized end-to-end image editing through Bedrock-based Image Services such as inpainting, object removal, recoloring, and background removal. That suggests a more grounded role for Stability in 2026: not necessarily the most culturally glamorous image product, but a major provider of controllable generation and editing components for builders.

What Matters Now More Than Prompt Engineering

Prompt engineering still matters, but it no longer carries the whole category. The biggest practical gains now come from everything around the prompt: reference images, character or object consistency, selective edits, better typography, faster draft cycles, and the ability to keep revising without destroying what already works. A creator making ad variants, product scenes, storyboards, or concept art usually does not want infinite novelty. They want controlled variation.

That is why the newest product language across the field sounds different from the early era. Midjourney talks about Draft Mode and Omni Reference. OpenAI emphasizes precise edits and preservation across revisions. FLUX Kontext centers image editing and text editing. Stability AI talks about Image Services. Adobe leans into Boards, partner models, and Content Credentials. Google packages Imagen into a family with Fast and Ultra variants. The market is converging on the same idea from different directions: generation is valuable, but editable generation is where serious work begins.

What Still Breaks

Even the best current systems remain uneven on demanding compositions. Small faces, thin structures, dense text, and exact spatial relationships can still break down. Google's own Imagen materials openly note continuing issues with complicated compositions, small faces, text rendering, and centered layouts. Midjourney's docs also note that some workflows still fall back to older model paths while V7-era features continue to fill in. Black Forest Labs and Stability AI both devote significant documentation to best practices because the models are powerful, not magically reliable.

The legal and authorship questions are also far from settled. "Commercially safe" and "usable under platform terms" are not the same thing as universally risk-free or clearly copyrightable. The U.S. Copyright Office's Part 2 report, released on January 29, 2025, reaffirmed that copyright protection still turns on human authorship. That means a user may have contractual rights to use an output while still facing uncertainty about how strongly that output is protected as a copyrighted work. Provenance systems such as Content Credentials, C2PA metadata, and SynthID help with labeling and traceability, but they do not resolve every ownership or training-data dispute.

There is also a social risk that becomes easier to ignore as tools improve: image editing has become more realistic, more local, and more accessible. That is useful for benign creative work, but it also lowers the barrier for deceptive or manipulative synthetic media. The stronger the editing tools become, the more important policy, disclosure, and product safeguards become alongside model quality.

From Prompt Box to Visual System

The old question was whether AI could turn words into a convincing image. That question has largely been answered. The harder question in 2026 is which systems can support repeatable creative work rather than occasional spectacle. On that front, the field is no longer one race with one winner. It is a layered market.

Seen together, the current landscape is fairly legible. Midjourney is the aesthetic sketchbook. OpenAI is the chat-native editing studio. Adobe is the rights-aware creative surface. Google is the cloud platform play. FLUX and Stability AI are the strongest reminders that developer control and deployable workflows still matter. Text-to-image generation has not stopped being impressive, but its most important evolution is that it is becoming infrastructure for visual creation rather than a standalone trick.

Sources

- Jonathan Ho, Ajay Jain, and Pieter Abbeel, "Denoising Diffusion Probabilistic Models" (2020) - the foundational diffusion-model paper behind the current wave.

- Robin Rombach et al., "High-Resolution Image Synthesis with Latent Diffusion Models" (2022) - the key paper behind the latent-diffusion breakthrough.

- Lvmin Zhang et al., "Adding Conditional Control to Text-to-Image Diffusion Models" (ControlNet, 2023) - a useful reference for the shift toward controllable image generation.

- Midjourney Docs, "Version" - official release timing and feature summary for Midjourney V7.

- Midjourney Updates, "V7 is now the default model!" (June 17, 2025) - the clearest short statement of Midjourney's 2025 default-model shift.

- Midjourney Docs, "Draft & Conversational Modes" - official details on fast iteration and conversational prompting.

- Midjourney Docs, "Omni Reference" - Midjourney's official reference-image feature for V7.

- OpenAI, "Introducing our latest image generation model in the API" (April 23, 2025) - the launch of

gpt-image-1and OpenAI's API-side framing. - OpenAI, "The new ChatGPT Images is here" (December 16, 2025) - the clearest official summary of GPT Image 1.5 improvements.

- Black Forest Labs Docs, "Introduction" - official overview of FLUX.1 Kontext [pro], [max], and [dev].

- Black Forest Labs Docs, "Image Editing" - direct documentation of FLUX Kontext editing, text replacement, and iterative consistency.

- Adobe, "Adobe Revolutionizes AI-Assisted Creativity with Firefly..." (April 24, 2025) - Adobe's main Firefly Image Model 4 and 4 Ultra announcement.

- Adobe Help, "Generate images using partner models" (updated February 27, 2026) - the clearest view of Firefly as a multi-model creative surface.

- Google Developers Blog, "Announcing Imagen 4 Fast and the general availability of the Imagen 4 family in the Gemini API" (August 15, 2025) - Google's official GA announcement for Imagen 4, Fast, and Ultra.

- Google DeepMind, "Imagen" - the current public overview of Imagen 4 capabilities, limitations, and SynthID.

- Stability AI, "Stable Diffusion 3.5 Large is Now Available on Amazon Bedrock" (December 19, 2025) - Stability AI's official positioning of SD3.5 Large, Stable Image Ultra, and Stable Image Core.

- Stability AI, "Stability AI Brings Image Services to Amazon Bedrock..." (September 18, 2025) - a useful official snapshot of Stability's editing-first enterprise direction.

- U.S. Copyright Office, "Copyright and Artificial Intelligence, Part 2: Copyrightability" (January 29, 2025) - the clearest official statement on human authorship and AI-generated works.

Related Yenra Articles

- Consistent Character focuses on one of the hardest practical problems in image generation: keeping a subject stable across many outputs.

- New Lens looks more broadly at how AI changes artistic collaboration rather than any one model family.

- Predictive Evolution of LLMs provides the technical backdrop for why multimodal systems now shape image tools as well as language tools.

- Artistic Creation Tools zooms out from text-to-image to the wider ecosystem of AI-assisted creative software.