OpenAI’s first five years compressed many of the themes that still define AI today: idealism about broad benefit, intense competition for talent, breakthroughs driven by scale, and a growing tension between openness and safety. Looking closely at 2015 through 2020 helps explain not just how OpenAI grew, but how the modern AI industry took shape around it.

The Genesis of an Idea

Late one summer evening in 2015, a small group of tech leaders gathered for dinner at the Rosewood Hotel on Sand Hill Road. Among them were entrepreneur Elon Musk, then–Y Combinator president Sam Altman, and deep-learning researcher Ilya Sutskever. Musk and Altman believed the world needed a new kind of AI research lab – one dedicated not to private gain or secretive military projects, but to ensuring artificial intelligence benefits all of humanity. Over dinner, they spoke openly about the future of AI and whether companies like Google and DeepMind were already building an overwhelming lead. Musk floated the idea of creating a lab to serve as a “counterbalance” to those AI giants. No formal recruiting pitch was made that night, but the idea stuck with Sutskever and helped set OpenAI in motion.

The timing was no accident. The AI landscape in 2015 was in ferment. In the preceding few years, deep learning – neural networks trained on large datasets – had begun to deliver startling breakthroughs. Algorithms were suddenly recognizing images, translating languages, and mastering video games at superhuman levels. A landmark 2012 result by Hinton, Krizhevsky and Sutskever (“AlexNet”) had blown the field wide open, triggering a deep learning revolution in computer vision. Tech giants raced to snap up talent: Google acquired the London startup DeepMind in 2014, and corporate research labs (Google Brain, Facebook AI Research, etc.) were investing heavily. Musk, for one, was growing uneasy. Ever outspoken about the risks of uncontrolled AI, he had publicly warned that developing advanced AI was like “summoning the demon.” He and others feared a scenario where a few big corporations (or governments) had disproportionate control over AGI (artificial general intelligence). If AI was to become as powerful as its proponents believed, they wanted its development to be guided by a broad concern for humanity’s welfare – not just profit or strategic advantage.

It was this mix of excitement and anxiety that gave birth to OpenAI. In the months after the Rosewood meeting, Altman and Musk, along with former Stripe CTO Greg Brockman, began quietly rallying support for an independent AI lab. Brockman had been introduced to Altman earlier that year through Y Combinator’s network. The idea of building safe human-level AI in a project devoted to humanity’s benefit resonated strongly. “Elon and Sam had a crisp vision of building safe A.I. dedicated to benefiting humanity,” Brockman later said. Brockman was convinced enough to leave Stripe and help turn that vision into an operating lab.

Throughout late 2015, Brockman worked feverishly to recruit a founding team. The fledgling lab needed world-class researchers if it was to be taken seriously. The first target was obvious: Ilya Sutskever. Widely regarded as one of the brightest minds in AI, Sutskever had co-authored key papers that ignited the deep learning boom. Brockman cold-emailed him that summer and invited him to the pivotal Rosewood dinner. Shortly thereafter, Brockman and Sutskever met one-on-one in Mountain View. Despite having just met, they clicked. “Ilya is best described as an artist who expresses himself through machine learning,” Brockman wrote, noting that though they came from different worlds – academic research vs. software engineering – they were impressed with each other’s accomplishments and “wanted to learn from each other”. Sutskever believed that top researchers would be drawn to an organization “dedicated to making the best outcome for the world,” while Brockman felt that combining the resources of industry with the open culture of academia could solve otherwise intractable problems. Both were alarmed by the prospect of an AI arms race. “Without intervention, AI will play out like self-driving cars,” Brockman argued – a cooperative start followed by a competitive race once its economic value was clear. But human-level AI, they knew, would be a “transformative technology unlike any other, with unique risks and benefits.” Here, they saw an opportunity to keep the field cooperative – to bring together the best minds to make a responsible attempt at the most important technological breakthrough in history.

By December 2015, the pieces were in place. Musk and Altman had secured $1 billion in committed funding from a who’s-who of Silicon Valley: Reid Hoffman, Peter Thiel, Jessica Livingston, Amazon Web Services, Infosys, and YC Research all pledged support alongside Musk and Altman themselves. (As Altman later clarified, they didn’t plan to spend $1B right away – it was a war chest for the long term.) Sutskever agreed to leave Google to become Research Director, and Brockman would serve as CTO. They assembled a founding team of nine researchers and engineers that blended academic talent and industry savvy. Alongside Sutskever came John Schulman, a freshly minted PhD from Berkeley and an expert in reinforcement learning, who “immediately knew” that an institute combining “the openness and mission of academia with the resources of private industry” was exactly what he wanted to join. Wojciech Zaremba, a Polish PhD student at NYU, signed on despite “borderline crazy” offers from elsewhere – tech giants were bidding two or three times market rate in an eleventh-hour scramble to keep such talent from defecting. (He turned them down; mission mattered more than money.) Rounding out the group were Andrej Karpathy (a Stanford computer vision whiz), Diederik “Durk” Kingma (co-inventor of the variational autoencoder and the Adam optimizer), Trevor Blackwell (a robotics expert and YC partner), Vicki Cheung (an accomplished engineer), and Pamela Vagata (an experienced infrastructure engineer). OpenAI’s founding roster was impressive – and notably, nearly all of them had given up lucrative jobs or offers to join a non-profit startup with no product and purely idealistic goals.

In early December 2015, OpenAI was officially announced to the world. On December 11, Altman, Musk and the team unveiled OpenAI’s formation at the Neural Information Processing Systems (NeurIPS) conference in Montreal – timing it for maximum impact. (In fact, so many rival firms tried to hire away OpenAI’s people during the conference that the announcement had to be delayed until the end of the week, once all commitments were firm.) In a blog post titled “Introducing OpenAI,” the founders laid out an uncompromising mission. “OpenAI is a non-profit artificial intelligence research company,” it began. “Our goal is to advance digital intelligence in the way that is most likely to benefit humanity as a whole, unconstrained by a need to generate financial return.” Free from profit motives, they argued, the organization would be better positioned to focus on “a positive human impact.” They openly worried about both the promise and perils of AI: human-level AI could benefit society immensely, but it was “equally hard to imagine how much it could damage society if built or used incorrectly,” the post warned. Given AI’s unpredictable leaps, it was important to have an institution “which can prioritize a good outcome for all over its own self-interest” when the day of advanced AI arrived. OpenAI, they declared, aimed to become “such an institution.”

The Founding Ethos – Openness, Cooperation, and Safety

From the outset, OpenAI wrapped itself in a mantle of idealism. The ethos articulated by its founders was radically different from the typical Silicon Valley startup or secretive corporate lab. OpenAI pledged to share its research openly with the world. “Researchers will be strongly encouraged to publish their work, whether as papers, blog posts, or code,” the founding blog post promised, “and our patents (if any) will be shared with the world.” This commitment to openness was baked into the organization’s very name. The creators believed that AI should be an extension of individual human wills and, in the spirit of liberty, as broadly and evenly distributed as possible. In other words, if AI was to be the most transformative technology of our time, its benefits should not accrue only to a select few. By openly sharing progress, OpenAI hoped to prevent a scenario where powerful AI systems were the exclusive province of a single company or government.

This principle of openness was intertwined with a commitment to safety and ethical development. Altman and Musk often framed OpenAI’s mission in terms of preventing AI dystopias. In interviews around the launch, they emphasized making AI safer by democratizing it. When a Wired reporter asked why releasing AI discoveries to everyone wouldn’t simply empower bad actors, Altman responded that he had faith in humanity: most people are good, he reasoned, and if everyone has access to advanced AI, the few who might misuse it would be overwhelmed by the vastly greater number using it for good. (Musk acknowledged it was a “good question,” but Altman’s optimism carried the day.) This outlook – cautious optimism about the fundamental goodness of people – guided OpenAI’s early approach to sharing. It was a calculated bet that transparency, not secrecy, would maximize the chances of AI doing more good than harm.

Another founding ideal was the notion of cooperation over competition. OpenAI’s creators were deeply influenced by the history of research labs like Xerox PARC, which had pioneered personal computing in an open, collegial atmosphere. In fact, in the run-up to OpenAI’s launch, Brockman and Sutskever had dinner with computing legend Alan Kay (of Xerox PARC fame). Kay regaled them with stories of “living in the future” – how his team built the Alto system by using technology that was a decade ahead of its time. After the meal, an awestruck Ilya Sutskever confessed, “I only understood 50% of what he said, but it was all so inspiring.” For the young OpenAI leaders, PARC’s example helped validate their own hypotheses about creating an impactful organization that blended research and engineering. They envisioned a lab where PhDs and master coders worked side by side, undistracted by bureaucracy or commercial deadlines.

Indeed, OpenAI explicitly sought to marry the culture of academia with the intensity of a startup. “We saw an opportunity to gather many of the best researchers to make a responsible attempt at [AGI],” Brockman wrote, by “combining the openness and mission of academia with the resources of private industry.” In practice, that meant recruiting top talent with the promise of academic freedom (publish openly, pursue long-term research) but providing them industry-level support – powerful computing resources, significant funding, and a nimble engineering team to turn ideas into results. In late 2015, OpenAI formalized this hybrid model by structuring as a non-profit research lab. As a non-profit, their “aim is to build value for everyone rather than shareholders,” they announced, stressing that benefiting humanity writ large was the north star. The lab’s Charter, published a few years later in 2018, further codified these principles: it spoke about avoiding AI arms races, cooperating with any entity that achieved a breakthrough that could lead to AGI, and stopping work on any project that went against the broad benefit of humanity. While no one knew how the future would unfold, the founders wanted OpenAI’s values clearly established from day one.

Crucially, safety was not just a talking point but an active area of research. From the beginning, OpenAI invested in exploring how to align AI systems with human values. They hired researchers (like Paul Christiano and Dario Amodei a bit later) to work on AI safety and alignment strategies. This meant that even as some team members built powerful algorithms, others probed how to detect and mitigate bias, or how to have AI systems learn from human feedback to ensure they behaved in beneficial ways. The idea of “shared benefit” was front and center: if they ever created an AGI, OpenAI pledged, it would be made available to the world and its advantages shared evenly. In Altman’s and Musk’s view, the only acceptable outcome was one where everyone could benefit from AI advances – a direct response to the concentration of AI power in a few big tech companies. Musk explicitly saw OpenAI as a check on Google’s dominance in AI talent and resources. He had been alarmed by Google’s acquisition of DeepMind and wanted to “challenge [Google’s] monopoly on A.I. talent” by creating a compelling alternative path for researchers.

All these lofty ideals – openness, safety, cooperation, global benefit – set a tone that attracted talented people who believed in the cause. “I wanted to do research in AI, and I thought OpenAI was ambitious in its mission and was already thinking about AGI,” John Schulman said of why he joined the founding team. That sentiment was likely shared by many of his colleagues. They weren’t just building another product or chasing academic prestige; they genuinely saw themselves as guardians of the future, trying to ensure AI would be more Asimov’s “positronic” helper and less Skynet. It was an audacious experiment: could a small independent lab really compete with the Googles and Facebooks – and do so while giving away its secrets for the common good?

Inside the Early Lab: “A Startup Academic Department”

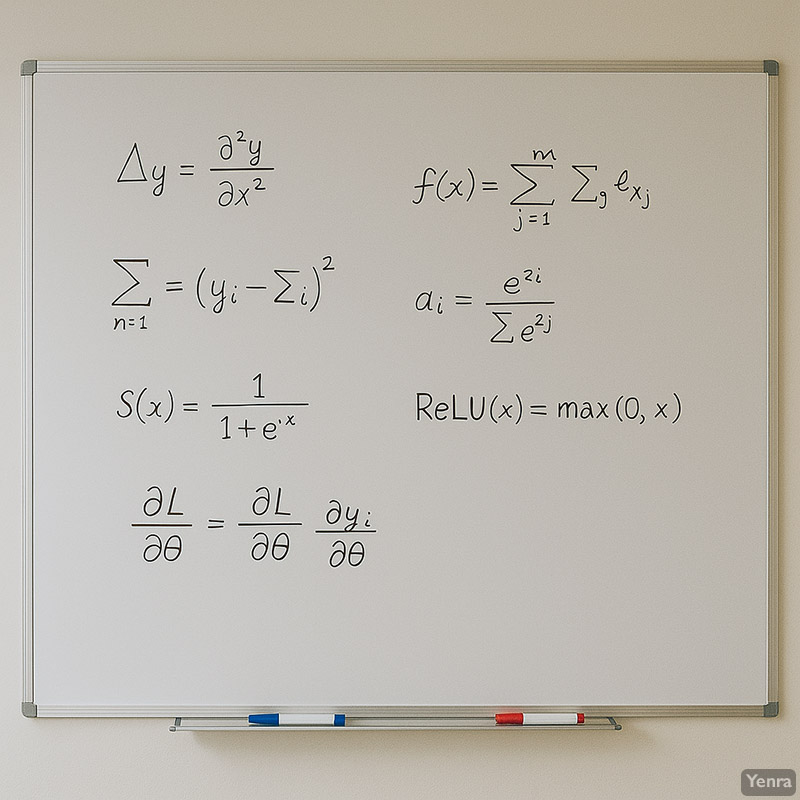

On January 4, 2016, the OpenAI team gathered for their first official day of work – not in a shiny lab, but in Greg Brockman’s modest San Francisco apartment. This makeshift office had barely enough room for everyone’s laptops, and, as it turned out, no whiteboard. During a discussion that first day, Sutskever and Schulman turned to scribble down an idea and found only blank walls. Brockman hurried out and came back with a large whiteboard and markers. Problem solved. In many ways, that scene captured the scrappy spirit of OpenAI’s early culture: they were figuring things out as they went, improvising solutions with whatever was at hand, and doing it with a sense of excitement and humor. (A photo from those early weeks shows a handful of team members sprawled on couches and chairs in Brockman’s living room, laptops open, the newly acquired whiteboard already filled with notes in the corner.)

They were nine people with a moonshot mission, crammed into an apartment with no settled playbook for how to create human-level AI. The working assumption was that putting strong researchers and engineers in the same room would generate new ideas quickly. The lack of a playbook bred a kind of creative chaos. Researchers pursued a grab-bag of projects: teaching agents to play video games, training robots to grasp objects, building generative models that made pixelated images – whatever seemed promising enough to try. At times little worked. Brockman later described 2016 as a period of trial and error where progress was elusive. But the team had intellectual freedom and a long runway, which made OpenAI feel more like a research playground than a corporate office. Musk and Altman checked in regularly, but there was no immediate pressure to ship a product or turn a profit. The mandate was simply to do important research.

Amid this free-form exploration, a few norms and practices took shape. The team held daily reading groups to stay up-to-date on the latest AI papers. These sessions often sparked new ideas or debates. Engineering and research were considered equally important – a core part of OpenAI’s philosophy. Unlike some academic labs where software engineers are second-class citizens, OpenAI treated its engineers as peers to the PhD researchers. Brockman, the CTO, set the tone: one moment he’d be ordering office supplies or setting up servers, the next he’d be knee-deep in coding a new tool for the team. “Technical leadership should call the shots while still doing hands-on technical work,” he believed. For January 2016, Brockman’s self-assigned job was to “remove all non-research tasks from Ilya’s plate” – channeling the “surgical team” idea from The Mythical Man-Month, in which supporting roles absorb every distraction so the lead can focus purely on the core work. So while Sutskever dove into high-level strategy, Brockman and Vicki Cheung scrambled to build the basic infrastructure: procuring servers, setting up a Kubernetes cluster for experiments, creating Google accounts, and generally spinning up the new lab’s tech stack. In those first weeks, Brockman even pored over Ian Goodfellow’s Deep Learning textbook cover to cover – partly to educate himself (his background was in software engineering, not ML research) and partly as a clever recruiting ploy (Goodfellow was impressed by Brockman’s copious notes and soon joined OpenAI himself).

The atmosphere in the apartment-office was intense but exhilarating. The team often worked from morning to late night, fueled by enthusiasm (and plenty of takeout). “These are the times I feel most alive,” Brockman wrote of all-night coding sprints, describing how “coding feels like magic made real” when you can focus from wake until sleep and see ideas become reality. One early priority was building common tools to accelerate everyone’s research. For example, the idea for OpenAI Gym – a standardized toolkit for reinforcement learning – came from Wojciech Zaremba during one of their brainstorming sessions. At the time, everyone was writing their own ad-hoc code to test AI agents in games and tasks. Zaremba suggested creating a library of environments (like Atari games, simulations, robotic tasks) so the whole field could benchmark algorithms more easily. Schulman and Brockman agreed it would be useful, but initially thought it might take the better part of a year to polish Gym for public release. Brockman decided to tackle it head-on: he effectively locked himself in coding mode, adopting a “single-minded focus” strategy he’d honed at Stripe – working around the clock and inspiring others to chip in. Ilya took over some of Brockman’s managerial duties so Greg could hack on the Gym code full-time. Within a few intense weeks – much faster than anyone expected – they had a working version. In April 2016, OpenAI launched Gym to the public as one of its first major open-source contributions. It was a splashy debut: researchers worldwide applauded the tiny lab for giving away a tool that made everyone’s experiments easier.
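To make the idea of a shared environment library concrete, here is a minimal sketch of the reset/step loop that a standardized reinforcement learning toolkit lets every researcher reuse. It deliberately does not import Gym itself. The toy task and the names `GuessNumberEnv`, `BinarySearchAgent`, and `run_episode` are hypothetical, invented only for illustration.

```python
import random
from typing import Tuple


class GuessNumberEnv:
    """Hypothetical toy environment: guess a hidden integer in [0, 9]."""

    def __init__(self, max_steps: int = 10, seed: int = 0) -> None:
        self._rng = random.Random(seed)
        self._max_steps = max_steps
        self._target = 0
        self._steps = 0

    def reset(self) -> int:
        """Start a new episode and return the initial observation (0 = no hint)."""
        self._target = self._rng.randint(0, 9)
        self._steps = 0
        return 0

    def step(self, action: int) -> Tuple[int, float, bool, dict]:
        """Apply a guess and return (observation, reward, done, info)."""
        self._steps += 1
        if action == self._target:
            return 0, 1.0, True, {}
        # Hint: +1 means the target is higher than the guess, -1 means lower.
        hint = 1 if action < self._target else -1
        done = self._steps >= self._max_steps
        return hint, 0.0, done, {}


class BinarySearchAgent:
    """Hypothetical agent that narrows its guess range using the hints."""

    def __init__(self) -> None:
        self.low, self.high = 0, 9
        self.last_guess = None

    def act(self, observation: int) -> int:
        if self.last_guess is not None:
            if observation == 1:
                self.low = self.last_guess + 1
            elif observation == -1:
                self.high = self.last_guess - 1
        self.last_guess = (self.low + self.high) // 2
        return self.last_guess


def run_episode(env: GuessNumberEnv, agent: BinarySearchAgent) -> float:
    """Generic loop: works for any env/agent pair with the same interface."""
    observation = env.reset()
    total_reward, done = 0.0, False
    while not done:
        action = agent.act(observation)
        observation, reward, done, _info = env.step(action)
        total_reward += reward
    return total_reward


if __name__ == "__main__":
    print(run_episode(GuessNumberEnv(seed=1), BinarySearchAgent()))
```

Because `run_episode` depends only on `reset` and `step`, the same loop can score different agents on different tasks, which is the benchmarking convenience the paragraph above describes.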

With Gym out, the team ambitiously moved to an even bigger project: Universe, a software platform to train AI agents on any task you could do with a computer – from playing Flash games to navigating websites. The concept, pitched by Andrej Karpathy during an offsite retreat in Napa Valley, was to create a “virtual playground” of thousands of diverse tasks. If an AI could learn its way around the Universe, maybe it would be a step toward general intelligence. In the fall of 2016, OpenAI’s entire crew rallied to build and release Universe. At a group offsite that October – the first time all the new hires met together in person – the energy was electric. “Everyone clicked in a really visceral way, and the ideas and vision were flowing,” Brockman said of that retreat. (It was during a van ride back from a day of hiking among Napa’s vineyards that Karpathy floated the idea for Universe. Stuck in traffic on a winding road, the team barely noticed the delay because they were so deep in conversation about the future of AI.) A photo from the offsite shows a dozen of them strolling down a country lane at dusk, engaged in animated discussion, with the golden hills of California in the background – a picture of collegial idealism.

As 2016 turned into 2017, OpenAI moved out of Brockman’s apartment into a real office in San Francisco. But the startup scrappiness and academic vibe remained. The day-to-day rhythm was still defined by whiteboards, experiments, and argument: long stretches of trial and error punctuated by sharp debates over what to pursue next. What made the lab unusual was less any one office detail than the operating model itself: researchers with unusual freedom, engineers treated as peers, and leadership trying to clear away distractions so technical work could compound quickly.

Despite the lack of strict hierarchy, certain personalities emerged as informal leaders. Sutskever, though shy and soft-spoken, was the intellectual compass – if Ilya was excited about an idea, everyone paid attention. Brockman was the operator, doing whatever was needed to keep momentum. Others like Dario Amodei took on project leadership around safety and larger language-model efforts. Meanwhile, Vicki Cheung and Jonas Schneider helped build the cloud infrastructure that let researchers work at increasing scale, and Jack Clark translated the lab’s work for the outside world while pushing internally on strategy and ethics. OpenAI hired from eclectic backgrounds and leaned into that diversity of thought as part of its culture.

Breakthrough Moments: From Games and Robots to Language

In its first few years, OpenAI’s explorations began to coalesce into a few domains where they would make landmark advances. The lab’s breakthrough moments spanned different facets of AI – from teaching agents to play complex games, to training robots that manipulate the real world, to pioneering the era of large-scale language models. Each success was hard-won, often following many failures, and each left the team both exhilarated and humbled by what they were learning.

One of the earliest public triumphs came in August 2017 at The International, the premier Dota 2 tournament in Seattle. OpenAI’s bot defeated pro player Dendi in a live best-of-three 1-vs-1 match under standard tournament rules. In its announcement, OpenAI said the system had learned entirely through self-play, without imitation learning or tree search, and framed the result as a step toward AI systems that can pursue goals in “messy, complicated situations involving real humans.” The milestone mattered because Dota 2 combined hidden information, fast reactions, and strategic deception in a way that felt much closer to the real world than earlier benchmark games.

OpenAI then extended the project to 5-vs-5 full Dota 2, creating a team of agents called OpenAI Five. In April 2019, OpenAI Five played reigning world champion team OG at the event it called OpenAI Five Finals. OpenAI later summarized the milestone more precisely in its technical write-up: after 10 months of training, OpenAI Five became “the first AI system to defeat the world champions at an esports game.” The significance was less the spectacle than the technical lesson. Large-scale self-play reinforcement learning could handle long time horizons, imperfect information, and real-time multi-agent coordination at a level that had seemed out of reach only a few years earlier.

While some of the team focused on virtual games, another contingent tackled the physical world through robotics. OpenAI’s robotics group, led in part by Wojciech Zaremba in the early days, believed that general-purpose robots were a crucial stepping stone to AGI. Humans can use their hands with remarkable dexterity to solve myriad tasks; could an AI-controlled robot hand learn to solve new tasks without hard-coding? In 2017, the robotics team set an audacious goal: teach a five-fingered robotic hand to solve a Rubik’s Cube. This would require fine motor skills, planning, and extreme robustness to disturbances – something no robot had ever done. For years, progress was slow. By mid-2018, the best they’d achieved in reality was getting the robot hand to dexterously manipulate a simple block. But they pressed on. Their approach was to train neural networks entirely in simulation (where they could run thousands of experiences in parallel) and then transfer that knowledge to the real robot – a method requiring a technique called Automatic Domain Randomization to ensure the AI could handle the noise and chaos of the physical world.
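The general idea behind randomizing a simulator, and widening that randomization automatically as the policy improves, can be sketched in a few lines. This is a simplified illustration under stated assumptions, not OpenAI's implementation: the parameter name, the success threshold, the step size `delta`, and the helper names (`ParamRange`, `adapt_ranges`, `sample_sim_params`) are all hypothetical.

```python
import random
from dataclasses import dataclass
from typing import Dict


@dataclass
class ParamRange:
    """Interval from which one simulator parameter is sampled each episode."""
    low: float
    high: float
    floor: float = 0.0  # physical quantities such as friction stay non-negative

    def sample(self, rng: random.Random) -> float:
        return rng.uniform(self.low, self.high)

    def widen(self, delta: float) -> None:
        self.low = max(self.floor, self.low - delta)
        self.high = self.high + delta


def sample_sim_params(ranges: Dict[str, ParamRange],
                      rng: random.Random) -> Dict[str, float]:
    """Draw one randomized simulator configuration."""
    return {name: r.sample(rng) for name, r in ranges.items()}


def adapt_ranges(ranges: Dict[str, ParamRange], success_rate: float,
                 threshold: float = 0.8, delta: float = 0.05) -> None:
    """Widen every range once the policy succeeds often enough (assumed rule)."""
    if success_rate >= threshold:
        for r in ranges.values():
            r.widen(delta)


if __name__ == "__main__":
    rng = random.Random(0)
    # Start with no randomization: a single fixed value for the parameter.
    ranges = {"friction": ParamRange(1.0, 1.0)}
    adapt_ranges(ranges, success_rate=0.9)  # policy is doing well, so widen
    print(ranges["friction"], sample_sim_params(ranges, rng))
```

A policy trained across ever-wider ranges never sees one exact simulator twice, which is the intuition for why it might tolerate the mismatch between simulation and a real robot.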

Finally, in October 2019, OpenAI announced success: their system had solved the Rubik’s Cube with a single robotic hand. A striking demonstration video showed the black robotic hand twirling the cube’s faces and eventually holding up a solved cube. The feat grabbed headlines globally – it was exactly the kind of flashy result that ignites the public imagination about AI. Under the hood, the AI had learned to deal with unexpected situations; in one test, they even prodded the robot hand with a plush giraffe toy as it worked, trying to perturb it. It simply re-stabilized and kept solving. This robustness was unprecedented. The team proudly noted that the same reinforcement learning algorithms and codebase behind OpenAI Five had been applied here. “This shows that reinforcement learning isn’t just a tool for virtual tasks, but can solve physical-world problems requiring unprecedented dexterity,” the OpenAI blog declared. After the demo, members of the robotics squad recalled feeling emotional – years of perseverance had paid off. “We’ve reached our initial goal,” they wrote simply in the release, after recounting the multi-year journey from setting the goal in 2017, through manipulating a block in 2018, to cracking the cube in 2019. It was a testament to OpenAI’s culture of tackling hard problems with long-term focus. (Interestingly, not long after, OpenAI decided to wind down its robotics research – the resources needed were immense, and they saw even greater promise elsewhere. In hindsight, though, the Rubik’s Cube accomplishment stands as an iconic moment from the lab’s formative era.)

Perhaps the most consequential breakthroughs at OpenAI, however, were happening in the realm of language – giving rise to the now-famous family of GPT models. Around 2016, language generation was not yet the obvious center of gravity at the lab. Early models often lost coherence quickly. But researcher Alec Radford kept pushing on the problem, exploring whether next-step prediction on large text corpora might yield representations useful for much more than autocomplete. Some early attempts on broader internet text were unconvincing, but the work established a line of inquiry OpenAI would keep following.

A more focused result arrived in 2017 with OpenAI’s “unsupervised sentiment neuron” work. Training a character-level model to predict the next character in 82 million Amazon reviews, the team found that the network learned an internal representation of sentiment without being explicitly taught the concept. OpenAI reported that a simple linear model on top of that representation reached state-of-the-art sentiment-analysis accuracy on the Stanford Sentiment Treebank, and that much of the signal could be traced to a single highly predictive unit inside the network. The result suggested that large generative models might learn surprisingly abstract features as a byproduct of next-step prediction.

The decisive architectural shift came in 2017, when the Transformer made it much easier to train powerful language models in parallel. OpenAI moved quickly to adopt the approach. From there, the lab’s language work became more legible: scale the model, scale the data, and see what general-purpose capabilities emerge.

This shift also required a cultural change at OpenAI. The lab had to pivot from trying lots of small ideas to doubling down on a few big bets that demanded much more computation. Progress in language was starting to depend as much on infrastructure and scale as on new model ideas. OpenAI’s leadership was willing to make that bet, and the organization gradually reoriented around training larger, more general models.

By mid-2018, OpenAI had its first GPT result. The model later known as GPT-1 established the basic pattern of generative pretraining followed by task-specific fine-tuning. OpenAI described it as a large Transformer trained on only a few thousand books, roughly 5GB of text, yet it still achieved strong results across several language-understanding benchmarks. More important than any single benchmark was the pattern: a single pretrained model could transfer surprisingly well to multiple downstream tasks. That finding helped convince OpenAI that scale in language models was not just improving performance but changing the character of what the models could do.

Encouraged, OpenAI forged ahead on the scaling path. In early 2019, they unveiled GPT-2, a model an order of magnitude larger (1.5 billion parameters) trained on an even more expansive dataset of roughly 8 million web pages – essentially a sizable slice of the public internet. GPT-2 was a breakout moment for AI in the public consciousness. That February, OpenAI published a blog post titled “Better Language Models and Their Implications.” The tone was measured, but what it described was extraordinary. “We’ve trained a large-scale unsupervised language model which generates coherent paragraphs of text,” they wrote, noting it attained state-of-the-art results on language tasks “all without task-specific training.” In other words, GPT-2 could write impressively lucid text on almost any topic – you just prompted it with an opening sentence, and it could continue with news-like articles, fiction-style stories, even rudimentary translations or coding suggestions. Crucially, GPT-2 learned these skills simply from reading the internet, not from being explicitly taught each skill. This was a vindication of the “universal learner” idea the team had chased. When samples of GPT-2’s writing were released, many were stunned by how coherent and on-topic they were. The model would sometimes ramble or go off the rails, but often it produced a solid few paragraphs that could pass as written by a human – something virtually unimaginable just a couple of years prior.

Yet OpenAI’s announcement of GPT-2 was notable for another reason: they did not release the full model. Citing concerns about misuse – the possibility that such a system could be employed to generate fake news, spam, or disinformation at scale – the lab took the unprecedented step of withholding the trained model and releasing only a smaller, toned-down version for researchers. They framed it as an “experiment in responsible disclosure.” This decision sparked intense debate in the AI community. Some praised OpenAI for living up to its safety-first ethos; others criticized it for betraying the “open” in OpenAI and overhyping the dangers. Within the lab, the choice had not been taken lightly. It reflected an internal tension between two core values: openness and safety. The founding ethos said share everything – but not if sharing itself could cause harm. This was the first real test of that principle. In the end, they erred on the side of caution. As Dario Amodei (who led the GPT-2 effort) explained in interviews, the team saw potential malicious applications and wanted to set an example of restraint until more was understood about these powerful models. It was a coming-of-age moment for OpenAI. The company that started with an almost naïve optimism about open research now had in its hands a technology that made even its creators slightly uneasy. “We’re not saying we know the correct answer here,” Jack Clark said at the time. “We’re trying to navigate an area with a lot of uncertainty.” In the months that followed, OpenAI carried out a staged release, gradually publishing larger versions of GPT-2 as they monitored how it was used and as detection techniques improved. In November 2019, they released the full 1.5B model, noting no signs of widespread misuse in the interim. This measured approach won the lab a bit of trust in the AI safety community: it showed they were willing to hold back on publication if circumstances warranted, a stance that would have been anathema in traditional academia but one that aligned with their charter’s emphasis on avoiding “unacceptable safety risks” to society.

Amid the GPT-2 controversy, OpenAI was quietly working on the next leap. They understood that GPT-2, while impressive, was still far from any general intelligence – it had glaring weaknesses (like poor factual accuracy and logical reasoning). But they believed scaling further would yield diminishing returns unless they dramatically boosted computation. Training GPT-2 had already stretched their resources. To go bigger, they would need far more computing power – and money. In March 2019, OpenAI made a pivotal structural change: it transitioned from a purely non-profit entity to a capped-profit hybrid model. This restructuring allowed them to take in investors and pay out returns up to a certain cap, while ostensibly keeping the non-profit mission in charge. The motivation was straightforward – they needed billions of dollars for the compute and talent to reach AGI, and their non-profit bank account alone wasn’t sufficient. Not long after, in July 2019, OpenAI announced a major partnership with Microsoft, which invested $1 billion in the company and became its exclusive cloud provider, with Azure supplying the compute for large-scale AI training. This infusion of resources directly enabled the training of GPT-3, the next model in the GPT series.

And so, in 2020, OpenAI built a far larger language model. In their GPT-3 paper, Brown and colleagues described GPT-3 as an autoregressive model with 175 billion parameters, evaluated in the few-shot setting without gradient updates or fine-tuning. That scale gave the model a much broader range of behaviors than GPT-2: it could continue essays, answer questions, imitate styles, generate code-like text, and adapt to new tasks from examples placed directly in the prompt. GPT-3 still made obvious mistakes, but it clarified why OpenAI had been willing to reorganize itself around large-scale language modeling. The scaling strategy was no longer just a theory; it was producing capabilities that felt qualitatively broader.

The OpenAI researchers felt they were witnessing the emergence of something qualitatively new. Some were thrilled; some were unnerved. When beta access to GPT-3 reached outside developers in mid-2020, the broader tech world responded much the same way. Demos of GPT-3 generating webpages, fiction, summaries, and code spread quickly, even as its failures remained obvious. Altman himself cautioned that the model still made serious mistakes, but he also called it an early glimpse of how much AI could change the world. In GPT-3, OpenAI saw a validation of the scaling bet that had guided its work since the transformer breakthrough: larger models trained on broader corpora could produce capabilities that surprised even the people building them.

By the time GPT-3 emerged, OpenAI’s formative years were effectively over. In the span of just five years – 2015 through 2020 – the little non-profit lab with a handful of researchers had become one of the world’s most influential AI institutions. It had already answered some questions, raised harder ones, and helped set the terms of the debate about what advanced AI should be for and how it should be handled.

Legacies of the Early Years

OpenAI’s later trajectory – the rise of products like ChatGPT, the company’s increasingly commercial posture, and the governance questions that followed – lies beyond the scope of this story. Yet many of its defining strengths and contradictions were already visible by 2020.

One clear legacy is the emphasis on long-term, ambitious research. OpenAI resisted short-term temptations (no rush to monetize, no quick-turnaround client projects) during its early years, and this patience enabled breakthroughs like the GPT series. By operating initially as a non-profit with ample funding, the team had the freedom to pursue a risky hypothesis – that a single massive neural network can develop broad intelligence – and carry it through multiple generations. That academic-style freedom, combined with the scale of industry resources, was exactly what Brockman and Altman had hoped to achieve. It’s hard to imagine a purely corporate lab being allowed to spend millions on speculative research that might not pay off for years. Yet OpenAI’s early culture and structure did just that. The fact that GPT-3 was even built is a direct legacy of the early decision to prioritize big, society-shifting bets over incremental progress. As Altman would later put it, “we knew why we wanted to do it, even if we didn’t know how” – a mindset that persisted and led them to chase scale as the “how.”

Another legacy is OpenAI’s evolving effort to balance openness and safety. The GPT-2 release debate in 2019 was a crucible that tested the lab’s values. OpenAI’s choice to hold back the full model – controversial as it was – set a precedent for the industry on handling powerful AI responsibly. It showed that OpenAI was willing to adapt its interpretation of “open” in service of its higher mission to ensure positive outcomes. In the years since, that willingness to restrict information for safety has only grown (for instance, in 2020 they began offering models via an API service rather than open-sourcing them, citing misuse concerns). While some see this as a betrayal of the original ideal, it’s also a product of the nuanced understanding the team gained in those early years: transparency can be double-edged, and responsibility sometimes means choosing not to release something dangerous. The OpenAI Charter penned in 2018 already hinted at this, stating they would “avoid cutting corners on safety” and might reduce the dissemination of research if it posed an undue risk. In a real sense, the formative years taught OpenAI how to put its principles into practice, even when it meant making unpopular calls. This learning – that ideals must meet reality – became part of OpenAI’s institutional DNA.

The early lab also left a legacy of tools and norms that benefited the wider AI community, reflecting its commitment to shared benefit. OpenAI Gym, Universe, the strategy of publishing research blogs with full code, and even the concept of “OpenAI Baselines” (releasing reference implementations of algorithms) all stemmed from the lab’s founding ethos. By 2020, Gym had become a standard toolkit in reinforcement learning research, used by countless students and engineers. This open-source culture helped cement OpenAI’s reputation as a community-driven lab, not a siloed one. Many of the “best practices” in AI research dissemination today – like releasing model weights with papers, or writing lucid blog explanations for complex research – were championed by OpenAI in its formative period. That impact on how AI research is done and shared is a significant if less tangible legacy.

Equally important are the people those early years forged. OpenAI’s founding team formed a tight-knit brain trust, many of whom remained influential in the company (and the field) for years afterward. Ilya Sutskever stayed on as Chief Scientist, a guiding force behind the lab’s research direction. Greg Brockman grew into a leadership role as company President, often the public explainer of OpenAI’s vision. Others like John Schulman emerged as key figures – Schulman headed the reinforcement learning team that later trained ChatGPT via human feedback, a direct outgrowth of the techniques he and others were exploring in 2017. Even those who eventually left carried the culture with them: Andrej Karpathy went on to lead AI at Tesla, taking a spirit of open research into industry; Dario Amodei, who had co-led OpenAI’s safety and GPT-2 work, left to found Anthropic, a new AI safety-focused startup, arguably propagating OpenAI’s ethos in another organization. The fact that OpenAI’s alumni have spread its DNA across the tech world is itself a legacy of the lab’s early influence.

One might also argue that OpenAI’s formative experience of beating the odds – recruiting top talent against big competitors, achieving world-firsts with a small team – instilled a confidence (some might say audacity) that continues to define it. That “Rosewood Hotel spirit” from the night the idea was born, when a few people dared to think they could shape the future of AI, lives on. It lives on in the lab’s willingness to tackle grand challenges like multi-agent teamwork (OpenAI Five) and in its insistence on being at the forefront of AGI development. Musk’s departure in 2018, reportedly after conflicts over OpenAI’s direction and his attempt to take more control, was a bump in the road, but it didn’t derail the lab – if anything, it galvanized the remaining team to prove they could succeed on their own terms. The ideals set in the beginning provided a north star through such tumult. In the early GPT-3 era, Altman frequently reminded employees of OpenAI’s mission: to ensure AGI benefits all humanity. That wording was almost identical to what they had written in 2015. The core dream had stayed surprisingly constant even as strategy and structure evolved.

In a reflection written about a year after OpenAI’s founding, Greg Brockman mused on what they were trying to build. He invoked a Robert Heinlein story about a project to reach the moon, in which the chief engineer avoids all distractions to focus on the technical challenge. That, Brockman said, struck him as “the right way to run a project to build AI” – let the technical leaders lead, and shield them from anything that gets in the way. In OpenAI’s formative years, one can see how they endeavored to do just that. They created a bubble of focus for a small group of passionate people to chase a world-changing goal. In doing so, they recaptured some of the magic of historic research labs and made it their own. They learned that sometimes the biggest breakthroughs come not from a flash of genius, but from relentless scaling and refining; that a tight team can outpace far larger organizations if united by a mission; and that to responsibly handle powerful technology, one must constantly revisit one’s principles.

By 2020, the experiment that began as a non-profit counterweight to concentrated AI power had already changed the field. OpenAI had helped normalize open tooling, pushed reinforcement learning into public view, and demonstrated that scaling language models could unlock startling new capabilities. It had also discovered, firsthand, that its own founding ideals would sometimes collide: openness with safety, mission with compute economics, research culture with institutional power.

That tension is what makes OpenAI’s early years so revealing. This is not just a story about breakthrough models. It is a story about how an institution forms around a fast-moving technology before anyone fully understands where that technology will lead. The choices OpenAI made between 2015 and 2020 became part roadmap, part warning for the rest of the AI industry.

Sources

- OpenAI, “Introducing OpenAI” (December 11, 2015) – founding mission, initial team, and the announced funding commitment.

- Cade Metz, “Inside OpenAI, Elon Musk’s Wild Plan to Set Artificial Intelligence Free” (WIRED, April 27, 2016) – the Rosewood dinner, recruiting effort, and delayed public launch.

- Greg Brockman, “#define CTO, OpenAI” (January 9, 2017) – the apartment office, first whiteboard, Gym, Universe, and early operating culture.

- OpenAI Charter (2018) – broad benefit, long-term safety, and cooperation principles.

- OpenAI, “Dota 2” (August 11, 2017) – the Dendi 1-vs-1 milestone and OpenAI’s framing of the result.

- OpenAI, “OpenAI Five defeats Dota 2 world champions” (April 15, 2019) – the match against OG.

- OpenAI, “Dota 2 with large scale deep reinforcement learning” (December 13, 2019) – training duration and the world-champions milestone in technical context.

- OpenAI, “Learning dexterity” (July 30, 2018) – the robotics program before the Rubik’s Cube result.

- OpenAI, “Solving Rubik’s Cube with a robot hand” (October 15, 2019) – the final dexterity milestone and sim-to-real setup.

- OpenAI, “Unsupervised sentiment neuron” (April 6, 2017) – the Amazon-reviews experiment and the sentiment-neuron finding.

- OpenAI, “Improving language understanding with unsupervised learning” (June 11, 2018) – GPT-1 and the pretraining-plus-fine-tuning framework.

- OpenAI, “Better language models and their implications” (February 14, 2019) – GPT-2 scale and staged release.

- OpenAI, “OpenAI LP” (March 11, 2019) – the capped-profit restructuring and its stated rationale.

- Tom B. Brown et al., “Language Models are Few-Shot Learners” (arXiv, May 28, 2020) – GPT-3 size and few-shot performance framing.
