The strongest AI tools for film and video editing in 2026 are assistant layers for rough-cut assembly, masking and tracking, lip-synced localization, automatic speech recognition, searchable footage, content-aware encoding, and multimodal learning across audio, text, and image signals. The current ground truth is that AI is very good at repetitive post-production tasks and media retrieval, while editors still make the essential decisions about rhythm, emotion, intent, and what a cut should actually mean.

1. Automated Editing

Automated editing is becoming useful where the system can help assemble a first pass from transcripts, speaker changes, or multicam footage without pretending it can replace an editor's sense of story. AI is strongest at rough cuts, not final cuts. That makes it valuable for interviews, documentaries, social cutdowns, and multicam workflows where the first challenge is reducing raw material to a workable timeline quickly.

Blackmagic's current DaVinci Resolve release is a strong official grounding source because it openly frames AI as workflow assistance: AI IntelliScript can build timelines from text scripts, AI Multicam SmartSwitch can choose angles from speaker detection, and AI Audio Assistant can build a starting mix. Inference: automated editing is now strongest as timeline assembly and triage, not autonomous storytelling.

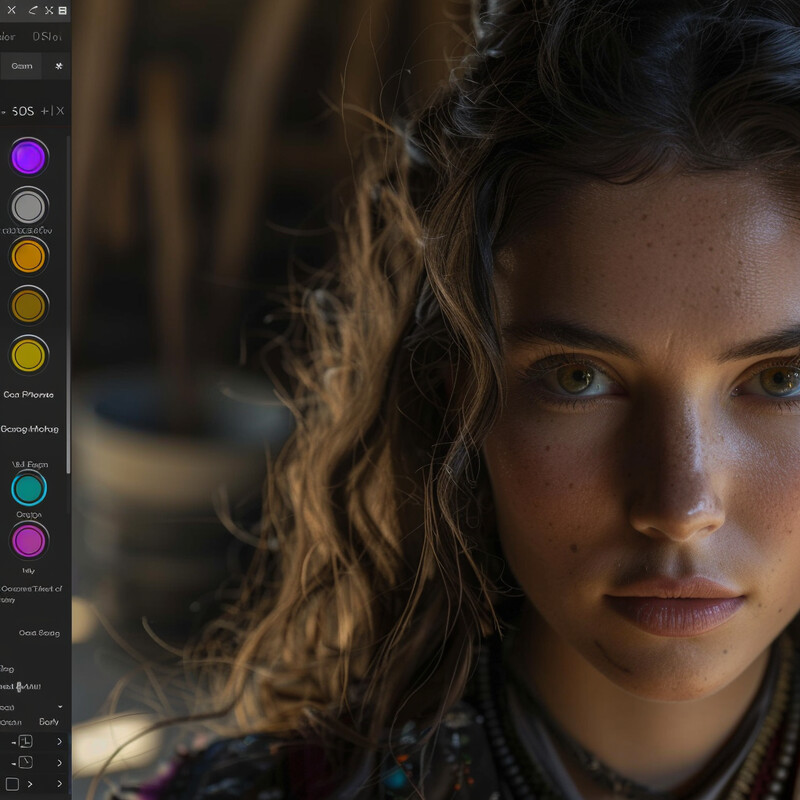
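Here is one way a transcript-driven first pass could work. It keeps the transcript segments that mention chosen keywords, then merges nearby segments into clips. The `Segment` structure, the `rough_cut` function, and the `max_gap` parameter are hypothetical illustrations. They are not how IntelliScript or any Resolve feature actually works.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical transcript segment: times in seconds plus speaker and text."""
    start: float
    end: float
    speaker: str
    text: str


def rough_cut(segments, keywords, max_gap=1.0):
    """Return (start, end) clips for segments mentioning any keyword.

    Clips separated by at most `max_gap` seconds are merged into one,
    which mimics trimming a long interview down to a workable selects reel.
    """
    wanted = [s for s in segments
              if any(k.lower() in s.text.lower() for k in keywords)]
    wanted.sort(key=lambda s: s.start)
    clips = []
    for s in wanted:
        if clips and s.start - clips[-1][1] <= max_gap:
            # Close enough to the previous clip: extend it instead of cutting.
            clips[-1] = (clips[-1][0], max(clips[-1][1], s.end))
        else:
            clips.append((s.start, s.end))
    return clips
```

The output is a selects list for an editor to review and trim. This sketch covers only the triage step, not the storytelling decisions.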

2. Color Correction and Grading

AI color tools are strongest when they automate isolation, tracking, and cleanup so colorists can spend more time on look decisions. Instead of manually tracing a face or object across a shot, editors can now use AI masks, depth maps, and refinement tools to speed routine work. The result is not automated taste. It is faster access to the parts of the frame that the colorist wants to shape.

DaVinci Resolve Studio and the DaVinci Neural Engine are the clearest current grounding sources here because they package Magic Mask, Depth Map, face refinement, and other AI-assisted grading tools into mainstream post-production software. Inference: AI is no longer a novelty layer in grading. It is part of the everyday masking, tracking, and refinement toolkit.
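Once a mask exists, applying a correction through it is mostly arithmetic. The sketch below assumes a soft mask with one alpha value per pixel, similar to what a tracked AI mask might produce. It blends an exposure-style gain into the masked area and leaves the rest of the image untouched. The function name and the flat pixel layout are hypothetical, and a real node-based grading pipeline is far more involved.

```python
def apply_masked_gain(pixels, mask, gain):
    """Apply a gain only where the soft mask selects the image.

    pixels: list of (r, g, b) floats in [0, 1]
    mask:   list of alpha values in [0, 1], one per pixel (1 = fully selected)
    gain:   multiplicative, exposure-style adjustment (hypothetical control)
    """
    if len(pixels) != len(mask):
        raise ValueError("pixels and mask must have the same length")
    out = []
    for (r, g, b), alpha in zip(pixels, mask):
        # Grade the pixel, clipping to the legal [0, 1] range.
        graded = [min(1.0, c * gain) for c in (r, g, b)]
        # Mix graded and original values by the mask's alpha (soft edges).
        out.append(tuple(alpha * gc + (1.0 - alpha) * c
                         for gc, c in zip(graded, (r, g, b))))
    return out
```

The soft alpha is what makes AI masks useful for grading: partial values at the mask edge feather the correction instead of leaving a hard line.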

3. Audio Syncing

Audio syncing is getting stronger because AI can now help align dialogue, match ADR to production sound, and support visually convincing dubbed performances. The most practical shift is not magic audio repair. It is that sync correction, voice matching, and multilingual dialogue replacement can now happen inside real post workflows with much less manual labor.

Flawless AI's product and localization pages are strong operational sources because they show lip-synced dubbing as a live commercial workflow, while Blackmagic's AI Dialogue Matcher reflects the same trend on the editing-suite side. Inference: AI syncing is now most credible in ADR, dubbing, and dialogue-matching tasks where timing precision matters but creative intent still needs supervision.
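Sync correction often starts by finding the time offset between two recordings of the same event, such as a camera scratch track and a separate sound recorder. A well-established technique for this is cross-correlation. You slide one signal against the other and keep the lag with the highest similarity score. The sketch below is a brute-force, pure-Python version with a hypothetical `best_offset` function. Production tools typically use FFT-based, normalized correlation. This is not a description of how AI Dialogue Matcher or Flawless work internally.

```python
def best_offset(reference, other, max_lag):
    """Return the lag (in samples) that best aligns `other` to `reference`.

    A positive lag means the same content appears `lag` samples later in
    `other`, i.e. reference[i] lines up with other[i + lag].
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        # Unnormalized cross-correlation over the overlapping samples.
        score = 0.0
        for i, x in enumerate(reference):
            j = i + lag
            if 0 <= j < len(other):
                score += x * other[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag
```

Dividing the lag by the sample rate gives the offset in seconds, which is what an editor would slip the audio by.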

4. Visual Effects Integration

AI is making VFX integration more practical by reducing the amount of frame-by-frame labor required for common compositing tasks. Object isolation, tracking, depth estimation, and clean-up are increasingly handled by AI-assisted tools, which lets artists spend more time on shot design and less on repetitive preparation. This is a genuine post-production gain, even though it does not eliminate the need for VFX supervision or artistic review.

Blackmagic's official Resolve pages are enough to show the current ground truth here: Magic Mask, object removal, depth-aware tools, and tracking are no longer experimental concepts. Inference: AI is strongest in VFX when it accelerates prep and cleanup for human artists instead of claiming to generate finished visual effects on its own.

5. Content-Aware Editing Tools

Content-aware editing tools matter because editors increasingly need to reframe, crop, isolate, or remove elements without hand-keyframing every change. AI can now track a subject, maintain framing, detect scene boundaries, and remove distractions with much less setup. This is especially valuable when editors are repurposing footage across aspect ratios or cleaning shots under time pressure.

The Resolve Studio feature set is a strong official anchor because it explicitly includes smart reframe, object removal, shot detection, and object isolation. Inference: content-aware editing is now a real post-production category built around subject tracking and scene understanding rather than a marketing slogan about "smart editing."
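Smart reframing comes down to moving a crop window so that it follows a tracked subject. The sketch below assumes that a tracker already supplies the subject's horizontal center for each frame. It computes a vertical 9:16 crop for each frame at full frame height. Exponential smoothing damps tracker jitter, and clamping keeps the window inside the frame. The function name, the `smoothing` factor, and the input format are hypothetical. This is not Resolve's Smart Reframe implementation.

```python
def reframe_crop(subject_x, frame_w, frame_h, target_aspect=9 / 16, smoothing=0.8):
    """Compute per-frame (left, width) crops that follow a tracked subject.

    subject_x: subject center x-positions in pixels, one per frame.
    The crop keeps the full frame height; width = height * target_aspect.
    """
    if not subject_x:
        return []
    crop_w = round(frame_h * target_aspect)
    if crop_w > frame_w:
        raise ValueError("target aspect is wider than the source frame")
    crops = []
    center = subject_x[0]
    for x in subject_x:
        # Exponential smoothing: move only part of the way toward the new position.
        center = smoothing * center + (1 - smoothing) * x
        left = round(center - crop_w / 2)
        # Clamp so the crop never leaves the source frame.
        left = max(0, min(frame_w - crop_w, left))
        crops.append((left, crop_w))
    return crops
```

A higher `smoothing` value gives calmer camera-like motion but lags behind fast subjects. That trade-off is why automated reframes still get a quick human check.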

6. Enhanced Frame Interpolation

Frame interpolation is strongest today as a rescue and finishing tool: smoothing speed changes, improving de-interlacing, and helping low-frame-rate material feel more usable in modern pipelines. AI does not make every interpolated frame perfect, especially around difficult motion, but it has materially improved the quality of slow motion and restoration workflows. That makes it practical for editors who need better motion handling without re-shooting material.

Resolve Studio is a useful official source here because it specifically highlights advanced optical flow for smoother speed changes along with AI de-interlacing and SuperScale. Inference: enhanced interpolation is now a practical quality-improvement layer in edit and finishing pipelines, even though difficult motion still needs human review for artifacts.
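To see what optical flow improves, it helps to look at the naive baseline it replaces: frame blending. Each output frame in a speed change maps to a fractional source position. The two nearest source frames are then cross-faded by that fraction. The sketch below assumes frames are flat lists of pixel values, and its function names are hypothetical. Motion-compensated tools instead warp pixels along estimated motion vectors. That is why they avoid the ghosting blending produces on fast motion.

```python
import math


def retime_plan(num_source, speed):
    """Map each output frame to (frame_a, frame_b, weight) for a speed change.

    speed < 1 produces slow motion (e.g. 0.5 = half speed). `weight` is the
    share of frame_b to mix in when cross-fading.
    """
    if speed <= 0:
        raise ValueError("speed must be positive")
    plan = []
    k = 0
    while k * speed <= num_source - 1:
        t = k * speed                      # fractional source position
        i = math.floor(t)
        j = min(i + 1, num_source - 1)     # clamp at the last source frame
        plan.append((i, j, t - i))
        k += 1
    return plan


def blend(frame_a, frame_b, weight):
    """Naive cross-fade between two frames; weight is the share of frame_b."""
    return [(1.0 - weight) * a + weight * b for a, b in zip(frame_a, frame_b)]
```

The position is computed as `k * speed` instead of being accumulated, so floating-point error does not drift across long clips.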

7. Subtitles and Closed Captions

Captions are now one of the clearest operational AI wins in video editing. Automatic transcription can generate subtitles quickly enough that captioning is no longer a luxury step reserved for large teams. The current challenge has shifted from "can we transcribe this?" to "how do we correct, style, translate, and deliver captions responsibly across languages and platforms?"

YouTube's caption help pages and Blackmagic's current Resolve release show how automatic captioning is already productized, while the ACL subtitle-translation paper and the 2025 ChatGPT-versus-human subtitling study provide the research caveat: AI makes first-pass captioning and translation much faster, but human review is still important for nuance and final quality.
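Whatever produces the transcript, the deliverable is often a plain timed-text file. The sketch below formats cues that have already been corrected as SubRip (.srt), a widely used caption format with `HH:MM:SS,mmm` timestamps. The cue tuples and function names are hypothetical. Transcription, translation, and the human review described above all happen before this step.

```python
def srt_timestamp(seconds):
    """Format seconds as an SRT timestamp, e.g. 3725.5 -> '01:02:05,500'."""
    ms = round(seconds * 1000)
    h, ms = divmod(ms, 3_600_000)
    m, ms = divmod(ms, 60_000)
    s, ms = divmod(ms, 1000)
    return f"{h:02d}:{m:02d}:{s:02d},{ms:03d}"


def to_srt(cues):
    """Render (start_seconds, end_seconds, text) cues as SubRip text.

    Each block is: a 1-based index, a 'start --> end' line, the caption
    text, and a blank line separating it from the next block.
    """
    blocks = []
    for n, (start, end, text) in enumerate(cues, start=1):
        blocks.append(f"{n}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}\n")
    return "\n".join(blocks)
```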

8. Scene and Object Recognition

Scene and object recognition is becoming a standard post-production utility because it turns footage into searchable material. Instead of scrubbing manually, editors can use AI to find shots, objects, or scene boundaries quickly. This is one of the strongest areas for computer vision in editing because the return is immediate: less logging labor, faster retrieval, and better organization across large footage libraries.

Google Cloud Video Intelligence is a strong official grounding source because its documentation exposes shot change detection, object tracking, and label analysis as routine services rather than lab demos. Inference: searchable video is now a real production capability, especially when editors need time-coded tags and reliable footage retrieval across big archives.
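Google's service is a managed API, but a classic, non-learned technique shows the underlying idea of shot change detection. It compares the luminance histograms of consecutive frames and flags a cut when they differ sharply. The sketch below assumes grayscale frames stored as flat lists of integer 0-255 values. Its `threshold` of 0.5 is an arbitrary illustrative choice. This is not how Video Intelligence detects shots, and it will miss gradual transitions such as dissolves.

```python
def histogram(frame, bins=16):
    """Normalized luma histogram of a frame given as integer 0-255 values."""
    counts = [0] * bins
    for v in frame:
        counts[min(v * bins // 256, bins - 1)] += 1
    total = len(frame)
    return [c / total for c in counts]


def detect_shot_changes(frames, threshold=0.5):
    """Return indices of frames that start a new shot.

    The distance is half the L1 difference between histograms (total
    variation distance), so it ranges from 0 (identical) to 1 (disjoint).
    """
    if not frames:
        return []
    cuts = []
    prev = histogram(frames[0])
    for i in range(1, len(frames)):
        cur = histogram(frames[i])
        diff = 0.5 * sum(abs(a - b) for a, b in zip(prev, cur))
        if diff > threshold:
            cuts.append(i)
        prev = cur
    return cuts
```

Cut indices become time-coded markers once multiplied by the frame duration, which is the kind of searchable metadata the section describes.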

9. Adaptive Streaming

Adaptive streaming is increasingly shaped by quality-driven optimization rather than blunt bitrate rules alone. Editors and distribution teams now care not only about whether a file plays, but whether it preserves perceptual quality efficiently across devices and network conditions. This is where content-aware encoding matters: the system allocates bits according to scene complexity and what the viewer is likely to notice, not just a fixed technical target.

AWS MediaConvert's QVBR documentation is a good official source because it explains quality-defined variable bitrate as a way to preserve perceptual quality while using bandwidth more efficiently. Inference: adaptive streaming is getting stronger when delivery systems become content-aware instead of treating every scene as equally hard to encode.
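The core idea of content-aware encoding, spending more bits on hard scenes and fewer on easy ones, can be sketched as a budget split. The hypothetical function below allocates bitrate to each segment in proportion to a complexity score. It caps each segment at a maximum and passes the freed budget to the remaining segments. This is a simplified illustration, not a reproduction of QVBR. QVBR is configured through its own quality-level and maximum-bitrate settings and relies on AWS's internal rate control.

```python
def allocate_bitrates(complexities, average_kbps, max_kbps):
    """Split a bitrate budget across segments in proportion to complexity.

    complexities: positive per-segment difficulty scores (hypothetical metric)
    Returns per-segment kbps values, each <= max_kbps, whose mean does not
    exceed average_kbps. Budget freed by capped segments is redistributed.
    """
    if any(c <= 0 for c in complexities):
        raise ValueError("complexities must be positive")
    n = len(complexities)
    budget = average_kbps * n
    rates = [0.0] * n
    active = set(range(n))
    while active:
        weight = sum(complexities[i] for i in active)
        share = {i: budget * complexities[i] / weight for i in active}
        capped = {i for i in active if share[i] >= max_kbps}
        if not capped:
            for i in active:
                rates[i] = share[i]
            break
        # Pin capped segments at the ceiling and re-split what is left.
        for i in capped:
            rates[i] = max_kbps
            budget -= max_kbps
        active -= capped
    return rates
```

In this example, a complex action segment hits the cap and the two quieter segments share the remaining budget.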

10. Predictive Analytics

Predictive analytics is most grounded in editing when it helps teams evaluate retention, pacing, drop-off points, and version performance after release or during testing. That is a much stronger claim than saying AI can predict artistic success from editing choices alone. In practice, the useful shift is that post teams can now learn faster from real audience behavior and feed that back into trailers, cold opens, pacing, and format-specific cuts.

YouTube's audience-retention documentation is a useful operational anchor because it makes clear how much edit quality is now judged through measurable viewer drop-off and key moments. Inference: predictive analytics in editing is strongest as feedback on engagement and cut performance, not as a machine's proclamation of what art will succeed.
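Retention analysis reduces to a simple curve: the share of viewers still watching at each point in the cut. YouTube Studio reports this curve directly, so the sketch below only illustrates the arithmetic. It assumes a hypothetical list of per-viewer watch durations in seconds. It also finds the interval with the steepest drop, the kind of spot an editor might revisit when re-cutting a cold open or trailer.

```python
def retention_curve(watch_seconds, duration, step=10):
    """Return (time, fraction_still_watching) pairs every `step` seconds."""
    n = len(watch_seconds)
    return [(t, sum(1 for w in watch_seconds if w >= t) / n)
            for t in range(0, duration + 1, step)]


def steepest_drop(curve):
    """Return (start, end, drop) for the interval that loses the most viewers."""
    drops = [(a[0], b[0], a[1] - b[1]) for a, b in zip(curve, curve[1:])]
    return max(drops, key=lambda d: d[2])
```

This diagnoses where viewers left, not why. Deciding whether the pacing, the content, or the promise of the opening caused the drop is still an editorial judgment.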

Sources and 2026 References

- DaVinci Resolve What's New grounds rough-cut automation, AI audio, captions, and dialogue-matching claims.

- DaVinci Resolve Studio supports smart reframe, object removal, optical flow, grading, and AI finishing features.

- DaVinci Neural Engine grounds AI masking, tracking, and grading-assist claims.

- Flawless product overview and Flawless localization and dubbing support lip-sync localization and dubbing claims.

- Google Cloud shot change detection, object tracking, and label detection ground searchable-footage and scene-recognition claims.

- AWS MediaConvert QVBR and How QVBR works support adaptive-streaming and encoding-efficiency claims.

- YouTube subtitle and caption help grounds current caption workflow practice.

- YouTube audience retention reports and key moments for audience retention ground the predictive-analytics section.

- Translating Movie Subtitles by Large Language Models using Movie-Meta Information is the main research anchor for subtitle translation.

- A Comparative Analysis of ChatGPT and Human Translators in Movie Subtitling supports the human-review caveat for localization.

Related Yenra Articles

- Film Script Analysis adds the narrative and screenplay-planning layer that often precedes editing decisions.

- Digital Asset Management covers the indexing, retrieval, and metadata side of large media libraries.

- Radio and Podcast Production shows the audio-post side of media workflows.

- Interactive Storytelling and Narratives extends media editing into more dynamic and stateful forms of narrative design.