Autonomous vehicles in 2026 are real, but not in the simplistic way the older hype cycle promised. The field has split into two practical layers. One is mainstream advanced driver assistance systems (ADAS): adaptive cruise control, automatic emergency braking, lane centering, blind-spot monitoring, and driver monitoring in consumer vehicles. The other is limited but genuine Level 4 autonomy: driverless ride-hailing and tightly controlled commercial operations within carefully defined service areas.

That distinction matters. The Insurance Institute for Highway Safety (IIHS) still states plainly that truly driverless cars are not for sale to consumers, while Waymo's public safety and service pages show that driverless service is already operating at scale in mapped, supported environments. In other words, the technology is not imaginary. It is just domain-bound. The strongest systems work inside a defined operational design domain (ODD), with layered sensing, fallback systems, safety monitoring, and ongoing fleet operations behind them.

This update reflects the category as of March 15, 2026. It focuses on the parts of the stack that are actually shaping outcomes now: sensor fusion, computer vision, prediction, path planning, ODD discipline, consumer ADAS, rule awareness, fleet health monitoring, cybersecurity, and driver monitoring systems. Inference: the strongest progress in vehicle autonomy is not coming from cars that can supposedly drive anywhere. It is coming from systems that know exactly where their limits are and are engineered around them.

1. Enhanced Perception Systems

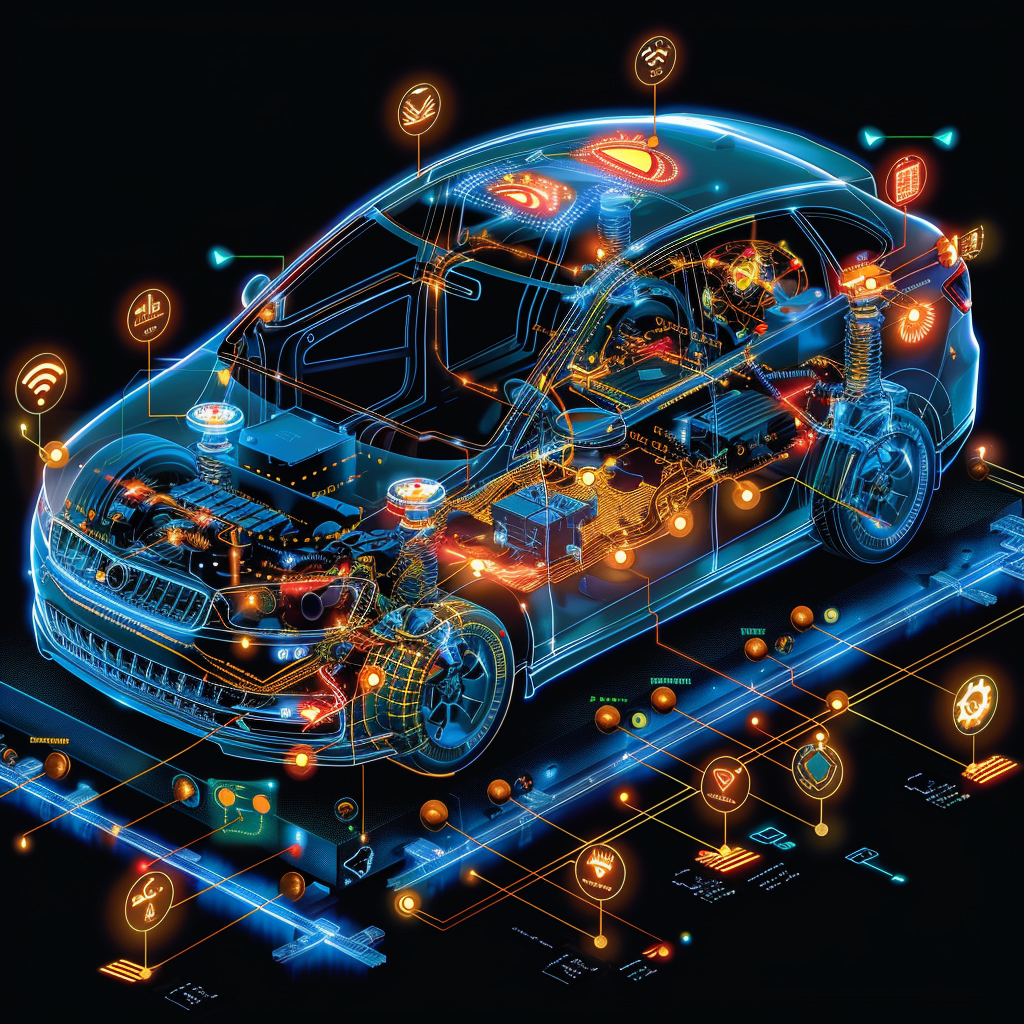

Perception remains the foundation of autonomy, and in 2026 the most credible systems still rely on overlapping sensing rather than one magical input. Production autonomy combines computer vision, radar, lidar, inertial signals, and mapping context so the vehicle can keep working when any one source is degraded by glare, darkness, weather, occlusion, or temporary ambiguity.

IIHS notes that modern front crash prevention systems commonly use cameras, radar, or lidar to watch the road ahead, and Waymo's public technology page emphasizes multiple backup systems plus redundant inertial positioning for vehicle motion tracking. Inference: the real 2026 perception lesson is not that one sensor has won. It is that reliable autonomy still depends on sensor fusion and graceful degradation when the environment gets messy.
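One way to picture fusion with graceful degradation is inverse-variance weighting over whichever sensors are currently healthy. The sketch below is illustrative only: the `RangeReading` type, the `healthy` flag, and the variance values are assumptions made for this example, not a description of any vendor's perception stack.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RangeReading:
    """One sensor's estimate of distance to the lead object, in meters."""
    source: str            # label only, e.g. "camera", "radar", "lidar"
    distance_m: float
    variance: float        # assumed measurement variance, in m^2
    healthy: bool = True   # False when glare, weather, or a fault degrades it


def fuse_ranges(readings: list[RangeReading]) -> Optional[tuple[float, float]]:
    """Inverse-variance weighted fusion over the healthy readings.

    Returns (fused_distance_m, fused_variance), or None when no sensor is
    usable, which the caller should treat as a reason to fall back.
    """
    usable = [r for r in readings if r.healthy and r.variance > 0]
    if not usable:
        return None
    weights = [1.0 / r.variance for r in usable]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, usable)) / total
    return fused, 1.0 / total
```

With a camera reading of 42.0 m (variance 4.0) and a radar reading of 40.0 m (variance 1.0), and a lidar marked unhealthy, the function returns 40.4 m with variance 0.8. The estimate keeps working on the remaining sensors, and the larger variance makes the lost confidence explicit.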

2. Improved Decision Making

Decision-making in autonomous vehicles is improving, but the strongest 2026 systems are not betting everything on one opaque end-to-end reflex. They combine learned perception and prediction with constrained planning, explicit fallback behavior, and redundant control paths that can bring the vehicle to a minimal-risk stop when something goes wrong.

Waymo's technology page highlights backup collision detection, redundant steering, redundant braking, and backup power for critical driving functions, while its public safety research catalog emphasizes collision-avoidance testing and safety-readiness methodology rather than marketing claims about universal autonomy. Inference: the 2026 quality bar for decision-making is not simply smooth driving. It is proving that the system can fail safely when reality does not match the nominal plan.
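The fail-safe idea can be sketched as a small supervisor that chooses a driving mode from health signals. Everything here is hypothetical: the `Mode` names, the three boolean inputs, and the policy of continuing in a degraded mode on a single healthy path are assumptions for illustration, not how any deployed system is documented to behave.

```python
from enum import Enum


class Mode(Enum):
    NOMINAL = "nominal"
    DEGRADED = "degraded"                    # redundancy lost, continue cautiously
    MINIMAL_RISK_STOP = "minimal_risk_stop"  # bring the vehicle to a safe stop


def select_mode(primary_ok: bool, backup_ok: bool, plan_valid: bool) -> Mode:
    """Pick a driving mode from the health of two redundant paths and the plan.

    Assumes an independent safe-stop channel remains available even when
    both driving paths report a fault.
    """
    # No trustworthy plan, or no healthy driving path: stop safely.
    if not plan_valid or not (primary_ok or backup_ok):
        return Mode.MINIMAL_RISK_STOP
    if primary_ok and backup_ok:
        return Mode.NOMINAL
    # Exactly one path is healthy: keep driving, but without redundancy.
    return Mode.DEGRADED
```

The design choice worth noting is that the stop condition is checked first, so no combination of inputs can reach nominal driving without a valid plan.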

3. Predictive Capabilities

Autonomous vehicles do not only recognize what is around them. They must estimate what road users are likely to do next. That means predicting whether a pedestrian will step out, whether a cyclist will drift around a parked car, whether a vehicle is about to merge, and whether a near-term path will stay open long enough to commit safely.

Waymo's research archive includes work on collision avoidance and on simulated driving behavior in reconstructed fatal crashes within an autonomous vehicle operating domain. That mix matters because it shows how much of AV progress depends on modeling the near future and replaying difficult interactions, not only labeling the present moment. Inference: prediction is one of the places where AV systems become noticeably safer or noticeably brittle, especially in dense urban driving.
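As a rough illustration of what "modeling the near future" means, the sketch below rolls two agents forward under a constant-velocity assumption and reports their closest predicted approach. Production predictors are learned and multi-modal; constant velocity is a deliberately simple stand-in, and the function name, horizon, and time step are assumptions for this example.

```python
import math


def min_separation(ego_pos, ego_vel, other_pos, other_vel,
                   horizon_s=3.0, dt=0.1):
    """Closest predicted approach between ego and another road user.

    Positions are (x, y) in meters and velocities are (vx, vy) in m/s.
    Both agents are assumed to hold their current velocity.
    Returns (min_distance_m, time_s_at_min).
    """
    steps = int(round(horizon_s / dt))
    best_d, best_t = math.inf, 0.0
    for k in range(steps + 1):
        t = k * dt
        dx = (other_pos[0] + other_vel[0] * t) - (ego_pos[0] + ego_vel[0] * t)
        dy = (other_pos[1] + other_vel[1] * t) - (ego_pos[1] + ego_vel[1] * t)
        d = math.hypot(dx, dy)
        if d < best_d:
            best_d, best_t = d, t
    return best_d, best_t
```

For example, an ego vehicle at the origin traveling 10 m/s along x and a pedestrian at (20, -3) walking 1.5 m/s toward the lane are predicted to meet at about t = 2 s. A planner would treat a small minimum separation inside the horizon as a reason to slow or yield.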

4. Optimal Route Planning

Route planning in 2026 is less about choosing the mathematically shortest trip and more about staying inside a validated domain. Real autonomous services plan around mapped roads, known pickup and dropoff logic, local traffic patterns, and the conditions their vehicles are designed to handle. That is why modern autonomy is inseparable from the idea of an ODD.

IIHS says truly driverless cars are not for sale to consumers, while Waymo's rides page makes its mapped service areas and operating process explicit. Inference: the key route-planning truth in 2026 is that reliable autonomy comes from matching the trip to the domain, not from pretending every road, every weather condition, and every edge case can already be handled everywhere.
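Matching a trip to the domain can be sketched as a check that runs before a route is accepted. The `OddLimits` fields, segment identifiers, and weather labels below are hypothetical; a real ODD definition covers far more dimensions than this minimal example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OddLimits:
    mapped_segments: frozenset      # road segment ids the service has validated
    max_speed_limit_kph: float      # highest posted limit the system supports
    allowed_weather: frozenset      # weather labels the system is cleared for


@dataclass(frozen=True)
class Segment:
    segment_id: str
    speed_limit_kph: float


def route_in_odd(route, weather, odd):
    """Return (ok, reasons); reasons lists every ODD violation found."""
    reasons = []
    if weather not in odd.allowed_weather:
        reasons.append(f"weather '{weather}' is outside the ODD")
    for seg in route:
        if seg.segment_id not in odd.mapped_segments:
            reasons.append(f"segment {seg.segment_id} is not mapped")
        elif seg.speed_limit_kph > odd.max_speed_limit_kph:
            reasons.append(f"segment {seg.segment_id} exceeds the speed envelope")
    return (not reasons, reasons)
```

Returning the reasons rather than a bare boolean lets a dispatcher reroute around an unmapped segment or decline the trip with a clear explanation.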

5. Adaptive Cruise Control

For most people, the first lived experience of "vehicle autonomy" is not a robotaxi. It is adaptive cruise control, automatic emergency braking, and highway assistance. These systems matter because they already reduce workload and crash risk, but they should still be understood as driver assistance, not full autonomy.

IIHS says advanced crash-avoidance features are becoming widespread and notes that front crash prevention with automatic braking cuts rear-end crashes in half in real-world studies. A newer MITRE report on model-year 2015-2023 ADAS extends that evidence base across modern systems. Inference: the most important autonomy story for ordinary drivers is still incremental but meaningful safety improvement through ADAS, not a sudden jump to unsupervised driving.
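The core quantity behind forward collision warning and automatic braking is time to collision: the gap to the lead vehicle divided by the closing speed. The sketch below is a simplified illustration; the threshold values and stage names are assumptions chosen for the example, not figures from IIHS or any manufacturer.

```python
import math


def time_to_collision(gap_m, ego_speed_mps, lead_speed_mps):
    """Seconds until contact if both vehicles hold their current speed."""
    closing = ego_speed_mps - lead_speed_mps
    if closing <= 0:
        return math.inf  # not closing on the lead vehicle
    return gap_m / closing


def aeb_stage(ttc_s, warn_below_s=2.5, brake_below_s=1.5):
    """Map time to collision onto a response stage (illustrative thresholds)."""
    if ttc_s < brake_below_s:
        return "brake"
    if ttc_s < warn_below_s:
        return "warn"
    return "none"
```

With a 30 m gap, an ego speed of 25 m/s, and a lead vehicle at 15 m/s, the time to collision is 3.0 s and no intervention is triggered. At a 12 m gap with the same speeds it drops to 1.2 s, which this sketch maps to braking.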

6. Lane Keeping Assistance

Lane keeping and lane centering are now common, but 2026 evidence keeps pointing to the same caution: these features are useful only when paired with good supervision, clear limits, and active driver engagement. Construction zones, faded markings, weather, unusual lane geometry, and complacent users are still major failure points.

IIHS now rates partial automation systems not just on lane-centering performance but on driver monitoring, attention reminders, emergency procedures, and other safeguards, and many high-profile systems still score Marginal or Poor overall. Inference: good lane support is not enough. The stronger 2026 standard is whether the system also manages human overtrust and can handle the moment when assistance stops being enough.

7. Traffic Sign Recognition

Traffic sign recognition is still useful, but by 2026 it is best seen as one ingredient in a larger rule-awareness stack. Vehicles increasingly combine sign reading with map context, localization, lane geometry, and route logic instead of trusting one visual read in isolation. That is especially important for temporary signage, unusual local rules, and signs that are partially occluded or damaged.

Waymo's service and technology materials consistently present driving as a mapped, context-aware operation rather than a raw image-classification task, and IIHS emphasizes that real-world crash avoidance performance depends on the interaction between sensing, software, and deployment conditions. Inference: sign recognition in 2026 is useful, but the systems that work best are the ones that treat road rules as a contextual problem instead of a single-camera problem.
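Treating road rules as a contextual problem can be sketched as reconciling a camera sign read with the map's speed limit instead of trusting either alone. The confidence threshold, the set of plausible limits, and the conservative tie-break below are all assumptions made for this example.

```python
from typing import Optional

# Hypothetical set of plausible posted limits: 10, 20, ..., 130 km/h.
PLAUSIBLE_LIMITS_KPH = frozenset(range(10, 140, 10))


def resolve_speed_limit(map_limit_kph: int,
                        sign_read_kph: Optional[int],
                        sign_confidence: float,
                        min_confidence: float = 0.8):
    """Return (limit_kph, source) after reconciling map and camera."""
    # No read, or a weak read: fall back to the map.
    if sign_read_kph is None or sign_confidence < min_confidence:
        return map_limit_kph, "map"
    # A confident but implausible read (e.g. misread digits) is rejected.
    if sign_read_kph not in PLAUSIBLE_LIMITS_KPH:
        return map_limit_kph, "map"
    if sign_read_kph == map_limit_kph:
        return map_limit_kph, "map+sign"
    # Confident disagreement (e.g. a temporary sign): take the lower limit.
    return min(map_limit_kph, sign_read_kph), "conservative"
```

Choosing the lower value on disagreement is one simple policy for temporary signage; a real system would also weigh map freshness, localization quality, and lane association.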

8. Condition Monitoring

Vehicle autonomy is also a fleet-operations problem. Commercial driverless services need AI-based health monitoring, diagnostics, and predictive maintenance so sensors stay calibrated, compute systems stay healthy, batteries and tires stay within acceptable ranges, and rare faults are caught before they become service interruptions or safety issues.

Waymo's public safety impact hub reflects a service operating at meaningful scale through September 2025, and predictive-maintenance literature keeps showing why that matters operationally: AI can reduce unplanned downtime and improve maintenance timing across complex equipment fleets. Inference: the practical success of autonomous vehicles depends not only on driving intelligence, but on whether the fleet can stay healthy, available, and well-calibrated over time.
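A very small version of fleet health monitoring is drift detection against a known-good baseline. The sketch below flags samples that sit more than a chosen number of standard deviations from the baseline mean. The metric, window size, and threshold are hypothetical, and a plain z-score stands in for the learned models the predictive-maintenance literature describes.

```python
from statistics import mean, stdev


def drift_alerts(values, baseline_n=20, z_threshold=3.0):
    """Indices of samples that drift beyond z_threshold baseline deviations.

    The first baseline_n samples are assumed to come from a healthy,
    well-calibrated period and define the reference mean and spread.
    """
    if baseline_n < 2 or len(values) < baseline_n:
        raise ValueError("need at least baseline_n >= 2 baseline samples")
    baseline = values[:baseline_n]
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot scale deviations")
    return [
        i for i, v in enumerate(values[baseline_n:], start=baseline_n)
        if abs(v - mu) > z_threshold * sigma
    ]
```

Applied to a signal such as a camera-to-lidar calibration residual, a returned index is a cue to schedule recalibration before the drift affects perception.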

9. Enhanced Security Features

Autonomous vehicles are connected cyber-physical systems, so their safety case has to include software security, fleet communications, over-the-air updates, supplier dependencies, and backend operations. AI helps here by spotting anomalous behavior and prioritizing suspicious events, but the larger point is that autonomy expands the attack surface as well as the capability set.

Waymo says protecting the Waymo Driver from malicious activity is a core requirement and describes its process as aligned with industry and government-defined best practices. Upstream's 2025 automotive cybersecurity report likewise underscores that vehicle cyber incidents are still rising and increasingly affect fleets and mobility services rather than isolated single vehicles. Inference: autonomous mobility cannot be treated as only a driving problem. It is a cybersecurity and systems-governance problem too.
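One concrete piece of that attack surface is update integrity: a vehicle should refuse an over-the-air payload whose authentication tag does not verify. The sketch below uses a shared-key HMAC from the Python standard library purely as a stand-in. Production update systems typically rely on asymmetric signatures and hardware-protected keys, and the function names here are hypothetical.

```python
import hashlib
import hmac


def sign_update(payload: bytes, key: bytes) -> str:
    """Compute a hex HMAC-SHA256 tag for an update payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_update(payload: bytes, tag_hex: str, key: bytes) -> bool:
    """Accept the payload only if its tag matches.

    compare_digest performs a constant-time comparison to avoid leaking
    how many leading characters of the tag were correct.
    """
    expected = sign_update(payload, key)
    return hmac.compare_digest(expected, tag_hex)
```

Any change to the payload after signing causes verification to fail, which is the property an update client needs before it installs anything.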

10. Driver Monitoring

Driver monitoring is one of the clearest dividing lines between responsible partial automation and overpromised autonomy. Whenever a consumer vehicle still expects the human to supervise, take over, or stay attentive, a driver monitoring system is not an optional luxury. It is part of the safety architecture.

IIHS's partial automation safeguards ratings put driver monitoring at the center of their evaluation because many automation-branded systems still have weak safeguards against inattention and overtrust. The institute also says the driver will continue to share driving responsibilities for the foreseeable future. Inference: in consumer vehicles, the most important AI question is often not whether the car can steer itself for a while. It is whether the system can accurately tell when the human is no longer ready to help.
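The supervisory logic of a driver monitoring system can be sketched as an escalation ladder driven by how long the driver has been inattentive. The sample format, stage names, and time thresholds below are assumptions for illustration and are not taken from the IIHS rating criteria.

```python
def inattentive_duration(samples):
    """Seconds since the driver was last seen attentive.

    samples is a time-ordered list of (timestamp_s, attentive) pairs,
    for example from a gaze tracker. Returns 0.0 for an empty list.
    """
    if not samples:
        return 0.0
    latest_t = samples[-1][0]
    for t, attentive in reversed(samples):
        if attentive:
            return latest_t - t
    # Never attentive in this window: count from the first sample.
    return latest_t - samples[0][0]


def escalation_level(inattentive_s, visual_s=3.0, audible_s=6.0, slowdown_s=10.0):
    """Map inattention time onto an escalating response (illustrative)."""
    if inattentive_s >= slowdown_s:
        return "controlled_slowdown"
    if inattentive_s >= audible_s:
        return "audible_alert"
    if inattentive_s >= visual_s:
        return "visual_alert"
    return "none"
```

If the driver was last attentive at t = 1 s and the latest sample is at t = 8 s, the inattentive duration is 7 s, which this sketch maps to an audible alert. The final stage matters most: when alerts are ignored, the system needs a safe way to end assistance rather than continuing unsupervised.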

Sources and 2026 References

- Waymo: Waymo Rides.

- Waymo: Waymo Driver.

- Waymo: Safety Impact.

- Waymo: Safety Research.

- arXiv: Collision avoidance testing of the Waymo automated driving system.

- Accident Analysis & Prevention: Waymo simulated driving behavior in reconstructed fatal crashes within an autonomous vehicle operating domain.

- IIHS: Advanced driver assistance.

- IIHS: Partial automation safeguards.

- MITRE: Real-world Effectiveness of Model-year 2015-2023 ADAS.

- PubMed: Adaptive AI and machine learning models for predictive maintenance in industry 4.0.

- Upstream: 2025 Global Automotive Cybersecurity Report.

Related Yenra Articles

- Traffic Management Systems shows how smarter roads and signals complement vehicle-side autonomy.

- Electric Vehicle Optimization connects autonomy to charging, routing, and energy-aware fleet operations.

- Cybersecurity Measures covers the security layer that connected autonomous fleets increasingly depend on.

- Carpooling and Ridesharing Optimization looks at the shared-mobility model that makes robotaxi economics and dispatch more relevant.