Surgical robots are strongest in 2026 when AI serves as a supervised perception, planning, and control layer around the surgeon, not as an unsupervised machine that replaces the operating team. The real progress is in shared autonomy, computer vision, image guidance, force-aware manipulation, workflow intelligence, and structured training.

That distinction matters because surgery is not a benchmark task. It is a high-stakes, dynamic environment with bleeding, tissue deformation, changing views, equipment issues, and patient-specific anatomy. A strong system has to know what it can automate, when it should hand control back, and how to keep the surgeon meaningfully in charge.

This update reflects the category as of March 22, 2026. It focuses on the parts of surgical robotics that are most credible now: surgeon-in-the-loop control, anatomy recognition, surgical phase intelligence, patient-specific planning, imaging-guided navigation, step-level autonomy in constrained tasks, force feedback, AI-augmented simulation, remote collaboration, and regulated platform maturity.

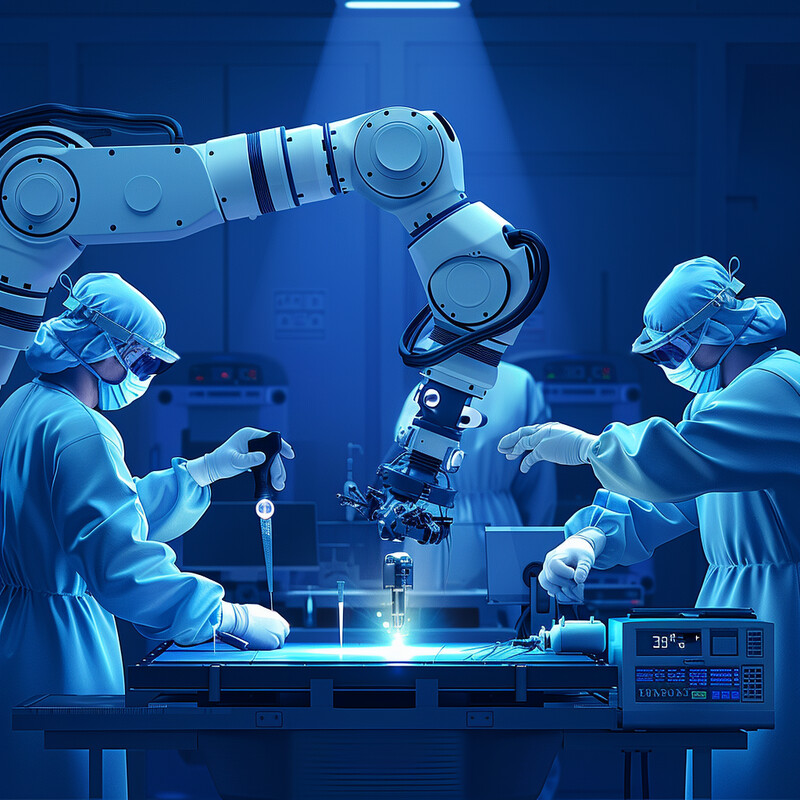

1. Shared Autonomy Instead of Surgeon-Free Autonomy

The most credible direction for surgical robots is not full independence. It is systems that stabilize motion, constrain risk, automate well-bounded subtasks, and still keep the surgeon responsible for goals, supervision, and takeover.

The FDA's current computer-assisted surgical systems page still states that robotically assisted surgical devices cannot perform surgery without direct human control. A 2024 systematic review of FDA-cleared surgical robots likewise found that most systems remained at Level 1 robot assistance, with 86% of cleared devices in that category and only 6% reaching Level 3 conditional autonomy. A 2025 vitreoretinal study then showed the benefits and potential of task autonomy in a realistic surgical eye model, but also concluded that further work is needed for more complex tasks. Inference: the real clinical path is supervised shared autonomy, not near-term surgeon-free operating rooms.
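The handover logic behind supervised shared autonomy can be sketched in a few lines. This is a minimal illustration, not any vendor's control stack: the `Motion` type, the `arbitrate` function, the 0.9 confidence threshold, and the 0.5 mm step limit are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Motion:
    """A small tool-tip displacement in millimetres (hypothetical unit)."""
    dx: float
    dy: float
    dz: float


def clamp(value: float, limit: float) -> float:
    """Restrict a value to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def arbitrate(
    surgeon: Motion,
    assist: Motion,
    assist_confidence: float,
    surgeon_takeover: bool,
    min_confidence: float = 0.9,   # assumed threshold, for illustration only
    max_step_mm: float = 0.5,      # assumed per-cycle motion limit
) -> Tuple[str, Motion]:
    """Pick whose command is executed this cycle, then bound its size.

    The surgeon's command wins whenever the surgeon asks for control or
    the assist layer is not confident enough. Whichever command is chosen
    is clamped so a single cycle can never move more than max_step_mm
    along any axis.
    """
    if surgeon_takeover or assist_confidence < min_confidence:
        source, chosen = "surgeon", surgeon
    else:
        source, chosen = "assist", assist
    limited = Motion(
        clamp(chosen.dx, max_step_mm),
        clamp(chosen.dy, max_step_mm),
        clamp(chosen.dz, max_step_mm),
    )
    return source, limited
```

The design choice worth noting is that takeover and low confidence are checked before anything else, so the automated suggestion is only ever executed as an exception the surgeon has allowed, never as the default.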

2. Anatomy Recognition as an Intraoperative Safety Layer

One of the clearest wins for AI in surgery is helping teams recognize where they are in anatomy, especially when blood, blur, tools, and unfamiliar views make safe structures harder to identify.

In a 2024 npj Digital Medicine study of endoscopic pituitary surgery, AI assistance improved overall sella recognition from a mean Dice score of 70.7% to 77.5% across 24 participants, and improved safe-entry centroid recognition from 79.2% to 100%. Medical students improved the most, rising from a Dice score of 66.2% to 78.9%, and trainees reached expert-level anatomy recognition proficiency with AI assistance. False-positive and false-negative errors also dropped. The reduction in false positives is especially important because those errors can lead to damage to critical structures such as the carotid artery. Inference: AI vision is becoming a meaningful safety layer when it helps surgeons and trainees recognize the right anatomy at the right moment.
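For readers unfamiliar with the overlap metric quoted above, the sketch below computes a Dice score for two binary masks. It is a generic illustration of the standard formula, 2 x overlap / (size of A + size of B), and not the study's evaluation code. The tiny masks and the `dice_score` name are made up for the example.

```python
def dice_score(predicted, reference):
    """Dice overlap between two same-shaped binary masks (nested lists).

    Returns a value from 0.0 (no overlap) to 1.0 (identical masks).
    Two empty masks are treated as a perfect match by convention here.
    """
    flat_pred = [bool(v) for row in predicted for v in row]
    flat_ref = [bool(v) for row in reference for v in row]
    if len(flat_pred) != len(flat_ref):
        raise ValueError("masks must contain the same number of pixels")
    overlap = sum(p and r for p, r in zip(flat_pred, flat_ref))
    total = sum(flat_pred) + sum(flat_ref)
    return 1.0 if total == 0 else 2.0 * overlap / total


# Hypothetical 3x4 masks: 1 marks pixels labelled as the target structure.
reference_mask = [
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
annotated_mask = [
    [0, 1, 1, 0],
    [0, 1, 0, 0],
    [0, 1, 0, 0],
]

# 3 shared pixels, 4 pixels in each mask: 2 * 3 / (4 + 4) = 0.75
print(dice_score(annotated_mask, reference_mask))
```

Because both false positives and false negatives shrink the overlap relative to the combined mask size, a single Dice number cannot say which kind of error dominated, which is why the study's separate error counts matter.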

3. Surgical Phase Recognition and Workflow Intelligence

Surgical robots get smarter when they know what phase of a procedure is happening, which tools are in use, and what tasks are likely to come next.

A 2026 validation study in robot-assisted radical prostatectomy used more than 919,000 frames for development and found that AI surgical phase recognition reached a precision of 0.94 on videos from the development surgeon and 0.83 on cross-surgeon validation videos. Earlier work on edge computing for real-time phase recognition also showed that live phase segmentation can run in the operating room and support workflow optimization. At the commercial layer, Medtronic's Touch Surgery Performance Insights already exposes phase timing metrics. It identifies workflow analysis, instrument tracking, scene segmentation, and critical-structure identification as the building blocks for future surgical AI, while explicitly noting that live decision support is not yet released. Inference: phase intelligence is moving from research novelty to practical OR telemetry, but most deployed systems still use it first for monitoring, review, and coaching rather than live autonomous action.
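To make the precision figures concrete, here is a minimal sketch of frame-level precision for phase labels. It assumes one predicted and one reference label per video frame and averages precision equally across phases. The study's exact averaging scheme is not stated here, so treat that as an assumption. The phase names and function names are hypothetical.

```python
from collections import Counter


def per_phase_precision(predicted, actual):
    """Precision for each predicted phase: correct frames / predicted frames."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not predicted:
        raise ValueError("at least one frame is required")
    predicted_counts = Counter(predicted)
    correct_counts = Counter(p for p, a in zip(predicted, actual) if p == a)
    return {
        phase: correct_counts[phase] / count
        for phase, count in predicted_counts.items()
    }


def macro_precision(predicted, actual):
    """Unweighted mean of the per-phase precision values."""
    scores = per_phase_precision(predicted, actual)
    return sum(scores.values()) / len(scores)


# Hypothetical six-frame clip with made-up phase names.
actual_phases = ["dissection", "dissection", "suturing", "suturing", "suturing", "idle"]
predicted_phases = ["dissection", "suturing", "suturing", "suturing", "dissection", "idle"]

scores = per_phase_precision(predicted_phases, actual_phases)
print(scores)
print(round(macro_precision(predicted_phases, actual_phases), 3))
```

A drop like the one reported between same-surgeon and cross-surgeon videos shows up in this calculation as more frames being assigned to a phase that the reference annotation does not agree with.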

4. Patient-Specific Preoperative Planning

AI improves robotic surgery when it helps teams decide how to approach this patient, this anatomy, and this oncologic or reconstructive tradeoff before the first port ever goes in.

A 2025 systematic review and meta-analysis of robotic radical prostatectomy planning interventions found that positive surgical margins were lower when preoperative planning used clinical nomograms or MRI, with risk ratios of 0.56 for nomogram-based planning and 0.72 for MRI-based planning. A 2026 comparative study in knee arthroplasty then showed that AI-assisted planning combined with robotic execution improved prosthesis prediction accuracy, shortened osteotomy time, reduced blood loss, and improved early pain and function versus conventional planning and surgery. Inference: robotic systems become more clinically useful when AI helps tailor the surgical plan to the actual patient rather than simply automating a generic procedure template.
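The risk ratios above are easier to read as relative reductions. The short sketch below does that arithmetic. The two risk ratios come from the text, while the 25% baseline margin rate is purely hypothetical and is included only to show how a ratio scales an absolute risk.

```python
def relative_risk_reduction(risk_ratio):
    """Relative reduction implied by a risk ratio (1 - RR)."""
    if risk_ratio < 0:
        raise ValueError("a risk ratio cannot be negative")
    return 1.0 - risk_ratio


def expected_risk(baseline_risk, risk_ratio):
    """Risk in the intervention group for a given baseline risk."""
    if not 0.0 <= baseline_risk <= 1.0:
        raise ValueError("baseline_risk must be a probability")
    return baseline_risk * risk_ratio


# Risk ratios reported in the meta-analysis summary above.
reported = {"nomogram-based planning": 0.56, "MRI-based planning": 0.72}

# Hypothetical baseline, NOT taken from the study.
assumed_baseline = 0.25

for label, rr in reported.items():
    reduction = relative_risk_reduction(rr)
    with_planning = expected_risk(assumed_baseline, rr)
    print(f"{label}: {reduction:.0%} relative reduction, "
          f"{assumed_baseline:.0%} -> {with_planning:.1%} at the assumed baseline")
```

A risk ratio says nothing about the absolute rate on its own, so the same 0.56 would mean a much smaller absolute change at a center whose baseline margin rate is already low.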

5. Imaging-Guided Navigation and Augmented Reality

The combination of robotics, navigation, and augmented reality is strongest when it helps surgeons place tools more accurately while staying oriented to a patient-specific 3D plan.

A randomized multicenter clinical trial of augmented-reality spinal navigation enrolled 150 patients and found that excellent-or-good pedicle screw placement rose from 91.7% in the conventional group to 98.0% in the AR-guided group, with superiority confirmed across multiple analysis sets. The study concluded that AR navigation improved implantation accuracy and provided precise intraoperative guidance. Inference: the strongest role for AI-guided surgical robotics is often not freeform autonomy, but tighter alignment between planned and executed trajectories under live image guidance.

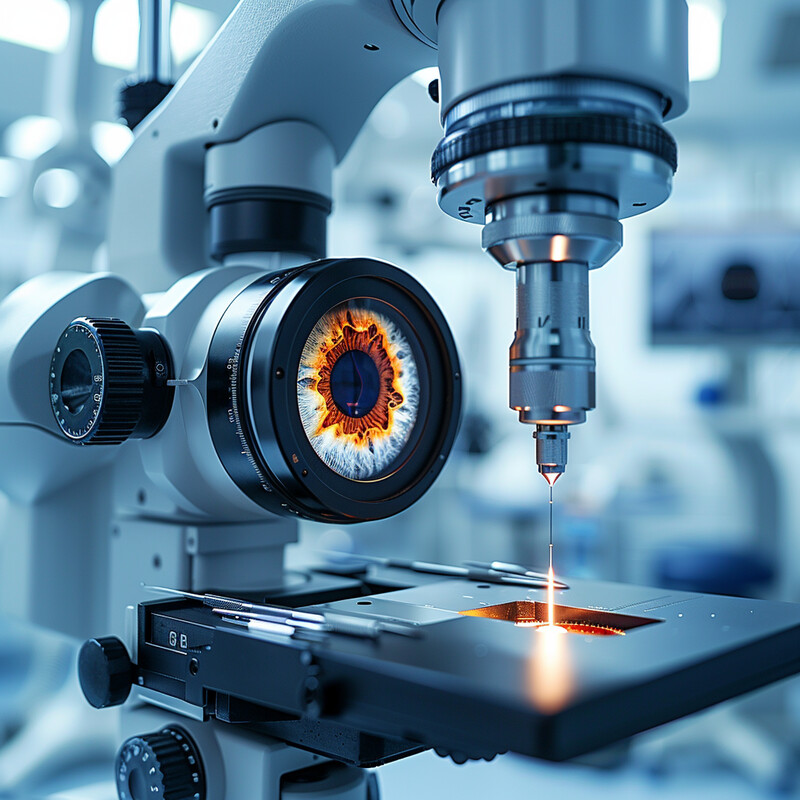

6. Step-Level Autonomy in Constrained Surgical Tasks

Autonomy is most believable when it is demonstrated on bounded surgical steps with explicit limits, not when ex vivo success is stretched into a claim that whole human operations are already solved.

In July 2025, Kim and colleagues reported in Science Robotics that the SRT-H system achieved a 100% success rate across eight different ex vivo gallbladders while operating autonomously on a step of cholecystectomy without human intervention. The paper described this as step-level autonomy in a realistic ex vivo procedure and framed it as a milestone toward clinical deployment, not as proof that unsupervised human surgery is solved. Inference: autonomous surgical robots are advancing, but the meaningful 2026 story is robust autonomy in constrained subtasks under tight evaluation, not blanket independence across messy live human cases.
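A quick calculation shows why a perfect score on a small sample still calls for caution. The sketch below computes the exact one-sided lower confidence bound on the true success rate when every one of n trials succeeds, which is alpha to the power 1/n. Treating the eight gallbladders as eight independent trials is a simplifying assumption for illustration, not the paper's own analysis.

```python
def lower_bound_all_successes(trials, alpha=0.05):
    """Exact one-sided lower confidence bound when all trials succeed.

    With n successes out of n, the lowest true success rate p that is
    still compatible with the data at level alpha satisfies p**n = alpha.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must be between 0 and 1")
    return alpha ** (1.0 / trials)


# Eight out of eight successes, as in the ex vivo result described above.
bound = lower_bound_all_successes(8)
print(f"95% one-sided lower bound: {bound:.1%}")
```

Under these assumptions the data alone cannot rule out a true success rate somewhat below 70%, which is consistent with framing the result as a milestone rather than a solved problem.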

7. Force Feedback and Tissue-Sparing Control

One of the clearest ways to make robotic surgery gentler is to let surgeons feel or see how much force they are applying before tissue injury becomes obvious.

A preclinical study of integrated force feedback in a commercially available robotic system found that during retraction tasks, mean force fell from 3.25 N without feedback to 1.86 N at the high-feedback setting, while maximal force dropped from 10.57 N to 6.77 N. A 2025 pilot clinical study of 68 da Vinci 5 colorectal cases then found that higher force-feedback settings were associated with lower force applied to tissue, with applied force trending down from 1.85 N to 1.54 N, while complex cases still generated higher force overall. Intuitive's real-time Force Gauge update in September 2025 added a live console indicator of relative force at the instrument tip. Inference: force-aware robotics is becoming one of the most practical ways AI and sensing can reduce tissue trauma without trying to automate the surgeon out of the loop.
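The force figures above can be put on a common scale as percentage reductions. The sketch below does only that arithmetic on the numbers quoted in the text. The `percent_reduction` helper and the labels are illustrative names.

```python
def percent_reduction(before, after):
    """Percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("the starting value must be positive")
    return (before - after) / before * 100.0


# (force without or at lower feedback, force at higher feedback), in newtons.
reported_forces = {
    "preclinical mean retraction force": (3.25, 1.86),
    "preclinical maximal retraction force": (10.57, 6.77),
    "clinical pilot trend": (1.85, 1.54),
}

for label, (before, after) in reported_forces.items():
    print(f"{label}: {percent_reduction(before, after):.1f}% lower")
```

The preclinical reductions work out to roughly 43% and 36%, while the clinical trend is closer to 17%, a reminder that bench results and live cases are not directly comparable.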

8. AI-Augmented Simulation and Skill Coaching

AI is already useful in surgery training when it measures motion, timing, force, and errors continuously, then turns those signals into more objective feedback than many trainees can get from limited faculty time alone.

A 2025 randomized crossover trial found that real-time AI-mediated training produced superior learning outcomes to human-mediated feedback across several simulated neurosurgical skill metrics, including higher ICEMS scores and stronger first-session OSATS performance. The authors argued that the best future model is a hybrid one in which AI monitoring informs human instruction. Earlier randomized work had already shown that AI tutoring could match or exceed expert instruction in simulated surgical skills, and a 2026 systematic review of AI-enhanced microsurgical training found benefits in reduced errors and steeper learning curves through real-time feedback. Inference: one of the most mature uses of AI around surgical robots is objective, adaptive coaching that helps surgeons become safer operators faster.

9. Telepresence, Remote Proctoring, and Distributed Expertise

A quieter but important advance is that robotic platforms are turning remote observation, mentoring, and case review into built-in capabilities instead of one-off workarounds.

Medtronic's Hugo clearance materials say the system connects with the Touch Surgery ecosystem for preoperative training, remote tele-proctoring, and AI-powered postoperative case insights, with secure access to case videos seconds after completion. Intuitive's My Intuitive+ platform similarly describes AI-powered video analysis, objective skills assessment, targeted video review, and Telepresence for real-time case observation and mentoring. Inference: surgical robotics is increasingly becoming a connected learning platform, where expertise can be distributed across sites without pretending that the robot itself is ready to replace clinical supervision.

10. Regulated Platform Expansion and Safety Engineering

The field is getting stronger not because autonomy headlines are louder, but because more systems are entering regulated pathways with clearer safety standards, modular designs, and defined clinical indications.

The FDA recognized the updated IEC 80601-2-77 standard for robotically assisted surgical equipment in May 2025, including classes that now span open microsurgery, modular systems, and systems with the user interface in the sterile field. The agency also authorized Distalmotion's Dexter L6 system in October 2024, while Medtronic received FDA clearance for Hugo in December 2025 and Intuitive had already received clearance for da Vinci 5 in March 2024. Inference: the strongest 2026 story in surgical robotics is not sudden full autonomy, but a broader, more standardized, more competitive platform base that can support safer incremental AI features over time.

Related AI Glossary

- Shared Autonomy explains the control model where a person and an intelligent machine divide work instead of giving all authority to either one.

- Human in the Loop covers the review and escalation model that keeps clinicians responsible for high-stakes decisions.

- Computer Vision covers anatomy recognition, instrument tracking, segmentation, and visual understanding in the operating room.

- Teleoperation explains remote control and supervision patterns that still matter as robotic surgery becomes more networked.

- Collaborative Robot (Cobot) helps frame the broader idea of machines working alongside people instead of replacing them outright.

- Simulation-Based Training covers the rehearsal and coaching layer where much of surgical AI is already paying off.

- Workflow Orchestration explains the sequencing of models, tools, reviews, and actions around a real surgical AI workflow.

Sources and 2026 References

- FDA: Computer-Assisted Surgical Systems.

- npj Digital Medicine: Levels of autonomy in FDA-cleared surgical robots.

- PubMed: Evaluation of (Shared) Autonomy in Robot-Assisted Vitreoretinal Surgery Using a Surgical Model.

- npj Digital Medicine: Artificial intelligence assisted operative anatomy recognition in endoscopic pituitary surgery.

- PubMed: AI-based surgical phase recognition in robot-assisted radical prostatectomy.

- PubMed: Edge computing for real-time surgical phase recognition.

- Medtronic: Touch Surgery Performance Insights.

- PubMed: Preoperative planning in robotic-assisted radical prostatectomy.

- PubMed: AI-assisted preoperative planning with robotic total knee arthroplasty.

- PubMed: AR navigation improves pedicle screw placement accuracy.

- PubMed: SRT-H hierarchical framework for autonomous surgery.

- Johns Hopkins: Robot performs first realistic surgery without human help.

- PMC: Evaluation of forces applied to tissues using novel force feedback technology.

- PubMed: Clinical pilot study of da Vinci 5 force feedback.

- Intuitive: Real-Time Surgical Insights for da Vinci 5.

- npj Artificial Intelligence: Combining real-time AI and in-person expert instruction.

- PubMed: AI tutoring vs expert instruction in simulated surgical skills.

- npj Digital Medicine: AI-enhanced microsurgical training systematic review.

- Medtronic: Hugo FDA clearance.

- Intuitive: My Intuitive+.

- FDA: IEC 80601-2-77 recognized consensus standard.

- FDA: Dexter L6 authorization in FDA Roundup.

- Intuitive: da Vinci 5 FDA clearance.

Related Yenra Articles

- Clinical Decision Support Systems covers the recommendation and review layer that increasingly informs surgical planning and perioperative judgment.

- Cancer Treatment Planning extends the imaging, margin, and guidance story into oncology workflows where surgical precision matters most.

- Immersive Skill Training Simulations shows the training side of AI-enabled procedural rehearsal and feedback.

- Biomechanical Modeling for Prosthetics connects to shared autonomy, human-machine control, and sensor-guided movement in another high-stakes clinical domain.

- Personalized Medicine broadens the patient-specific planning layer that increasingly shapes robotic interventions before the case starts.