Artistic creation tools get stronger with AI when the category is treated as a set of working creative systems instead of one fuzzy promise about "AI art." In 2026, the most credible gains come from multimodal image ideation, governed style control, creator-directed recoloring, music continuation, sketch-aware painting surfaces, inpainting, texture generation, disclosure-aware speech synthesis, motion ideation, and narrative systems that keep track of state instead of improvising blindly.

That matters because modern creative workflows are no longer linear. Artists now move across prompt drafting, rough sketches, masked revisions, reference images, voice prototypes, color variants, and branchable story structures in the same project. The strongest tools do not replace authorship. They compress repetitive work, expose more options faster, and make it easier to steer results through prompt engineering, visual references, and lighter-weight model adaptation such as LoRA.

This update reflects the category as of March 20, 2026. It focuses on the parts of the field that feel most mature now: reference-aware image generation, style steering with explicit human control, archival and commercial recolor workflows, co-composition, brush-driven sketch interfaces, local image edits, reusable vector and texture assets, dubbed or synthetic character voices, choreography prototyping, and structured interactive storytelling. Across these areas, the common technical threads are diffusion models, multimodal learning, and provenance-aware creative pipelines.

1. Automated Image Generation

Automated image generation is strongest when it functions as a fast concepting surface for art direction, iteration, and reference exploration rather than as a one-click substitute for finished craft.

OpenAI said on March 25, 2025 that GPT-4o image generation had become a native multimodal capability, with support for detailed prompt following, uploaded-image context, transparent backgrounds, and C2PA provenance metadata. Adobe followed on April 24, 2025 by positioning Firefly as a unified environment for image, video, audio, and vector generation rather than a standalone image toy. Inference: image generation has moved from novelty rendering toward a practical front-end for ideation and art direction, especially when creators need several plausible directions before committing to manual finishing.

2. Style Transfer and Reference Style Control

Style transfer now matters less as a novelty filter and more as a controlled workflow for steering surface qualities, composition cues, and visual mood while keeping the creator in charge of what should and should not carry over.

CVPR 2025 introduced HSI, a holistic style injector for arbitrary style transfer designed to improve content preservation and style application across complex scenes. At the same time, a 2025 CHI paper on illustrators' perception of AI style transfer found that creators saw real economic and authorship risk in systems that reproduce signature aesthetics without permission. Inference: style control is improving technically, but the strongest professional workflows are the ones that pair better controls with clearer consent, attribution, and policy boundaries around living artists' styles.
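
Controlled style transfer can be made concrete with adaptive instance normalization (AdaIN), a classic statistic-matching technique from earlier style-transfer research. The sketch below is AdaIN, not HSI's method. Feature maps are modeled as plain Python lists of channels. The `alpha` strength and the `channels` selection are illustrative stand-ins for the kind of controls that let a creator decide how much of a reference carries over, and which parts.

```python
import math
from typing import List, Optional, Set, Tuple

# A "feature map" is modeled as a list of channels; each channel is a flat list of activations.
FeatureMap = List[List[float]]


def channel_stats(channel: List[float], eps: float = 1e-5) -> Tuple[float, float]:
    """Return the mean and epsilon-stabilized standard deviation of one channel."""
    mean = sum(channel) / len(channel)
    var = sum((x - mean) ** 2 for x in channel) / len(channel)
    return mean, math.sqrt(var + eps)


def adain(content: FeatureMap, style: FeatureMap, alpha: float = 1.0,
          channels: Optional[Set[int]] = None) -> FeatureMap:
    """AdaIN with two illustrative creator-facing controls:
    alpha blends stylized and original features (0 = content only, 1 = full style),
    and `channels` restricts transfer to chosen channel indices (None = all)."""
    if len(content) != len(style):
        raise ValueError("content and style need the same number of channels")
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    out: FeatureMap = []
    for i, (c_ch, s_ch) in enumerate(zip(content, style)):
        if channels is not None and i not in channels:
            out.append(list(c_ch))  # channel deliberately left untouched
            continue
        c_mean, c_std = channel_stats(c_ch)
        s_mean, s_std = channel_stats(s_ch)
        # Normalize with content statistics, then re-scale with style statistics.
        stylized = [(x - c_mean) / c_std * s_std + s_mean for x in c_ch]
        out.append([alpha * s + (1.0 - alpha) * x for s, x in zip(stylized, c_ch)])
    return out
```

In a real system these statistics would come from a pretrained encoder's feature maps, and a decoder would turn the result back into pixels. Both are omitted here.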

3. Colorization and Recolor Workflows

Colorization tools matter most when they support human-guided restoration and fast palette exploration, not when they claim to know the one true color answer automatically.

TU Graz described its RE:Color work as a user-controlled system for realistic black-and-white film colorization. The operator guides historically accurate colors, and the model propagates them efficiently across frames. Adobe's Generative Recolor workflow in Illustrator similarly frames recolor as rapid theme and palette exploration from text prompts over existing vector art. Inference: the strongest color workflows are increasingly hybrid. Humans define taste, brand, or historical truth, and the model handles the repetitive propagation and variation work.
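
This division of labor, where a person fixes key colors and software propagates the rest, can be illustrated with a small recolor sketch. It is not Adobe's Generative Recolor or TU Graz's method. It is a hypothetical rank-based mapping that assumes fills are RGB tuples. The mapping preserves the artwork's light-to-dark ordering and leaves human-locked colors untouched.

```python
from typing import Dict, Iterable, List, Optional, Set, Tuple

RGB = Tuple[int, int, int]


def luminance(color: RGB) -> float:
    """Rec. 709 luma weights applied to raw 8-bit values (gamma ignored for simplicity)."""
    r, g, b = color
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def recolor_by_rank(fills: Iterable[RGB], palette: List[RGB],
                    locked: Optional[Set[RGB]] = None) -> Dict[RGB, RGB]:
    """Map each distinct fill color to a palette color so the artwork's light-to-dark
    ordering is preserved. Fill colors in `locked` (for example brand or historically
    verified colors chosen by a person) map to themselves."""
    if not palette:
        raise ValueError("palette must not be empty")
    locked = locked or set()
    fill_set = set(fills)
    sources = sorted(fill_set - locked, key=luminance)
    targets = sorted(palette, key=luminance)
    mapping: Dict[RGB, RGB] = {c: c for c in fill_set & locked}
    for i, src in enumerate(sources):
        # Spread the sources evenly across the palette when the counts differ.
        j = round(i * (len(targets) - 1) / max(len(sources) - 1, 1))
        mapping[src] = targets[j]
    return mapping
```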

4. Music Composition and Arrangement Drafting

AI music tools are strongest when they act like co-composition systems that continue, bridge, arrange, or reharmonize ideas instead of pretending to replace the musician's taste, structure, and revision process.

Google DeepMind's April 2025 expansion of Music AI Sandbox emphasized tools for generating instrumental ideas, transforming vocal arrangements, and helping musicians experiment with production faster. Apple's research on controllable music production with diffusion models describes continuation, inpainting, regeneration, smooth transitions, and style transfer in 44.1 kHz stereo audio as realistic production tasks rather than abstract benchmarks. Inference: the practical center of gravity in AI music is co-creation inside existing workflows, especially for arrangement, transition building, and rapid prototyping.
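
Transition building is one of the tasks named above. Apple's paper handles smooth transitions with diffusion guidance. For comparison, the sketch below shows the much older signal-processing baseline: an equal-power crossfade over raw sample lists. The function name and the list-of-floats audio representation are illustrative assumptions.

```python
import math
from typing import List


def equal_power_crossfade(a: List[float], b: List[float], overlap: int) -> List[float]:
    """Join clip `a` into clip `b`, mixing the last `overlap` samples of `a`
    with the first `overlap` samples of `b` using equal-power gain curves."""
    if overlap < 0 or overlap > min(len(a), len(b)):
        raise ValueError("overlap must fit inside both clips")
    head = a[: len(a) - overlap]
    mixed = []
    for n in range(overlap):
        t = (n + 0.5) / overlap               # position inside the fade, strictly between 0 and 1
        gain_out = math.cos(t * math.pi / 2)  # outgoing clip fades down
        gain_in = math.sin(t * math.pi / 2)   # incoming clip fades up
        # cos^2 + sin^2 = 1, so uncorrelated material keeps roughly constant loudness.
        mixed.append(a[len(a) - overlap + n] * gain_out + b[n] * gain_in)
    return head + mixed + b[overlap:]
```

A fixed crossfade knows nothing about key, tempo, or harmony. That gap is why learned transition and continuation tools are useful in arrangement work.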

5. Dynamic Brush and Sketch Surfaces

Brush-based AI tools matter because many artists think spatially and gesturally before they think in prompts. The best systems preserve that feeling instead of forcing every idea through text alone.

NVIDIA Canvas continues to frame AI painting as a material-based brush workflow, where artists paint with rough categories like sky, grass, or rock and get a coherent landscape in real time. A 2025 paper called DiffBrush pushed the same direction in research, showing how hand-drawn edits can guide diffusion outputs without retraining the whole model. Inference: sketch-aware interfaces are becoming one of the most important design patterns in creative AI because they bring model power closer to how visual artists already work.
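
The material-brush idea can be sketched as a label map. Each stroke writes a material ID into a grid, and the grid is then split into one mask per material, a common way to condition an image generator on a semantic layout. The source cited here does not document Canvas internals, so the material vocabulary, brush shape, and function names below are hypothetical. The generator itself is omitted.

```python
from typing import Dict, List

# Hypothetical material vocabulary; a real tool defines its own label set.
MATERIALS: Dict[str, int] = {"sky": 0, "grass": 1, "rock": 2, "water": 3}
LabelMap = List[List[int]]


def blank_canvas(width: int, height: int, fill: str = "sky") -> LabelMap:
    """Start with every pixel assigned to one material."""
    return [[MATERIALS[fill]] * width for _ in range(height)]


def paint_disc(canvas: LabelMap, cx: int, cy: int, radius: int, material: str) -> None:
    """Stamp a round brush of one material label onto the map, in place, clipped to the canvas."""
    label = MATERIALS[material]
    height, width = len(canvas), len(canvas[0])
    for y in range(max(0, cy - radius), min(height, cy + radius + 1)):
        for x in range(max(0, cx - radius), min(width, cx + radius + 1)):
            if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2:
                canvas[y][x] = label


def one_hot(canvas: LabelMap) -> List[List[List[int]]]:
    """Split the label map into one binary mask per material (channels x height x width)."""
    return [[[1 if v == label else 0 for v in row] for row in canvas]
            for label in range(len(MATERIALS))]
```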

6. Inpainting and Local Image Revision

Local editing is one of the most commercially useful AI art capabilities because creators often need to fix part of an image, not regenerate the whole thing from scratch.

Adobe's current Photoshop documentation treats Generative Fill and reference-image controls as standard editing surfaces for adding, removing, and modifying selected regions while keeping the workflow non-destructive. Research is moving the same way: SmartFreeEdit, posted in 2025, combines multimodal reasoning, region-aware tokens, and hypergraph-enhanced inpainting for more precise instruction-led edits in complex scenes. Inference: inpainting is becoming the practical bridge between classic image editing and modern generative AI, because it turns "make me a new picture" into "fix this exact part."
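
A local edit leaves the rest of an image alone because of masked compositing. The sketch below assumes a grayscale image stored as nested lists and an explicit selection mask; SmartFreeEdit, by contrast, is mask-free. It keeps original pixels where the mask is 0, takes generated pixels where it is 1, and can feather the mask edge with a box blur. It illustrates the general principle, not Adobe's Generative Fill implementation.

```python
from typing import List

Image = List[List[float]]  # grayscale values in [0, 1]


def feather(mask: Image, radius: int) -> Image:
    """Box-blur a mask so an edit blends into its surroundings instead of ending at a hard edge."""
    h, w = len(mask), len(mask[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += mask[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out


def composite(original: Image, generated: Image, mask: Image) -> Image:
    """Keep original pixels where mask is 0, take generated pixels where it is 1, blend in between."""
    return [
        [m * g + (1.0 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]
```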

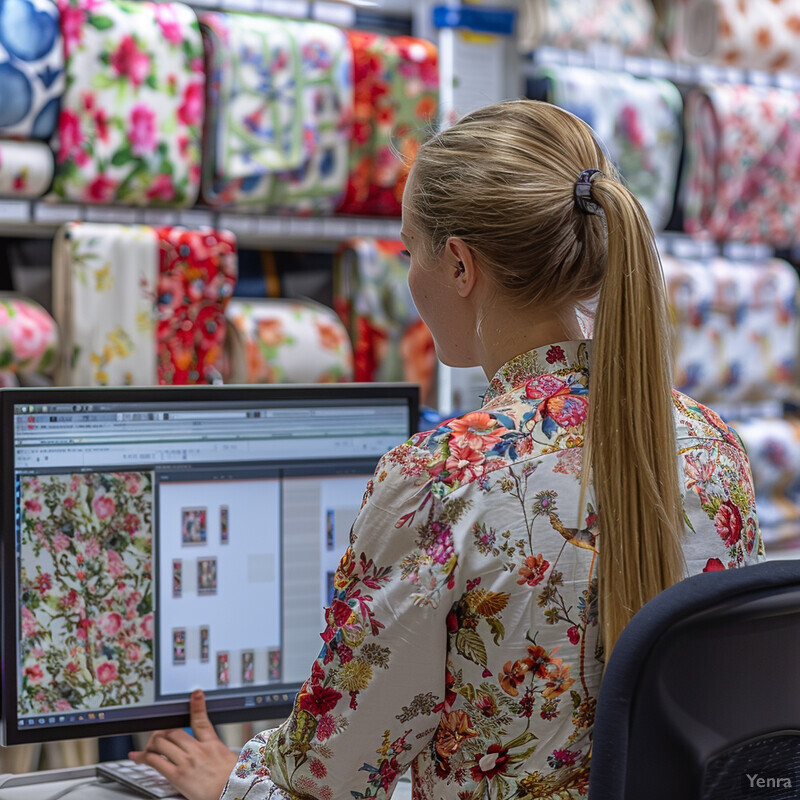

7. Pattern, Texture, and Vector Asset Generation

Pattern and texture generation matters because real creative production depends on reusable assets, not just one-off hero images. Designers need motifs, surfaces, vectors, and variations they can carry into larger systems.

Adobe's Firefly-era vector recoloring work positioned text-driven color and palette variation as something creators can apply directly to existing vector artwork inside Illustrator workflows. Research in 2025 pushed further into texture generation for 3D shapes through methods like MD-ProjTex and UniTEX, both built around diffusion-based texture synthesis with stronger cross-view consistency. Inference: the field is moving beyond isolated pictures toward reusable asset generation, where AI helps create patterns, materials, and design components that survive beyond a single prompt session.
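
A texture is reusable partly because it tiles without visible seams. MD-ProjTex and UniTEX address cross-view consistency on 3D shapes, which this sketch does not attempt. It covers only the simpler 2D case, assuming a grayscale texture of at least 2x2 pixels. It compares the jump across the wrap-around edge with ordinary neighbor-to-neighbor jumps. It also includes a wrap-around offset helper; offsetting by half the texture size is the usual way artists bring seams into view.

```python
import math
from typing import List

Texture = List[List[float]]  # grayscale, at least 2x2


def offset_wrap(tex: Texture, dx: int, dy: int) -> Texture:
    """Shift a texture with wrap-around; a half-size offset moves any tiling seam to the center."""
    h, w = len(tex), len(tex[0])
    return [[tex[(y - dy) % h][(x - dx) % w] for x in range(w)] for y in range(h)]


def _wrap_ratio(rows: Texture) -> float:
    """Mean jump across the wrap boundary divided by the mean jump between ordinary neighbors."""
    interior = [abs(r[x + 1] - r[x]) for r in rows for x in range(len(r) - 1)]
    wrap = [abs(r[0] - r[-1]) for r in rows]
    mean_interior = sum(interior) / len(interior)
    mean_wrap = sum(wrap) / len(wrap)
    if mean_interior == 0:
        return 1.0 if mean_wrap == 0 else math.inf
    return mean_wrap / mean_interior


def seam_ratio(tex: Texture) -> float:
    """Worst of the horizontal and vertical ratios; values far above 1 suggest a visible seam."""
    columns = [list(col) for col in zip(*tex)]
    return max(_wrap_ratio(tex), _wrap_ratio(columns))
```

A plain left-to-right gradient scores badly because its wrap edge jumps from brightest to darkest. A periodic pattern stays close to 1.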

8. Voice Synthesis and Character Voice Design

Voice AI is strongest in creative work when it speeds up prototyping, dubbing, localization, and character testing while keeping consent, disclosure, and editorial review in the loop.

OpenAI's March 20, 2025 release of new audio models emphasized steerable text-to-speech and stronger speech recognition as building blocks for expressive narration and voice agents. ElevenLabs' Dubbing Studio documentation shows the production side of that shift, with speaker cards, editable transcripts and translations, timeline control, and manual dub workflows for preserving performance across languages. Inference: voice generation is becoming less about novelty cloning and more about structured voice production with speaker management, transcript control, and localization at scale.
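
Much of structured voice production comes down to bookkeeping over timed, per-speaker segments. The sketch below models that with a hypothetical `Segment` record; its field names are not ElevenLabs' API. It runs two checks. The first finds overlapping lines for one speaker. The second finds synthesized lines that would need speeding up beyond an assumed 1.15x limit to fit their original slot, and routes them to a human editor instead of stretching them automatically.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Segment:
    speaker: str        # speaker-card identifier
    start: float        # seconds in the source video
    end: float
    source_text: str    # editable transcript line
    translation: str    # editable target-language line


def same_speaker_overlaps(segments: List[Segment]) -> List[Tuple[int, int]]:
    """Flag pairs of one speaker's segments, adjacent in start order, that overlap in time."""
    by_speaker: Dict[str, List[int]] = {}
    for i, seg in enumerate(segments):
        by_speaker.setdefault(seg.speaker, []).append(i)
    overlaps = []
    for indices in by_speaker.values():
        indices.sort(key=lambda i: segments[i].start)
        for a, b in zip(indices, indices[1:]):
            if segments[b].start < segments[a].end:
                overlaps.append((a, b))
    return overlaps


def review_queue(segments: List[Segment], dub_durations: List[float],
                 max_rate: float = 1.15) -> List[Tuple[int, str, float]]:
    """List lines whose synthesized audio would need a speed-up above `max_rate`
    to fit the original slot; these go to an editor instead of being auto-stretched."""
    flagged = []
    for i, (seg, duration) in enumerate(zip(segments, dub_durations)):
        rate = duration / (seg.end - seg.start)
        if rate > max_rate:
            flagged.append((i, seg.speaker, round(rate, 2)))
    return flagged
```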

9. Choreography Assistance and Motion Ideation

AI choreography tools are strongest when they help creators draft, edit, and rehearse movement ideas for avatars, previs, or live work without pretending to eliminate the choreographer's role.

EDGE introduced editable dance generation from music with joint-wise conditioning and in-betweening, while DanceEditor in 2025 pushed toward iterative dance editing from music plus open-vocabulary descriptions. Studio Wayne McGregor's AISOMA project also remains one of the clearest real-world examples of AI being used as a choreography research tool rather than a replacement performer. Inference: the most credible progress in AI choreography comes from systems that support iteration, partial control, and rehearsal-like exploration instead of single-pass automatic routines.
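
In-betweening means filling the frames between two key poses. EDGE does this with a diffusion model conditioned on music. The sketch below uses plain linear interpolation only to show the shape of the task. It assumes poses are dictionaries of joint positions, and a hypothetical `locked` argument stands in for joint-wise constraints.

```python
from typing import Dict, List, Optional, Tuple

Vec3 = Tuple[float, float, float]
Pose = Dict[str, Vec3]  # joint name -> position


def lerp(p: Vec3, q: Vec3, t: float) -> Vec3:
    """Linearly interpolate two 3D points."""
    return tuple(a + (b - a) * t for a, b in zip(p, q))


def inbetween(start: Pose, end: Pose, frames: int,
              locked: Optional[Pose] = None) -> List[Pose]:
    """Return `frames` poses strictly between two keyframes. Joints listed in `locked`
    are pinned to the given position in every generated frame, a crude stand-in
    for joint-wise conditioning."""
    if start.keys() != end.keys():
        raise ValueError("keyframes must define the same joints")
    locked = locked or {}
    poses = []
    for k in range(1, frames + 1):
        t = k / (frames + 1)
        poses.append({joint: locked.get(joint, lerp(start[joint], end[joint], t))
                      for joint in start})
    return poses
```

Linear interpolation produces stiff, mechanical motion. Learned models earn their place by filling the same gap with plausible, music-aware movement that a choreographer can then revise.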

10. Interactive Storytelling and Narrative Systems

Interactive storytelling gets stronger when AI is used to manage branches, characters, world state, and player-specific narrative possibilities rather than to generate endless but fragile prose.

Narrative Studio and STORY2GAME, both published in 2025, treat interactive narrative as a structured workflow problem involving branching exploration, entity graphs, generated actions, and game-state consistency. The 2025 1001 Nights paper also frames co-creative storytelling as game design rather than ordinary chat. On the production side, Inworld's dynamic-relationship and knowledge-filter tooling shows how AI NPC systems are being packaged with explicit narrative constraints and player-state awareness. Inference: interactive storytelling is getting stronger where it behaves like narrative software with memory and control layers, not just like an improvisational chatbot.
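
The idea of narrative software with memory can be sketched with the preconditions-and-effects pattern from classic planning and text-adventure engines. An action is offered only when its required facts hold, and applying it updates the world state. This is a hand-written simplification that assumes facts are plain strings. It is not code from Narrative Studio, STORY2GAME, or Inworld.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Set


@dataclass(frozen=True)
class Action:
    name: str
    requires: FrozenSet[str]                 # facts that must hold before the action
    adds: FrozenSet[str] = frozenset()       # facts made true by the action
    removes: FrozenSet[str] = frozenset()    # facts made false by the action


class WorldState:
    """Tracks narrative facts so new story beats cannot contradict earlier events."""

    def __init__(self, facts: Iterable[str]):
        self.facts: Set[str] = set(facts)
        self.history: List[str] = []

    def available(self, actions: Iterable[Action]) -> List[str]:
        """Names of actions whose preconditions currently hold."""
        return [a.name for a in actions if a.requires <= self.facts]

    def apply(self, action: Action) -> None:
        """Apply an action's effects, refusing if any precondition is missing."""
        missing = action.requires - self.facts
        if missing:
            raise ValueError(f"cannot {action.name}: missing {sorted(missing)}")
        self.facts -= action.removes
        self.facts |= action.adds
        self.history.append(action.name)
```

In an LLM-driven game, the model might propose new actions or prose, and a layer like this would decide which proposals are legal given what has already happened.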

Related AI Glossary

- Generative Artificial Intelligence (GenAI) frames the broader category that now spans images, audio, motion, and interactive media.

- Diffusion Models explain the generative approach behind much of today's image, music, and editing workflow innovation.

- Inpainting covers the masked repair and local-editing workflow that has become central to creator-facing image tools.

- Prompt Engineering matters because most creator tools now depend on precise instructions, references, and constraints.

- LoRA (Low-Rank Adaptation) helps explain the lighter-weight style and domain adapters now common in creative-model customization.

- Multimodal Learning connects the text, image, audio, and motion signals that increasingly coexist inside modern creative systems.

- Speech Synthesis covers the voice-generation layer now used for dubbing, narration, and character prototyping.

Sources and 2026 References

- OpenAI: Introducing 4o Image Generation.

- Adobe Blog: Adobe Firefly: The next evolution of creative AI is here.

- CVPR 2025: HSI: A Holistic Style Injector for Arbitrary Style Transfer.

- arXiv: Copying style, Extracting value: Illustrators' Perception of AI Style Transfer and its Impact on Creative Labor.

- TU Graz: AI Algorithm Puts the Colour Back in Black and White Films.

- Adobe Blog: The future of Illustrator is here: Hue will never be the same.

- Google DeepMind: Music AI Sandbox, now with new features and broader access.

- Apple Machine Learning Research: Controllable Music Production with Diffusion Models and Guidance Gradients.

- NVIDIA: NVIDIA Canvas.

- arXiv: DiffBrush: Just Painting the Art by Your Hands.

- Adobe Help: Edit images with Generative Fill.

- Adobe Help: Use reference images for consistent results.

- arXiv: SmartFreeEdit: Mask-Free Spatial-Aware Image Editing with Complex Instruction Understanding.

- Adobe Blog: Introducing Adobe's generative AI-powered vector recoloring tools, now available in the Firefly beta.

- arXiv: MD-ProjTex: Texturing 3D Shapes with Multi-Diffusion Projection.

- arXiv: UniTEX: Universal High Fidelity Generative Texturing for 3D Shapes.

- OpenAI: Introducing next-generation audio models in the API.

- ElevenLabs Documentation: Dubbing Studio.

- ElevenLabs Help: What is Dubbing?

- arXiv: EDGE: Editable Dance Generation From Music.

- arXiv: DanceEditor: Towards Iterative Editable Music-driven Dance Generation with Open-Vocabulary Descriptions.

- Studio Wayne McGregor: AISOMA.

- arXiv: Narrative Studio: Visual narrative exploration using LLMs and Monte Carlo Tree Search.

- arXiv: STORY2GAME: Generating (Almost) Everything in an Interactive Fiction Game.

- arXiv: "I Like Your Story!": A Co-Creative Story-Crafting Game with a Persona-Driven Character Based on Generative AI.

- Inworld: Dynamic Relationships Feature.

- Inworld: Knowledge Filters.

Related Yenra Articles

- Text to Image focuses on one of the most visible visual-generation workflows now shaping artistic ideation.

- Film and Video Editing extends the same generative, dubbing, and local-revision ideas into post-production.

- Music Composition and Arranging Tools expands the co-composition side of AI creativity in sound.

- Interactive Storytelling and Narratives explores the adaptive narrative systems that overlap with creative game and media design.